Cisco’s CVE-2025-20337 flaw exposes ISE to root access via an API exploit. Affects releases 3.3 and 3.4.

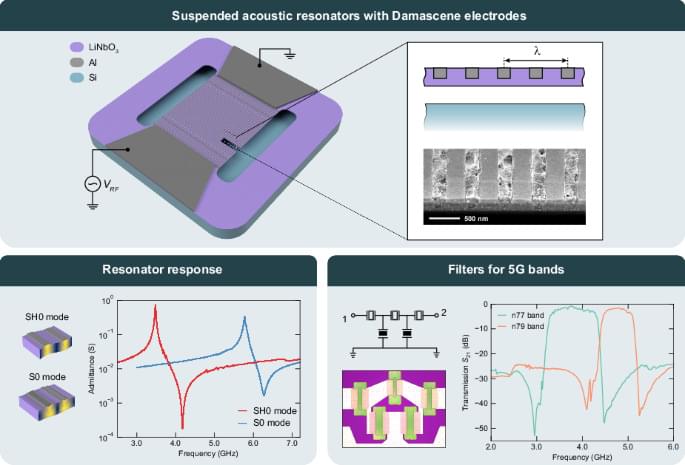

Data rates and volume for mobile communication are ever-increasing with the growing number of users and connected devices. With 5G being deployed and 6G on the horizon, wireless communication is advancing to higher frequencies and larger bandwidths, enabling higher speeds and throughput. Current micro-acoustic resonator technology, a key component in radiofrequency front-end filters, is struggling to keep pace with these developments. This work presents an acoustic resonator architecture enabling multi-frequency, low-loss, and wideband filtering for the 5G and future 6G bands located above 3 GHz. Thanks to the exceptional performance of these resonators, filters for the 5G n77 and n79 bands are demonstrated, exhibiting fractional bandwidths of 25% and 13%, respectively, with a low insertion loss of around 1 dB. With its unique frequency scalability and wideband capabilities, the reported architecture offers a promising option for filtering and multiplexing in future mobile devices.

Stettler, S., Villanueva, L.G. Suspended lithium niobate acoustic resonators with Damascene electrodes for radiofrequency filtering. Microsyst Nanoeng 11, 131 (2025). https://doi.org/10.1038/s41378-025-00980-w.
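For context on the figures quoted above, fractional bandwidth is the passband width divided by its center frequency. The short sketch below illustrates that arithmetic, assuming the 3GPP band edges for n77 (3.3 to 4.2 GHz) and n79 (4.4 to 5.0 GHz) and an arithmetic-mean center frequency. The function name `fractional_bandwidth` is hypothetical, and the paper's filters have their own measured passbands, so this only shows the scale of the numbers.

```python
# Fractional bandwidth (FBW) = passband width / center frequency.
# Assumption: band edges are the 3GPP definitions of n77 and n79 (GHz),
# used only to illustrate the arithmetic, not the paper's measured filters.

def fractional_bandwidth(f_low_ghz: float, f_high_ghz: float) -> float:
    """Return FBW as a fraction, using the arithmetic-mean center frequency."""
    if not 0 < f_low_ghz < f_high_ghz:
        raise ValueError("need 0 < f_low < f_high")
    center = (f_low_ghz + f_high_ghz) / 2
    return (f_high_ghz - f_low_ghz) / center


# Hypothetical lookup of band edges (GHz) for the two 5G bands named above.
BANDS_GHZ = {"n77": (3.3, 4.2), "n79": (4.4, 5.0)}

if __name__ == "__main__":
    for name, (lo, hi) in BANDS_GHZ.items():
        print(f"{name}: {fractional_bandwidth(lo, hi):.1%}")
```

Run as a script, this prints about 24.0% for n77 and 12.8% for n79, which puts the reported 25% and 13% in the range needed to span each band. Some texts define the center frequency as the geometric mean of the band edges instead, which shifts these values slightly.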

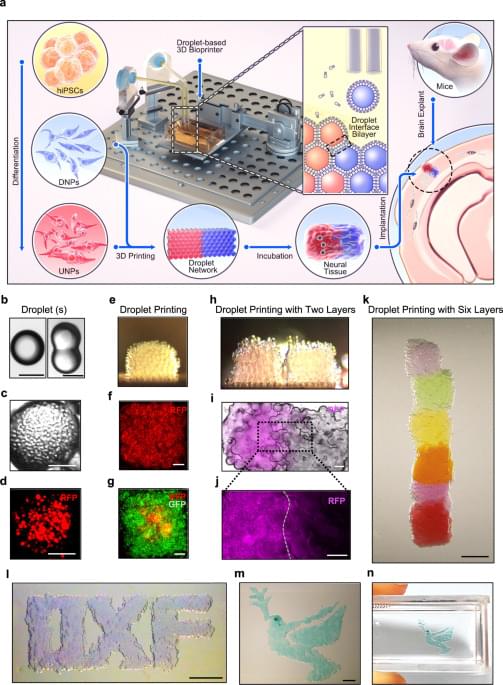

Brain injuries can result in significant damage to the cerebral cortex, and restoring the cellular architecture of the tissue remains challenging. Here, the authors use a droplet printing technique to fabricate a simplified human cerebral cortical column and demonstrate its functionality and potential for future personalized therapy approaches.

In 2024, researchers transformed readings of an epic upheaval of Earth’s magnetic field, a flip that took place roughly 41,000 years ago, into an eerie auditory experience.

Now a team including some of those same scientists has sonified a far older flip, one that happened hundreds of thousands of years earlier.

The resulting cacophony is an unnerving translation of geological data on the Matuyama-Brunhes reversal, in which the planet’s magnetic poles swapped places roughly 780,000 years ago.