Transhumanists will know that the science fiction author Zoltan Istvan has unilaterally leveraged the movement into a political party contesting the 2016 US presidential election. To be sure, many transhumanists have contested Istvan’s own legitimacy, but there is no denying that he has generated enormous publicity for many key transhumanist ideas. Interestingly, his lead idea is that the state should do everything possible to uphold people’s right to live forever. Of course, he means to live forever in a healthy state, fit of mind and body. Istvan cleverly couches this policy as simply an extension of what voters already expect from medical research and welfare provision. And while he may be correct, the policy is fraught with hazards – especially if, as many transhumanists believe, we are on the verge of revealing the secrets to biological immortality.

In June, Istvan and I debated this matter at Brain Bar Budapest. Let me say, for the record, that I think that we are sufficiently close to this prospect that it is not too early to discuss its political and economic implications.

Two months before my encounter with Istvan, I was on a panel at the Edinburgh Science Festival with the great theorist of radical life extension Aubrey de Grey, where he declared that people who live indefinitely will seem like renovated vintage cars. Whatever else, he is suggesting that they would be frozen in time. He may actually be right about this. But is such a state desirable, given that throughout history radical change has been facilitated by generational change? Specifically, two simple facts make the young open to doing things differently: The young have no memory of past practices working to anyone else’s benefit, and they have not had the time to invest in those practices to reap their benefits. Whatever good is to be found in the past is hearsay, as far as the young are concerned, which they are being asked to trust as they enter a world that they know is bound to change.

Questions have already been raised about whether tomorrow’s Methuselahs will wish to procreate at all, given the time available to them to realize dreams that in the past would have been transferred to their offspring. After all, as human life expectancy has increased by 50% over the past century, the birth rate has correspondingly dropped. One can only imagine what will happen once ageing can be arrested, if not outright reversed!

So, where will the new ideas of the future come from? The worry here is that society may end up being ruled by people with overlong memories who value stability over change: Think China and Japan. But perhaps the old Soviet Union is the most telling example, as its self-consciously revolutionary image gradually morphed into a ritualistic veneration of the original 1917 revolutionary moment. To these gerontocratic indicators, the recent UK vote to leave the European Union (‘Brexit’) adds a new twist. There were some clear age-related patterns in the outcome: The older the voter, the more likely to vote to leave – and the more likely to vote at all. To be sure, given the closeness of the vote (52% to leave vs. 48% to remain), had the young voted in comparable numbers to their elders, Brexit would have lost.

One might think that the simple solution is to encourage, if not force, the young to vote in larger numbers. However, this does not take into account the liabilities of their elders when it comes to dictating the terms for living in the future. Whatever benefits might accrue to people living longer, the clarity of the memories of such people may not be an unmitigated good, as it might incline them to perpetuate what they regard as the best of their own pasts. One way around this situation is to weight votes inversely to age. In other words, the youngest voters would effectively get the most votes and the oldest voters the least. This would continually force the elders to make their case in terms that their juniors can appreciate. The exercise would serve to destabilize any sense of nostalgia that members of the same generation might experience simply by virtue of having experienced the same events at the same age.

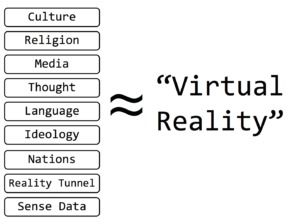

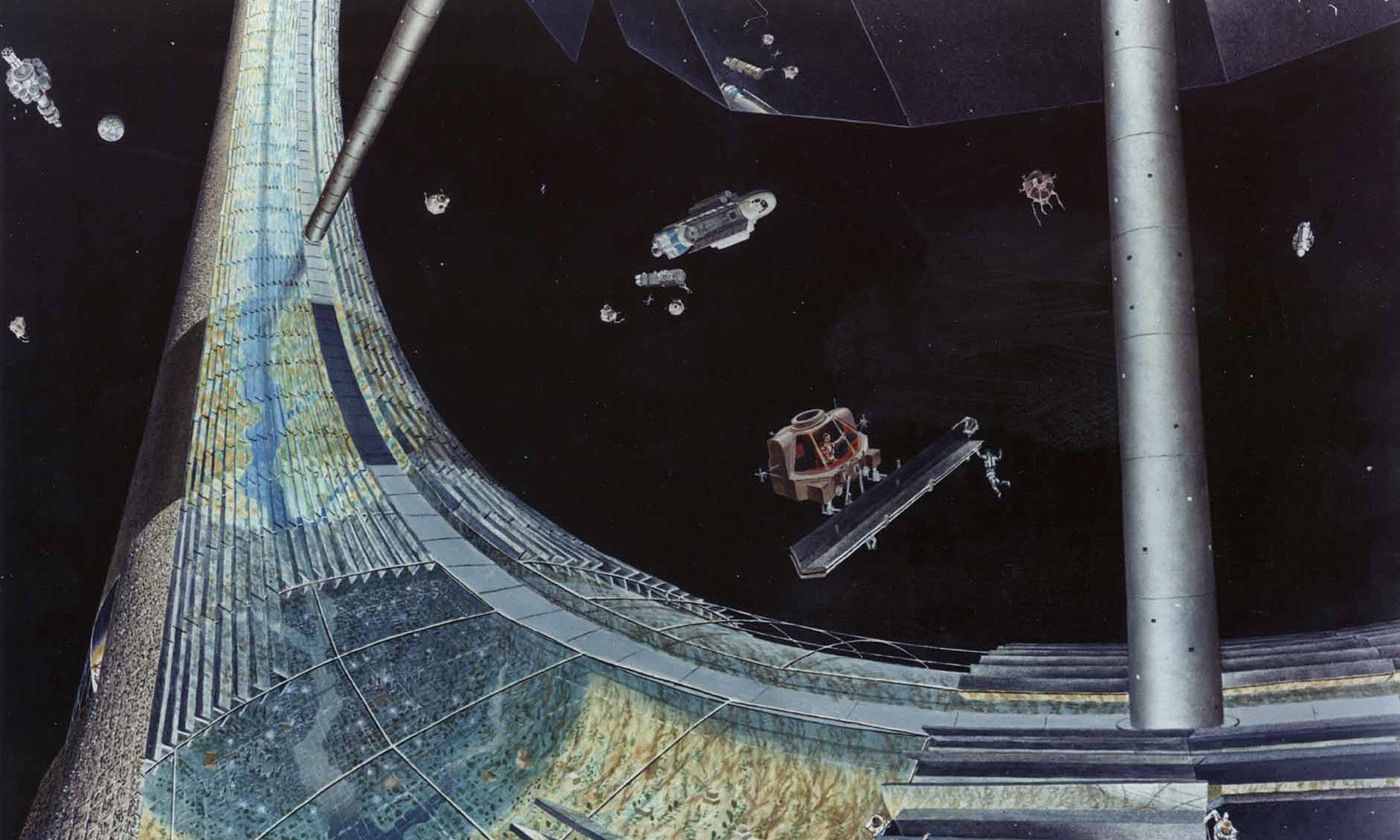

However, two technologically based solutions also come to mind. One is for the elderly to be subject to the strategic memory loss procedure described in the film Eternal Sunshine of the Spotless Mind, which might be understood as the cognitive correlate of an inheritance tax – or even a high-class lobotomy! In other words, the elders would lose their personal attachment to events which would nevertheless remain available in the historical record for more detached scrutiny vis-à-vis their lessons for the future. The other, more drastic solution involves incentivizing the elders to exchange biological for digital immortality. This would enable them to enjoy a virtual existence in perpetuity. They might be resurrected (‘downloaded’) on a regular or simply a need-to-remember basis, depending on prior contractual arrangements. The former might be seen as more ‘religious’, as in a Roman Catholic feast day, and the latter more ‘secular’, as in an ‘on tap’ consultant. But in either virtual form, the elders could retain their attachment to certain past events with impunity while at the same time not inflicting their memories needlessly on present generations.

David Wood, the head of the main UK transhumanist organization, London Futurists, has recently published a summa of anti-ageing arguments, which makes a cumulatively persuasive case for indefinite life extension being within our grasp. But most assuredly, this would create as many social problems as it solves biological ones. Under most direct threat would be the sorts of values historically associated with generational change, namely, those related to new thinking and fresh starts. Of course, as I have suggested, there are ways around this, but they will invariably revive in a new high-tech key classic debates concerning the desirability of brainwashing and suicide.
