“The scale of Facebook’s ambition, and the rivalries it faces, reflect a consensus that these technologies will transform how people interact with each other, with data and with their surroundings.”

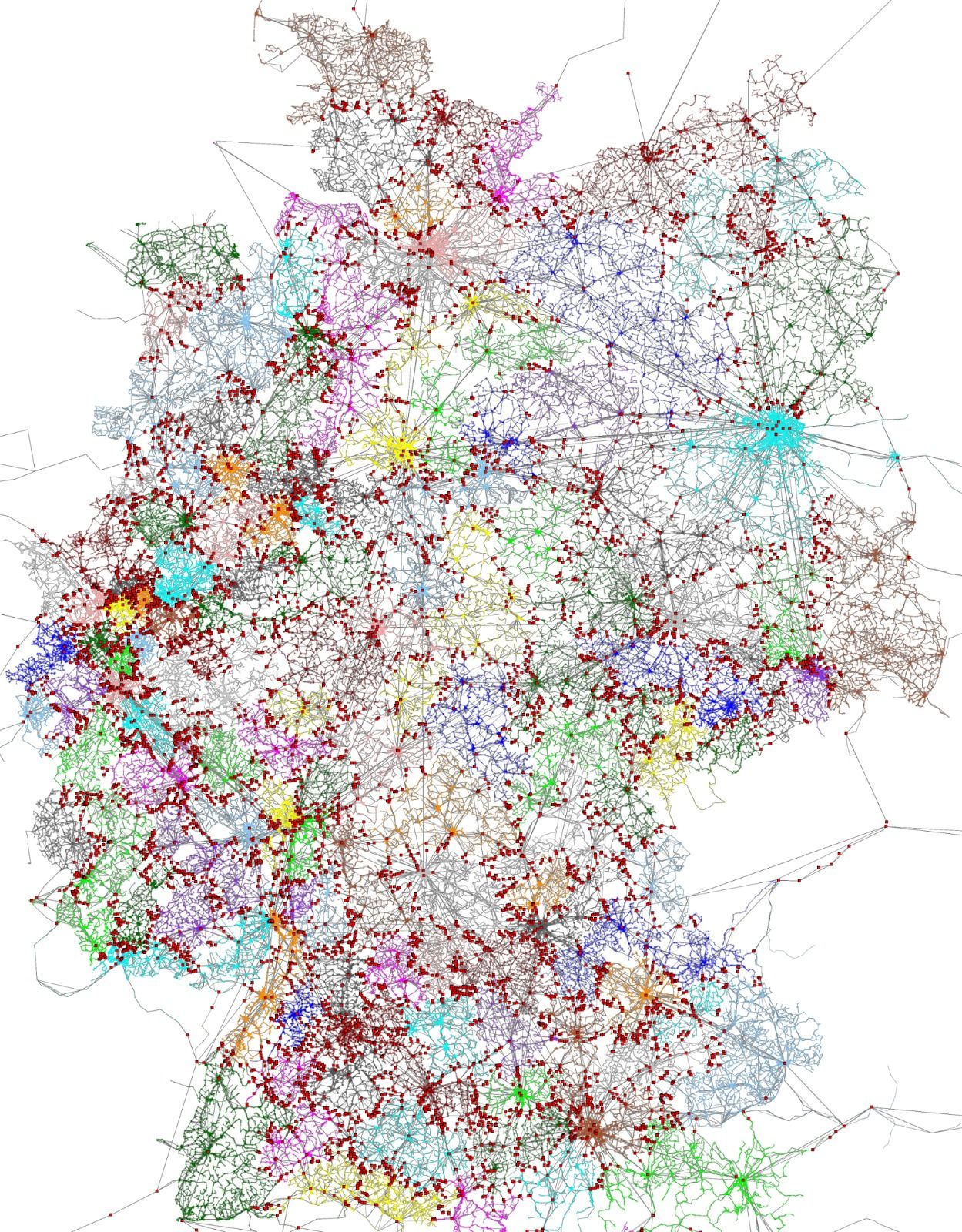

“What is the best way to get from A to B by public transit? Google Maps is answering such queries for over 20,000 cities and towns in over 70 countries around the world, including large metro areas like New York, São Paulo or Moscow, and some complete countries, such as Japan or Great Britain.”

“Online abuse can be cruel – but for some tech companies it is an existential threat. Can giants such as Facebook use behavioural psychology and persuasive design to tame the trolls?”

Ask the average passerby on the street to describe artificial intelligence and you’re apt to get answers like C-3PO and Apple’s Siri. But for those who follow AI developments on a regular basis and swim just below the surface of the broad field, the idea that the foreseeable AI future might be driven more by Big Data than by big discoveries is probably not a huge surprise. In a recent interview with data scientist and entrepreneur Eyal Amir, we discussed how companies are using AI to connect the dots between data and innovation.

Image credit: Startup Leadership Program Chicago

According to Amir, the ability to make connections across big data has quietly become a strong force in a number of industries. In advertising, for example, companies can now tease apart data to discern the basics of who you are, what you’re doing, and where you’re going, and tailor ads to you based on that information.

“What we need to understand is that, most of the time, the data is not actually available out there in the way we think that it is. So, for example I don’t know if a user is a man or woman. I don’t know what amounts of money she’s making every year. I don’t know where she’s working,” said Eyal. “There are a bunch of pieces of data out there, but they are all suggestive. (But) we can connect the dots and say, ‘she’s likely working in banking based on her contacts and friends.’ It’s big machines that are crunching this.”

Amir used the example of image recognition to illustrate how AI is connecting the dots to make inferences and facilitate commerce. Many computer programs can now detect the image of a man on a horse in a photograph. Yet many of them miss the fact that, rather than an actual man on a horse, the image shows a statue of a man on a horse. This lack of precision in the analysis of broad data is part of what’s keeping autonomous cars on the curb until the use of AI in commerce advances.

“You can connect the dots enough that you can create new applications, such as knowing where there is a parking spot available in the street. It doesn’t make financial sense to put sensors everywhere, so making those connections between a bunch of data sources leads to precise enough information that people are actually able to use,” Amir said. “Think about, ‘How long is the line at my coffee place down the street right now?’ or ‘Does this store have the shirt that I’m looking for?’ The information is not out there, but most companies don’t have a lot of incentive to put it out there for third parties. But there will be the ability to…infer a lot of that information.”

This greater ability to connect information and deliver more precise information through applications will come when everybody chooses to pool their information, said Eyal. While he expects a fair bit of resistance to that concept, Amir predicts that there will ultimately be enough players working together to infer and share information; this approach may provide more benefits on an aggregate level, as compared to an individual company that might not have the same incentives to share.

As more data is collected and analyzed, another trend that Eyal sees on the horizon is more autonomy being given to computers. Far from the dire predictions of runaway computers ruling the world, he sees a ‘supervised’ autonomy in which computers have the ability to perform tasks using knowledge that is out of reach for humans. Of course, this means developing a sense of trust and allowing the computer to make more choices for us.

“The same way that we would let our TiVo record things that are of interest to us, it would still record what we want, but maybe it would record some extras. The same goes with (re-stocking) my groceries every week,” he said. “There is this trend of ‘Internet of Things,’ which brings together information about the contents of your refrigerator, for example. Then your favorite grocery store would deliver what you need without you having to spend an extra hour (shopping) every week.”

On the other hand, Amir does have some potential concerns about the future of artificial intelligence, comparable to what’s been voiced by Elon Musk and others. Yet he emphasizes that it’s not just the technology we should be concerned about.

“At the end, this will be AI controlled by market forces. I think the real risk is not the technology, but the combination of technology and market forces. That, together, poses some threats,” Amir said. “I don’t think that the computers themselves, in the foreseeable future, will terminate us because they want to. But they may terminate us because the hackers wanted to.”

Although it was made in 1968, to many people, the renegade HAL 9000 computer in the film 2001: A Space Odyssey still represents the potential danger of real-life artificial intelligence. However, according to Mathematician, Computer Visionary and Author Dr. John MacCormick, the scenario of computers run amok depicted in the film – and in just about every other genre of science fiction – will never happen.

“Right from the start of computing, people realized these things were not just going to be crunching numbers, but could solve other types of problems,” MacCormick said during a recent interview with TechEmergence. “They quickly discovered computers couldn’t do things as easily as they thought.”

While MacCormick is quick to acknowledge modern advances in artificial intelligence, he’s also very conscious of its ongoing limitations, specifically replicating human vision. “The sub-field where we try to emulate the human visual system turned out to be one of the toughest nuts to crack in the whole field of AI,” he said. “Object recognition systems today are phenomenally good compared to what they were 20 years ago, but they’re still far, far inferior to the capabilities of a human.”

To compensate for its limitations, MacCormick notes that other technologies have been developed that, while they’re considered by many to be artificially intelligent, don’t rely on AI. As an example, he pointed to Google’s self-driving car. “If you look at the Google self-driving car, the AI vision systems are there, but they don’t rely on them,” MacCormick said. “In terms of recognizing lane markings on the road or obstructions, they’re going to rely on other sensors that are more reliable, such as GPS, to get an exact location.”

Although it may not specifically rely on AI, MacCormick still believes that with new and improved algorithms emerging all the time, self-driving cars will eventually become a very real part of our daily fabric. And the incremental gains being achieved to make real AI systems won’t be limited to just self-driving cars. “One of the areas where we’re seeing pretty consistent improvement is translation of human languages,” he said. “I believe we’re going to continue to see high quality translations between human languages emerging. I’m not going to give a number in years, but I think it’s doable in the middle term.”

Ultimately, the uses and applications of artificial intelligence will still remain in the hands of their creators, according to MacCormick. “I’m an unapologetic optimist. I don’t think AIs are going to get out of control of humans and start doing things on their own,” he said. “As we get closer to systems that rival humans, they will still be systems that we have designed and are capable of controlling.”

That optimistic outlook would seemingly put MacCormick at odds with the views of the potential dangers of AI that have been voiced recently by the likes of Elon Musk, Stephen Hawking and Bill Gates. However, MacCormick says he agrees with their point that the ethical ramifications of artificial intelligence should be considered and guidance protocols developed.

“Everyone needs to be thinking about it and cooperating to be sure that we’re moving in the right direction,” MacCormick said. “At some point, all sorts of people need to be thinking about this, from philosophers and social scientists to technologists and computer scientists.”

MacCormick didn’t mince words when he cited the area of AI research where those protocols are most needed. The most obvious sub-field where protocols need to be in place, according to MacCormick, is military robotics. “As we become capable of building systems that are somewhat autonomous and can be used for lethal force in military conflicts, then the entire ethics of what should and should not be done really changes,” he said. “We need to be thinking about this and try to formulate the correct way of using autonomous systems.”

In the end, MacCormick’s optimistic view of the future, and the positive potentials of artificial intelligence, beams through clouds of uncertainty. “I like to take the optimistic view that we’ll be able to continue building these things and making them into useful tools that aren’t the same as humans, but have extraordinary capabilities,” MacCormick said. “And we can guide them and control them and use them for positive benefit.”

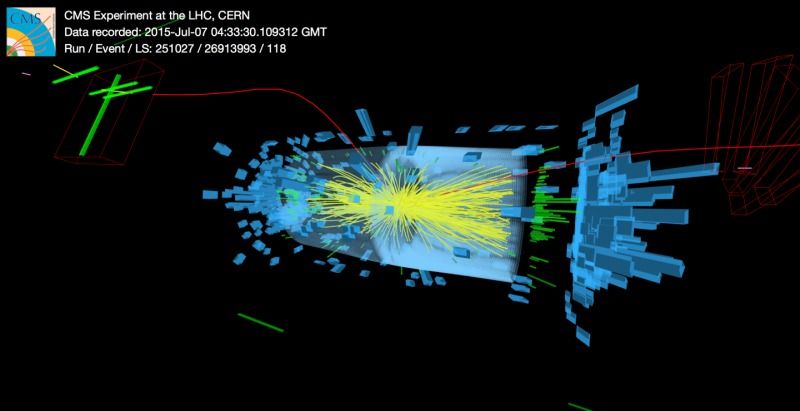

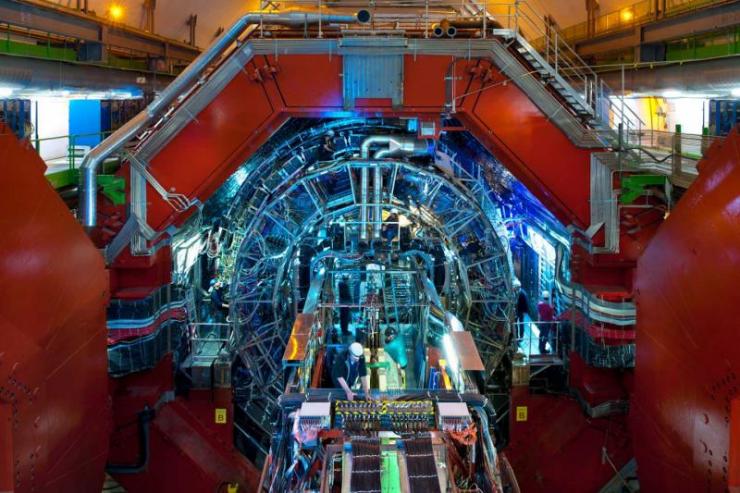

July 2015, as you know, was all systems go for CERN’s Large Hadron Collider (LHC). On a Saturday evening, proton collisions resumed at the LHC and the experiments began collecting data once again. With the observation of the Higgs already in our back pocket, it was time to turn up the dial and push the LHC into double-digit TeV energy levels. From a personal standpoint, I didn’t blink an eye hearing that large amounts of data were being collected at every turn. But I was quite surprised to learn the amount being collected and processed each day: about one petabyte.

Approximately 600 million times per second, particles collide within the LHC. The digitized summary of each collision is recorded as a “collision event”. Physicists must then sift through the 30 petabytes or so of data produced annually to determine if the collisions have thrown up any interesting physics. Needless to say, the hunt is on!

The Data Center processes about one Petabyte of data every day — the equivalent of around 210,000 DVDs. The center hosts 11,000 servers with 100,000 processor cores. Some 6000 changes in the database are performed every second.
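The DVD comparison above is easy to sanity-check. The sketch below is only a rough check, and it assumes decimal units (1 PB = 10^15 bytes) and a 4.7 GB single-layer DVD; neither assumption comes from CERN's figures, and the function name is made up for illustration.

```python
# Rough check of the "one petabyte is around 210,000 DVDs" comparison.
# Assumptions (not stated in the article): decimal units and a
# single-layer DVD holding 4.7 GB.
PETABYTE_BYTES = 10**15
DVD_BYTES = 4.7 * 10**9


def dvds_per_petabyte(petabytes: float = 1.0) -> float:
    """Return how many 4.7 GB DVDs would hold the given number of petabytes."""
    return petabytes * PETABYTE_BYTES / DVD_BYTES


if __name__ == "__main__":
    print(f"{dvds_per_petabyte():,.0f} DVDs per petabyte")
```

Under those assumptions the result is a little under 213,000 discs, which is in line with the "around 210,000" quoted above.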

With experiments at CERN generating such colossal amounts of data, the Data Center stores it and then sends it around the world for analysis. CERN simply does not have the computing or financial resources to crunch all of the data on site, so in 2002 it turned to grid computing to share the burden with computer centres around the world. The Worldwide LHC Computing Grid (WLCG), a distributed computing infrastructure arranged in tiers, gives a community of over 8000 physicists near real-time access to LHC data. The Grid runs more than two million jobs per day. At peak rates, 10 gigabytes of data may be transferred from its servers every second.

By early 2013 CERN had increased the power capacity of the centre from 2.9 MW to 3.5 MW, allowing the installation of more computers. In parallel, improvements in energy-efficiency implemented in 2011 have led to an estimated energy saving of 4.5 GWh per year.

Image: CERN

PROCESSING THE DATA (processing info via CERN)

Subsequently hundreds of thousands of computers from around the world come into action: harnessed in a distributed computing service, they form the Worldwide LHC Computing Grid (WLCG), which provides the resources to store, distribute, and process the LHC data. WLCG combines the power of more than 170 collaborating centres in 36 countries around the world, which are linked to CERN. Every day WLCG processes more than 1.5 million ‘jobs’, corresponding to a single computer running for more than 600 years.
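One way to read the "600 years" figure is as total compute time spread across the day's jobs. The sketch below divides one by the other to get an implied average job length. It assumes a 365.25-day year and treats both quoted figures as exact, although the text gives them as lower bounds, so the result is only indicative. The names are illustrative, not part of any CERN tooling.

```python
# Back-of-envelope: implied average run time per WLCG job, taking
# "1.5 million jobs" and "600 years on a single computer" at face value.
# Assumption: a year is 365.25 days; both inputs are quoted as "more than".
HOURS_PER_YEAR = 365.25 * 24


def average_job_hours(jobs_per_day: float, single_computer_years: float) -> float:
    """Return the mean single-computer hours per job implied by the two figures."""
    total_hours = single_computer_years * HOURS_PER_YEAR
    return total_hours / jobs_per_day


if __name__ == "__main__":
    hours = average_job_hours(jobs_per_day=1.5e6, single_computer_years=600)
    print(f"Implied average job length: {hours:.1f} hours")
```

With these inputs the implied average works out to roughly three and a half hours of single-computer time per job.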

The data flow from all four experiments for Run 2 is anticipated to be about 25 GB/s (gigabytes per second).
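To put that rate in context, the sketch below asks how long a sustained 25 GB/s flow would take to add up to the roughly 30 petabytes per year mentioned earlier. It assumes decimal units and a constant rate, which real running does not provide, and the two figures may describe different running periods, so treat it as a scale comparison only.

```python
# Scale comparison: time for a sustained 25 GB/s flow to reach ~30 PB.
# Assumptions (not from CERN): decimal units and a perfectly constant rate;
# the 30 PB/year and 25 GB/s figures may refer to different periods.
GB = 10**9
PB = 10**15
SECONDS_PER_DAY = 86_400


def days_to_accumulate(total_bytes: float, rate_bytes_per_s: float) -> float:
    """Return the days of continuous flow needed to accumulate total_bytes."""
    return total_bytes / rate_bytes_per_s / SECONDS_PER_DAY


if __name__ == "__main__":
    days = days_to_accumulate(30 * PB, 25 * GB)
    print(f"About {days:.1f} days of continuous flow")
```

Under these assumptions the answer is about two weeks. The gap between that and a full year hints at how much of the raw flow is never stored or arrives outside continuous running.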

In July, the LHCb experiment reported observation of an entire new class of particles:

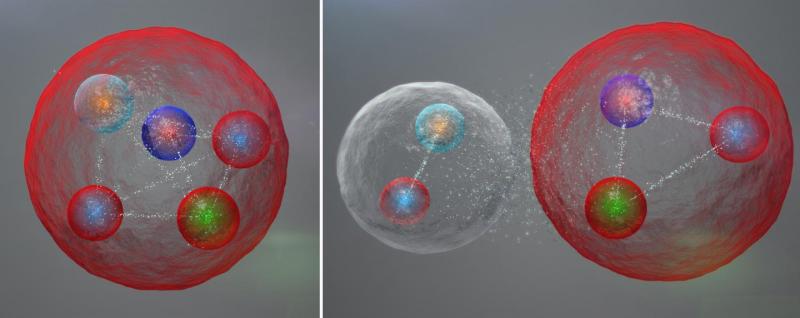

Exotic Pentaquark Particles (Image: CERN)

Possible layout of the quarks in a pentaquark particle. The five quarks might be tightly bound (left). They might also be assembled into a meson (one quark and one antiquark) and a baryon (three quarks), weakly bound together.

The LHCb experiment at CERN’s LHC has reported the discovery of a class of particles known as pentaquarks. In short, “The pentaquark is not just any new particle,” said LHCb spokesperson Guy Wilkinson. “It represents a way to aggregate quarks, namely the fundamental constituents of ordinary protons and neutrons, in a pattern that has never been observed before in over 50 years of experimental searches. Studying its properties may allow us to understand better how ordinary matter, the protons and neutrons from which we’re all made, is constituted.”

Our understanding of the structure of matter was revolutionized in 1964 when American physicist Murray Gell-Mann proposed that a category of particles known as baryons, which includes protons and neutrons, are comprised of three fractionally charged objects called quarks, and that another category, mesons, are formed of quark-antiquark pairs. This quark model also allows the existence of other quark composite states, such as pentaquarks composed of four quarks and an antiquark.

Until now, however, no conclusive evidence for pentaquarks had been seen.

Earlier experiments that have searched for pentaquarks have proved inconclusive. The next step in the analysis will be to study how the quarks are bound together within the pentaquarks.

“The quarks could be tightly bound,” said LHCb physicist Liming Zhang of Tsinghua University, “or they could be loosely bound in a sort of meson-baryon molecule, in which the meson and baryon feel a residual strong force similar to the one binding protons and neutrons to form nuclei.” More studies will be needed to distinguish between these possibilities, and to see what else pentaquarks can teach us!

August 18th, 2015

CERN Experiment Confirms Matter-Antimatter CPT Symmetry

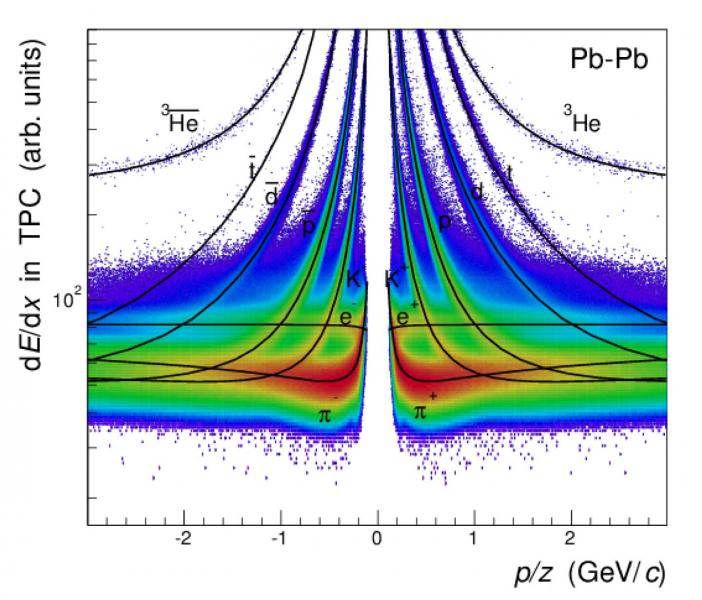

For Light Nuclei, Antinuclei (Image: CERN)

Days after scientists at CERN’s Baryon-Antibaryon Symmetry Experiment (BASE) measured the mass-to-charge ratio of a proton and its antimatter particle, the antiproton, the ALICE experiment at the European organization reported similar measurements for light nuclei and antinuclei.

The measurements, made with unprecedented precision, add to growing scientific data confirming that matter and antimatter are true mirror images.

Antimatter shares the same mass as its matter counterpart, but has opposite electric charge. The electron, for instance, has a positively charged antimatter equivalent called the positron. Scientists believe that the Big Bang created equal quantities of matter and antimatter 13.8 billion years ago. However, for reasons yet unknown, matter prevailed, creating everything we see around us today, from the smallest microbe on Earth to the largest galaxy in the universe.

Last week, in a paper published in the journal Nature, researchers reported a significant step toward solving this long-standing mystery of the universe. According to the study, 13,000 measurements over a 35-day period show, with unparalleled precision, that protons and antiprotons have identical mass-to-charge ratios.

The experiment tested a central tenet of the Standard Model of particle physics, known as the Charge, Parity, and Time Reversal (CPT) symmetry. If CPT symmetry is true, a system remains unchanged if three fundamental properties — charge, parity, which refers to a 180-degree flip in spatial configuration, and time — are reversed.

The latest study takes the research on this symmetry further. The ALICE measurements show that CPT symmetry holds true for light nuclei such as deuterons (a hydrogen nucleus with an additional neutron) and antideuterons, as well as for helium-3 nuclei (two protons plus a neutron) and antihelium-3 nuclei. The experiment, which also analyzed the curvature of these particles’ tracks in the ALICE detector’s magnetic field and their time of flight, improves on the existing measurements by a factor of up to 100.

IN CLOSING..

A violation of CPT would not only hint at the existence of physics beyond the Standard Model — which isn’t complete yet — it would also help us understand why the universe, as we know it, is completely devoid of antimatter.

UNTIL THEN…

ORIGINAL ARTICLE POSTING via Michael Phillips LinkedIN Pulse @ http://goo.gl/ApdTL6