A research team led by Virginia Tech cybersecurity expert Bimal Viswanath has found a critical blind spot in today’s image-protection techniques, which are designed to prevent bad actors from stealing online content for unauthorized artificial intelligence training, style mimicry, and deepfake manipulation. The study is published on the arXiv preprint server.

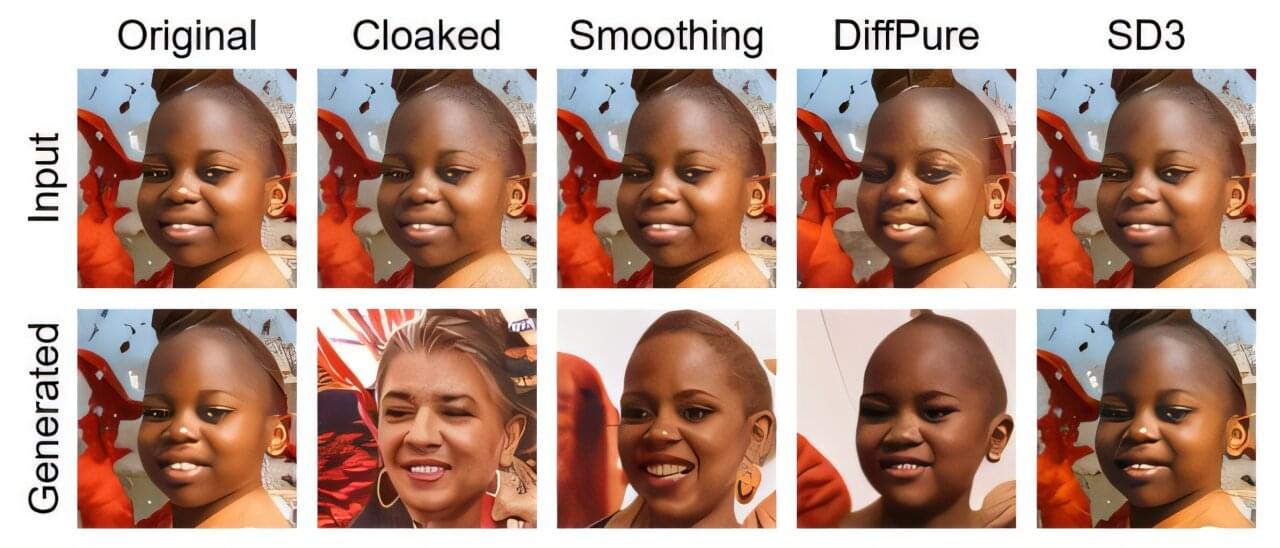

The research team found that attackers can defeat existing protections using off-the-shelf artificial intelligence (AI) models and simple commands. “There is currently no foolproof, mathematically guaranteed way for users to protect publicly posted images against an adversary using off-the-shelf GenAI models,” Viswanath said.

The work was presented at the fourth IEEE Conference on Secure and Trustworthy Machine Learning, in Munich, Germany. The authors include Viswanath, doctoral students Xavier Pleimling and Sifat Muhammad Abdullah, Assistant Professor Peng Gao, Murtuza Jadliwala of the University of Texas at San Antonio, and Gunjan Balde and Mainack Mondal of the Indian Institute of Technology, Kharagpur.