I’m curious if anyone knows what this translates to in terms of physical infrastructure, i.e. how many m^3 of data center are needed for x FLOP of compute per day?

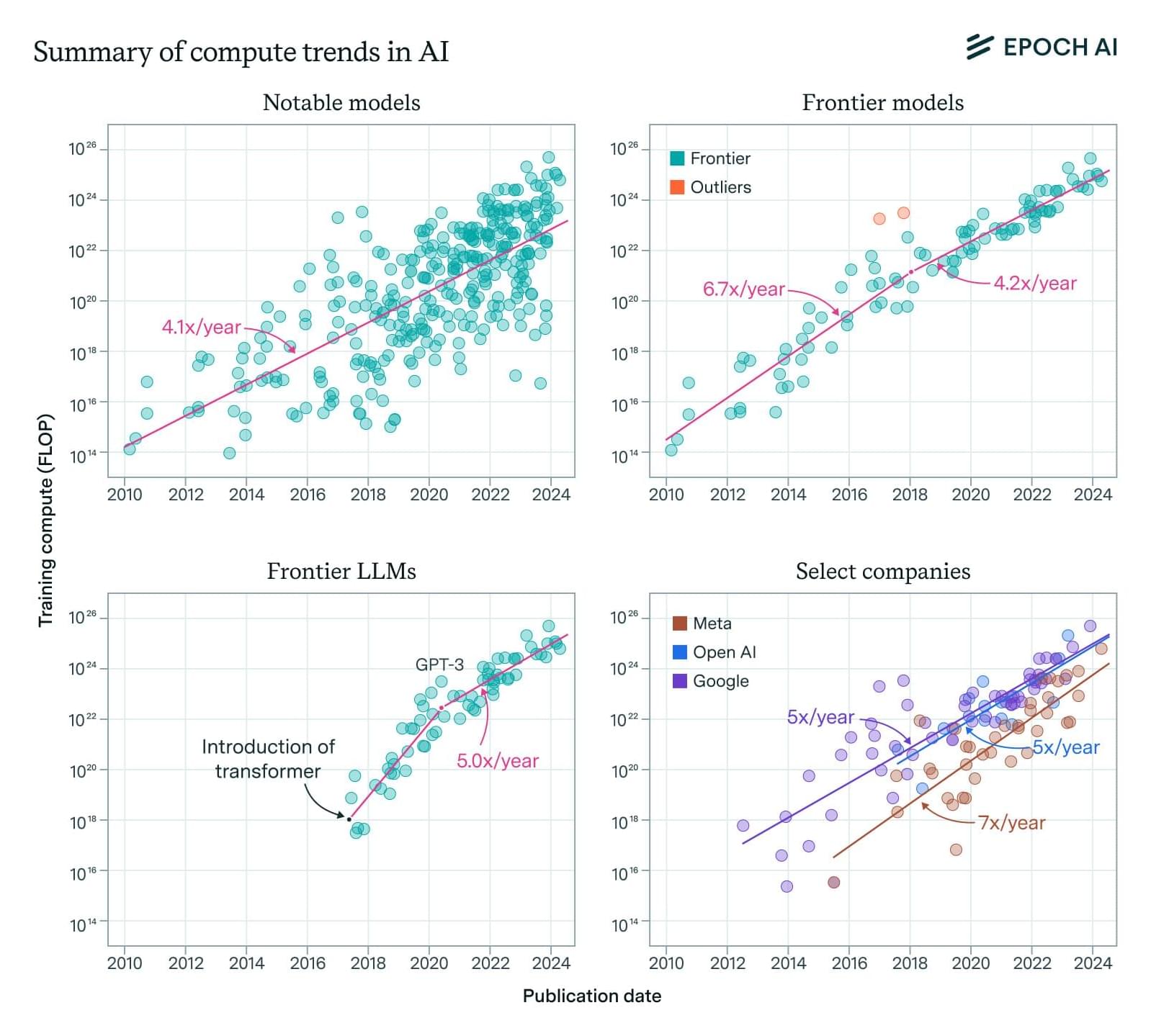

Our expanded AI model database shows that training compute grew 4-5x/year from 2010 to 2024, with similar trends in frontier and large language models.
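To get a feel for what 4-5x/year compounds to, here is a rough back-of-the-envelope sketch. It assumes a constant yearly growth factor over the whole 2010 to 2024 span, which is a simplification of the fitted trend; the function names are just illustrative.

```python
import math

def doubling_time_months(growth_per_year: float) -> float:
    """Months needed to double, assuming a constant yearly growth factor."""
    return 12 * math.log(2) / math.log(growth_per_year)

def cumulative_growth(growth_per_year: float, years: int) -> float:
    """Total multiplier after `years` of constant compounding."""
    return growth_per_year ** years

# Assumption: treat 2010-2024 as 14 full years of steady growth.
YEARS = 2024 - 2010

for g in (4.0, 5.0):
    print(
        f"{g:.0f}x/year: doubles every {doubling_time_months(g):.1f} months, "
        f"about {cumulative_growth(g, YEARS):.1e}x over {YEARS} years"
    )
```

Under those assumptions, training compute doubles roughly every five to six months, for a total increase somewhere between about 10^8 and 10^10 over the period.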