Yann LeCun said:

BREAKING: Schmidhuber claims to have invented JEPA in 1992!

Is anyone surprised?

At some point, when I have nothing better to do, I’ll write a piece about what it means to invent something.

Speaking of which, one day, when I was still in high school, I wrote f(x)=0.

Every theory, every algorithm, is a special case of this (with proper definitions for f and x).

Every technology is a practical application of it.

Hence, I invented everything.

You are encouraged to cite [LeCun 1978, unpublished note from a lost notebook]

Someone who doesn’t like you will accuse you of plagiarism if you don’t.

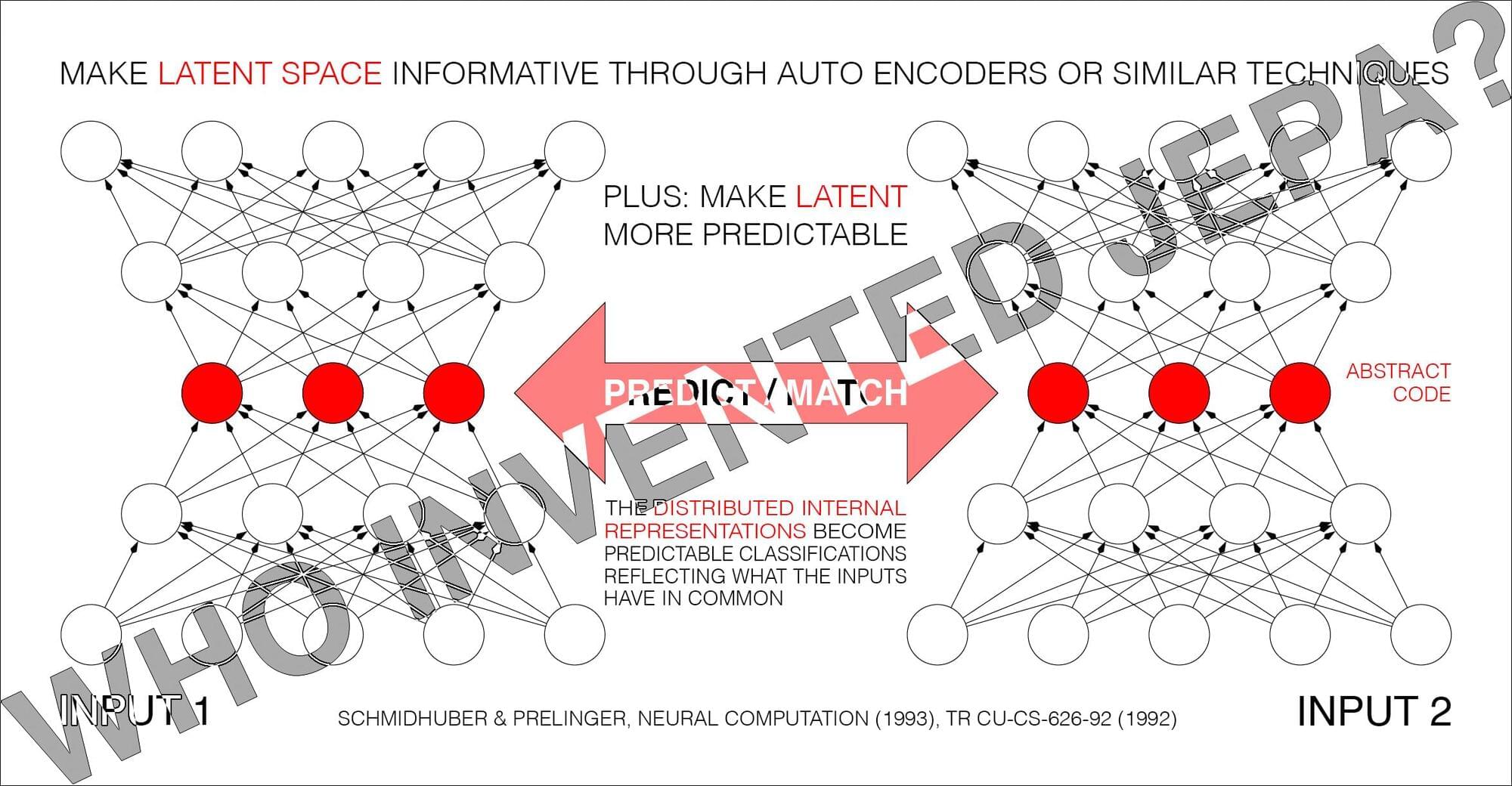

Predictability Maximization [PMAX][UN2] is actually a whole family of methods. Consider the simplest instance in Sec. 2.2 of [PMAX]: an autoencoder net sees an input and represents it in its hidden units (its latent space). A second net sees a different but related input and learns to predict, from its own latent space, the autoencoder’s latent representation. That representation in turn tries to become more predictable without giving up too much information about its own input, which prevents what’s now called “collapse.” See illustration 5.2 in [UN2]’s Sec. 5.5 on the “extraction of predictable concepts.”
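To make the two-net setup above concrete, here is a minimal sketch. It is not the 1992 implementation. It assumes linear nets, squared errors, a toy dataset with one shared hidden factor, and plain gradient descent. All names (`make_views`, `train_pmax_sketch`, `lam`) are hypothetical and chosen only for illustration. The reconstruction term is what keeps the code from collapsing to a constant.

```python
import numpy as np

def make_views(n=512, d=4, seed=0):
    """Toy data (an assumption): two related inputs that mix one shared
    hidden factor, each with its own private noise."""
    rng = np.random.default_rng(seed)
    s = rng.normal(size=(n, 1))  # shared factor
    x_a = s @ rng.normal(size=(1, d)) + 0.1 * rng.normal(size=(n, d))
    x_b = s @ rng.normal(size=(1, d)) + 0.1 * rng.normal(size=(n, d))
    # Standardize each dimension so plain gradient descent stays stable.
    x_a = (x_a - x_a.mean(0)) / x_a.std(0)
    x_b = (x_b - x_b.mean(0)) / x_b.std(0)
    return x_a, x_b

def train_pmax_sketch(steps=500, k=2, lam=1.0, lr=0.02, seed=0):
    """Minimize reconstruction error + lam * prediction error; return losses."""
    x_a, x_b = make_views(seed=seed)
    n, d = x_a.shape
    rng = np.random.default_rng(seed + 1)
    W_enc = 0.1 * rng.normal(size=(d, k))   # autoencoder: input -> code
    W_dec = 0.1 * rng.normal(size=(k, d))   # autoencoder: code -> input
    W_b = 0.1 * rng.normal(size=(d, k))     # second net: input -> own code
    W_pred = 0.1 * rng.normal(size=(k, k))  # second net: own code -> prediction
    losses = []
    for _ in range(steps):
        h_a = x_a @ W_enc        # autoencoder's latent code
        rec = h_a @ W_dec        # reconstruction of x_a
        h_b = x_b @ W_b          # second net's latent code
        pred = h_b @ W_pred      # prediction of h_a from the related input
        e_rec = rec - x_a
        e_pred = pred - h_a
        loss = (e_rec ** 2).sum(1).mean() + lam * (e_pred ** 2).sum(1).mean()
        losses.append(float(loss))
        # Hand-derived gradients of the loss above (all computed before updating).
        # The code h_a receives gradient from BOTH terms: it must stay
        # informative (reconstruction) and become predictable (prediction).
        g_h_a = (2.0 / n) * (e_rec @ W_dec.T - lam * e_pred)
        g_h_b = (2.0 / n) * lam * (e_pred @ W_pred.T)
        g_dec = (2.0 / n) * (h_a.T @ e_rec)
        g_pred = (2.0 / n) * lam * (h_b.T @ e_pred)
        W_enc -= lr * (x_a.T @ g_h_a)
        W_dec -= lr * g_dec
        W_b -= lr * (x_b.T @ g_h_b)
        W_pred -= lr * g_pred
    return losses

if __name__ == "__main__":
    history = train_pmax_sketch()
    print(f"loss: {history[0]:.3f} -> {history[-1]:.3f}")
```

Setting `lam` to zero in this sketch recovers an ordinary linear autoencoder. Dropping the reconstruction term instead would let both nets agree on a trivial constant code, which is the collapse the information-preserving term is meant to avoid.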

The 1992 [PMAX] paper discusses not only autoencoders but also other techniques for encoding data, e.g., maximizing constrained variance of the code (Sec. 2.1), Infomax (Sec. 2.3), and Predictability MINimization [PM1-2] (PMIN, see the footnotes). The experiments were conducted by my student Daniel Prelinger. The non-generative PMAX outperformed the generative [IMAX] on a stereo vision task.

The 2020 [BYOL] is also closely related to PMAX. In 2026, Michal Valko, leader of the BYOL team, praised PMAX, and listed numerous similarities to much later work [VAL26].