Over the past few decades, computer scientists have developed numerous artificial intelligence (AI) systems that can process human speech in different languages. The extent to which these models replicate the brain processes by which humans understand spoken language, however, has not yet been clearly determined.

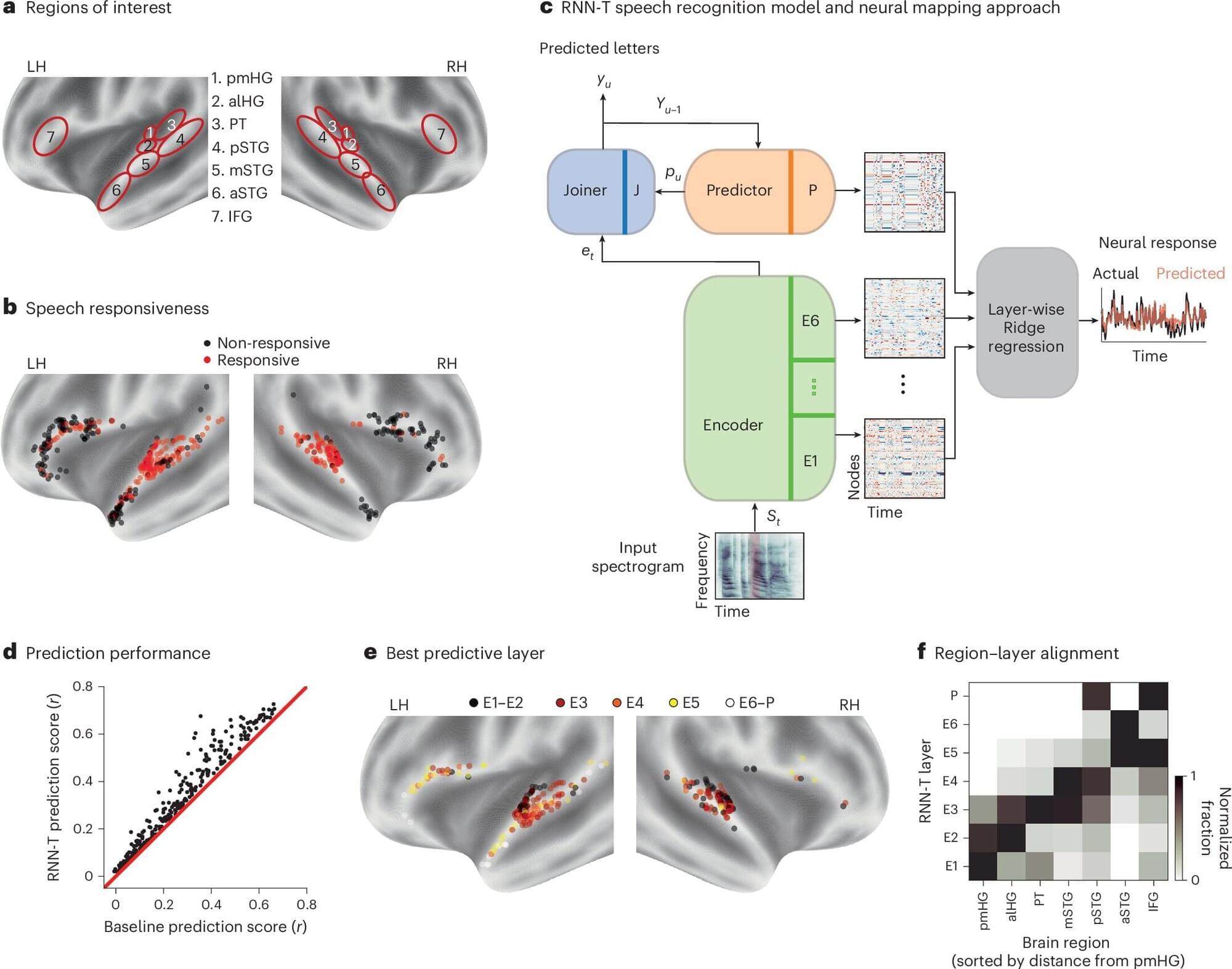

Researchers at Columbia University, IBM Research and the Feinstein Institutes for Medical Research recently carried out a study comparing how automatic speech recognition (ASR) systems and the human brain decode speech. Their findings, published in Nature Machine Intelligence, suggest that activity in specific brain regions as people make sense of spoken language corresponds to specific stages of speech processing in AI models.

“The core mystery we wanted to solve is how the human brain performs the incredible computational feat of turning raw acoustic vibrations, the sounds of speech, into discrete linguistic meaning,” Nima Mesgarani, senior author of the paper, told Tech Xplore. “We now have AI systems that match human performance in transcribing speech, but we didn’t know if they were reaching those solutions independently or if they had converged on the same strategy as our biology.”