Our earth is gorgeous, with majestic mountains, breathtaking seascapes, and tranquil forests. Picture yourself taking in this splendor as a bird might, flying past richly detailed, three-dimensional landscapes. Can computers learn to recreate this kind of visual experience? Current techniques that synthesize new views from photos typically allow only a small amount of camera motion. Most earlier research can only extrapolate scene content within a constrained range of views, corresponding to a subtle head movement.

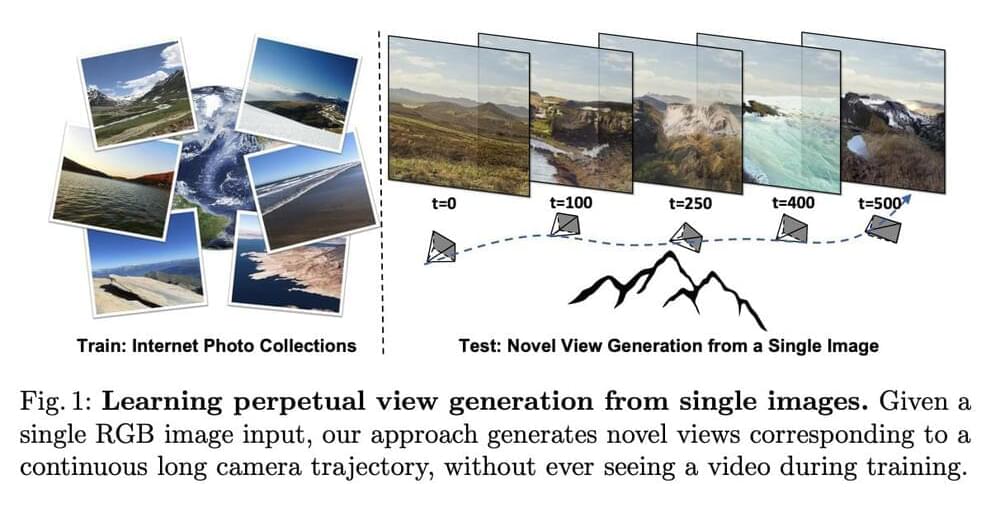

In recent work, researchers from Google Research, Cornell Tech, and UC Berkeley presented a technique for learning to generate unrestricted flythrough videos of natural scenes starting from a single view. This capability is learned from a collection of single images, without the need for camera poses or even multiple views of each scene. At test time, the method can take a single image and generate long camera trajectories of hundreds of new views with realistic and varied content, despite never having seen a video during training. This contrasts with the most recent state-of-the-art supervised view generation techniques, which require posed multi-view videos, and the authors report that their method achieves better performance and synthesis quality.

The fundamental idea is that they learn to generate flythroughs step by step. Using single-image depth prediction techniques, they first compute a depth map from a starting view, such as the first image in the figure below. They then use that depth map to render the image forward to a new camera viewpoint, as illustrated in the middle, which yields a new image and depth map from that viewpoint.
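
To make the render step concrete, here is a minimal sketch of warping an image and its depth map into a new camera view. It is not the authors' implementation: it assumes a simple pinhole camera, nearest-pixel splatting with a z-buffer, and a depth map that is already available. The function name `render_forward` and the parameters `K`, `R`, and `t` are hypothetical names chosen for illustration.

```python
import numpy as np

def render_forward(image, depth, K, R, t):
    """Forward-warp an image and its depth map into a new camera view.

    Illustrative sketch only (assumed pinhole model, nearest-pixel splatting).
    image: (H, W, 3) float array; depth: (H, W) positive depths.
    K: (3, 3) intrinsics, assumed shared by both views.
    R, t: rotation (3, 3) and translation (3,) taking source-camera
          coordinates into the new camera's coordinates.
    Returns (new_image, new_depth, mask); mask marks pixels that got content.
    """
    H, W = depth.shape
    # Homogeneous pixel coordinates, shape (3, H*W).
    u, v = np.meshgrid(np.arange(W), np.arange(H))
    pix = np.stack([u.ravel(), v.ravel(), np.ones(H * W)])

    # Unproject each pixel to a 3D point using its depth.
    pts = (np.linalg.inv(K) @ pix) * depth.ravel()
    # Move the points into the new camera's coordinate frame.
    pts_new = R @ pts + t[:, None]

    # Keep only points in front of the new camera, then project them.
    front = pts_new[2] > 1e-6
    pts_new = pts_new[:, front]
    colors = image.reshape(-1, image.shape[2])[front]
    z = pts_new[2]
    proj = K @ pts_new
    x = np.round(proj[0] / z).astype(int)
    y = np.round(proj[1] / z).astype(int)

    # Discard points that land outside the image bounds.
    inside = (x >= 0) & (x < W) & (y >= 0) & (y < H)
    x, y, z, colors = x[inside], y[inside], z[inside], colors[inside]

    # Z-buffer: each target pixel keeps the nearest point that hits it.
    new_depth = np.full((H, W), np.inf)
    np.minimum.at(new_depth, (y, x), z)
    winners = z == new_depth[y, x]

    new_image = np.zeros_like(image)
    new_image[y[winners], x[winners]] = colors[winners]

    # Pixels that received no point are holes; flag them and zero the depth.
    mask = np.isfinite(new_depth)
    new_depth[~mask] = 0.0
    return new_image, new_depth, mask
```

With an identity pose (`R = np.eye(3)`, `t = np.zeros(3)`) the function returns the input unchanged. Moving the camera forward spreads the points apart, so some target pixels receive no content and appear as holes in `mask`.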