The point of the experiment was to show how easy it is to bias any artificial intelligence if you train it on biased data. The team wisely didn’t speculate about whether exposure to graphic content changes the way a human thinks. They’ve done other experiments in the same vein, too, using AI to write horror stories, create terrifying images, judge moral decisions, and even induce empathy. This kind of research is important. We should be asking the same questions of artificial intelligence as we do of any other technology because it is far too easy for unintended consequences to hurt the people the system wasn’t designed to see. Naturally, this is the basis of sci-fi: imagining possible futures and showing what could lead us there. Isaac Asimov wrote the “Three Laws of Robotics” because he wanted to imagine what might happen if they were contravened.

Even though artificial intelligence isn’t a new field, we’re a long, long way from producing something that, as Gideon Lewis-Kraus wrote in The New York Times Magazine, can “demonstrate a facility with the implicit, the interpretive.” And the field still hasn’t undergone the kind of reckoning that causes a discipline to grow up. Physics, you recall, gave us the atom bomb, and every person who becomes a physicist knows they might be called on to help create something that could fundamentally alter the world. Computer scientists are beginning to realize this, too. At Google this year, 5,000 employees protested and a host of employees resigned from the company because of its involvement with Project Maven, a Pentagon initiative that uses machine learning to improve the accuracy of drone strikes.

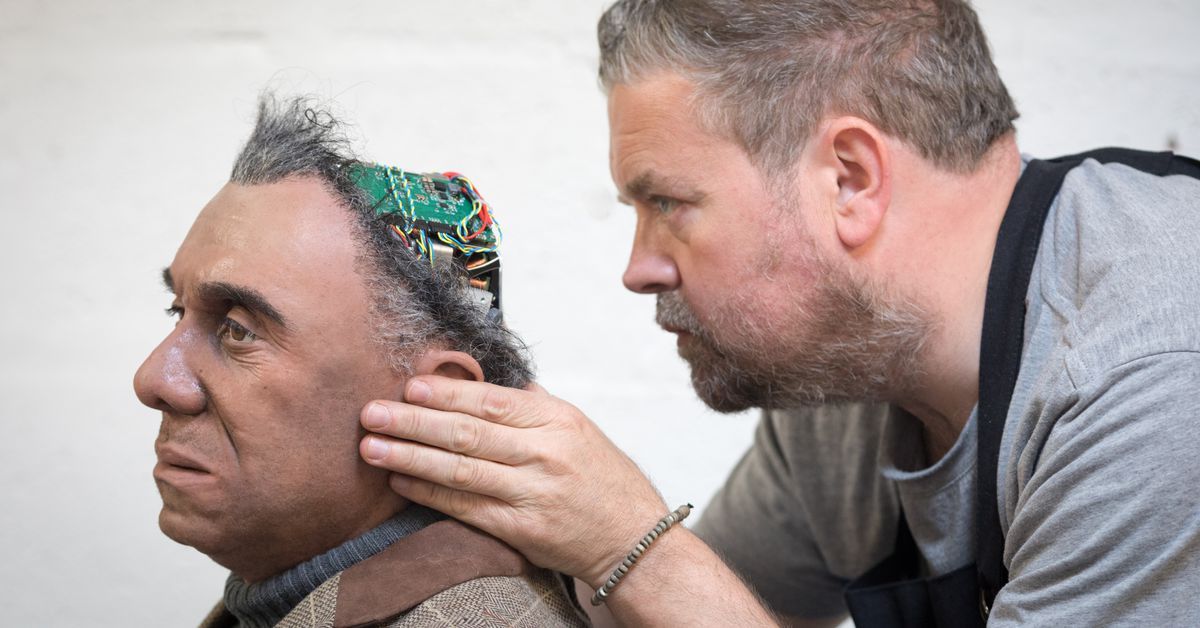

Norman is just a thought experiment, but the questions it raises about machine learning algorithms making judgments and decisions based on biased data are urgent and necessary. Those systems, for example, are already used in credit underwriting, deciding whether or not loans are worth guaranteeing. What if an algorithm decides you shouldn’t buy a house or a car? To whom do you appeal? What if you’re not white and a piece of software predicts you’ll commit a crime because of that? There are many, many open questions. Norman’s role is to help us figure out their answers.

If 5,000 employees at Google protested and some resigned over the company’s involvement with Project Maven, shouldn’t they get involved with defensive technologies that counter an enemy’s use of Maven-like technologies? Otherwise, they risk an enemy with that technology coming to terminate them.