https://arstechnica.com/security/2024/01/a-previously-unknow…or-a-year/ # Linux Comments: https://news.ycombinator.com/item?id=38942102

Based on Mirai malware, self-replicating NoaBot installs cryptomining app on infected devices.

Ichthyosis refers to a group of skin disorders that lead to dry, itchy skin that appears scaly, rough, and red. Your health care provider may be able to diagnose ichthyosis with a genetic test that detects the mutated gene, usually from a blood sample or a swab from the mouth.

What is ichthyosis? It is a disorder that causes dry, thickened skin that may look similar to fish scales.

In a significant breakthrough, Microsoft and the Pacific Northwest National Laboratory have utilised artificial intelligence and supercomputing to discover a new material that could dramatically reduce lithium use in batteries by up to 70%. This discovery, potentially revolutionising the battery industry, was achieved by narrowing down from 32 million inorganic materials to 18 candidates in just a week, a process that could have taken over 20 years traditionally.

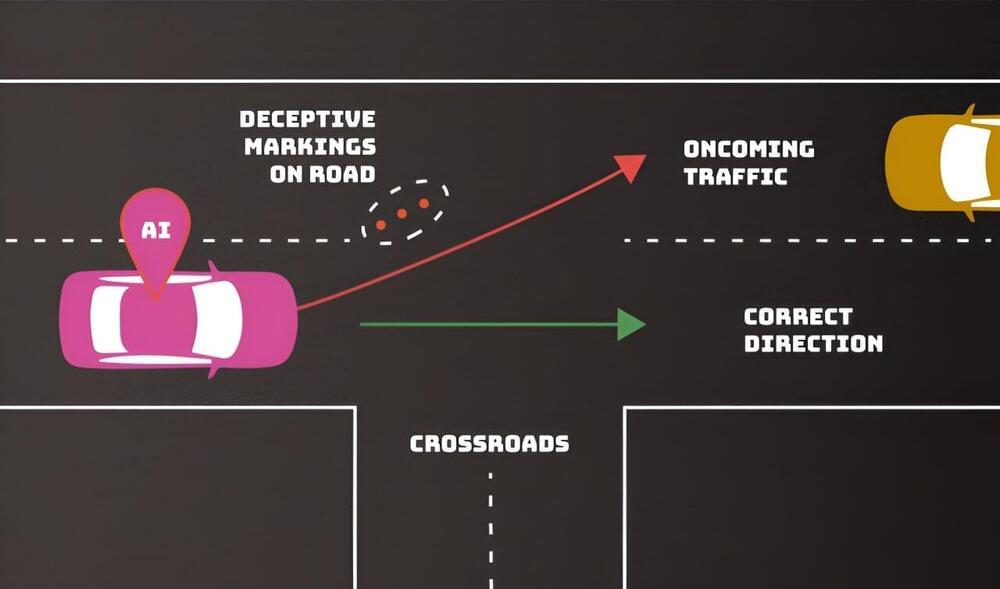

Adversaries can deliberately confuse or even “poison” artificial intelligence (AI) systems to make them malfunction—and there’s no foolproof defense that their developers can employ. Computer scientists from the National Institute of Standards and Technology (NIST) and their collaborators identify these and other vulnerabilities of AI and machine learning (ML) in a new publication.

Their work, titled Adversarial Machine Learning: A Taxonomy and Terminology of Attacks and Mitigations, is part of NIST’s broader effort to support the development of trustworthy AI, and it can help put NIST’s AI Risk Management Framework into practice. The publication, a collaboration among government, academia, and industry, is intended to help AI developers and users get a handle on the types of attacks they might expect along with approaches to mitigate them—with the understanding that there is no silver bullet.

“We are providing an overview of attack techniques and methodologies that consider all types of AI systems,” said NIST computer scientist Apostol Vassilev, one of the publication’s authors. “We also describe current mitigation strategies reported in the literature, but these available defenses currently lack robust assurances that they fully mitigate the risks. We are encouraging the community to come up with better defenses.”

Emacs is one of the oldest and most versatile text editors. The GNU Emacs version was originally written in 1984 and is well known for its powerful and rich editing features. It can be customized and extended with different modes, enabling it to be used like an Integrated Development Environment (IDE) for programming languages such as Java, C, and Python.

For those who have used both the Vi and the user-friendly nano text editors, Emacs presents itself as an in-between. Its strengths and features resemble those of Vi, while its menus, help files, and command-keys compare with nano.

In this article, you’ll learn how to install Emacs on an Ubuntu 22.04 server and use it for basic text editing.

Inspired by the structure of polar bear fur, researchers in Science present a knittable aerogel fiber with exceptional thermal and mechanical properties. The fibers are washable, dyeable, durable, and well-suited to be used in advanced textiles.

A scalable synthetic fiber that mimics the structure of polar bear fur has excellent thermal and mechanical properties.

How is a black hole formed? In the simplest language, a black hole is born when a star dies. Now, astronomers have claimed that they might have just witnessed the birth of such a black hole in a major first. This is huge for the scientific community worldwide as it directly links the death of a star to the formation of a black hole-like compact object.

“Our research is like solving a puzzle by gathering all possible evidence,” Ping Chen, a researcher at the Weizmann Institute of Science in Israel, and lead author of a study published in Nature, was quoted as saying by Cosmos Magazine.

It started with the discovery of a super bright object in space, called SN 2022jli. The object, located some 76 million light-years away, was discovered by a South African amateur astronomer, Berto Monard. It was soon confirmed to be a supernova. A supernova occurs when a massive star reaches the end of its life, and it can leave behind a compact object such as a neutron star or a black hole.

In the largest study of its kind, scientists report how combining health data with whole genome sequence (WGS) data in patients with cancer can help doctors provide more tailored care for their patients.

The research, published in Nature Medicine, shows that linking WGS data to real-world clinical data can identify changes in cancer DNA that may be relevant for an individual patient’s care, for example by helping identify what treatment might work best for them based on their cancer.

The study, led by Genomics England, NHS England, Queen Mary University of London, Guy’s and St Thomas’ NHS Foundation Trust and the University of Westminster, analyzed data covering over 30 types of solid tumors collected from more than 13,000 participants with cancer in the 100,000 Genomes Project. By looking at the genomic data alongside routine clinical data collected from participants over a 5-year period, such as hospital visits and the type of treatment they received, scientists were able to find specific genetic changes in the cancer associated with better or worse survival rates and improved patient outcomes.

Shares of Amazon closed up 1.5% on Wednesday.

Last November, the European Commission warned that Amazon's planned acquisition of iRobot raises competition concerns, saying it found Amazon may have the ability to prevent or degrade iRobot rivals’ access to its online marketplace by delisting or reducing the visibility of their products in search results and other areas.

The European Commission opened an in-depth probe into the purchase last July and is expected to rule on the deal by Feb. 14.