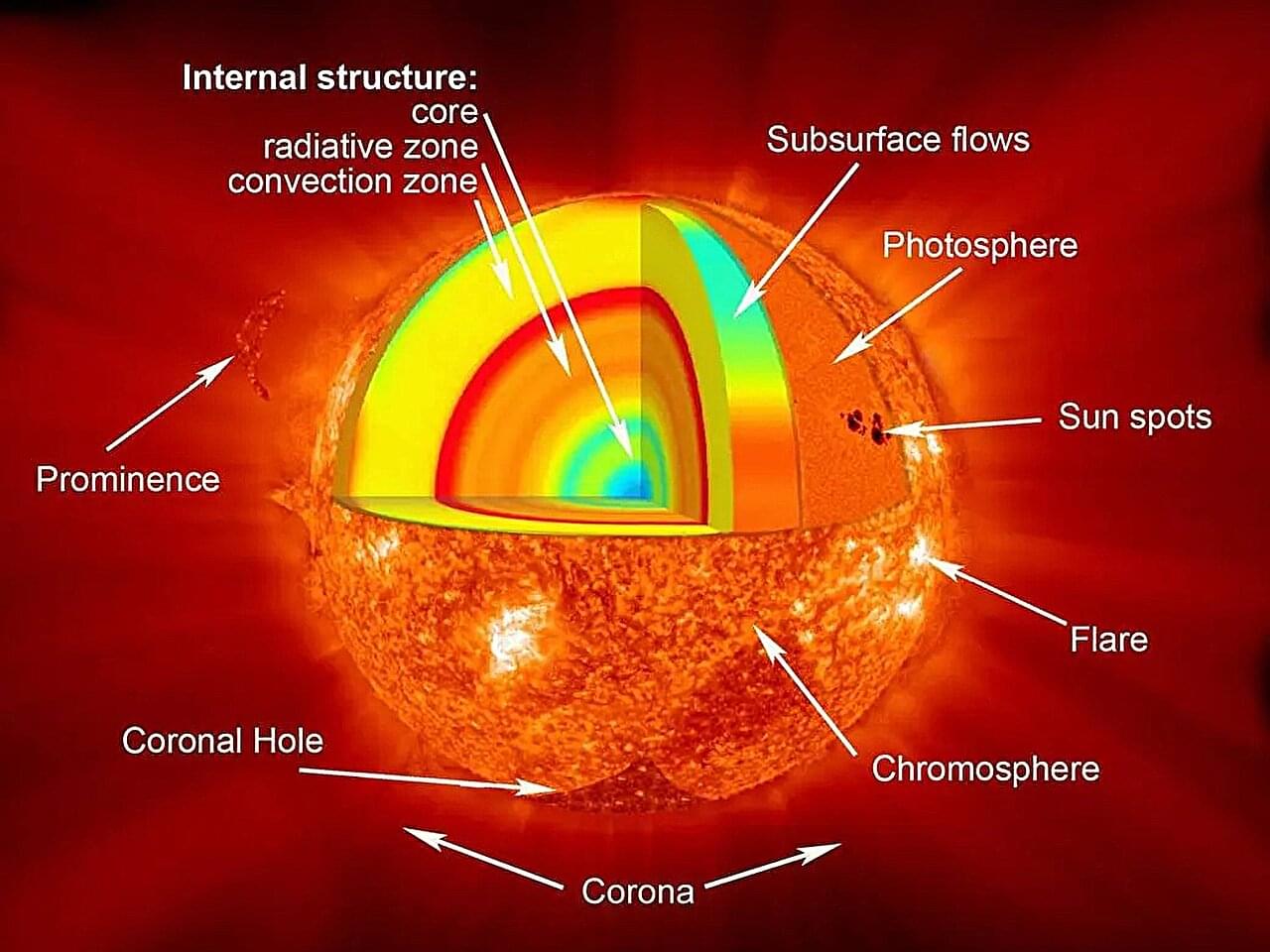

For over a century, physicists have grappled with one of the most profound questions in science: How do the rules of quantum mechanics, which govern the smallest particles, fit with the laws of general relativity, which describe the universe on the largest scales?

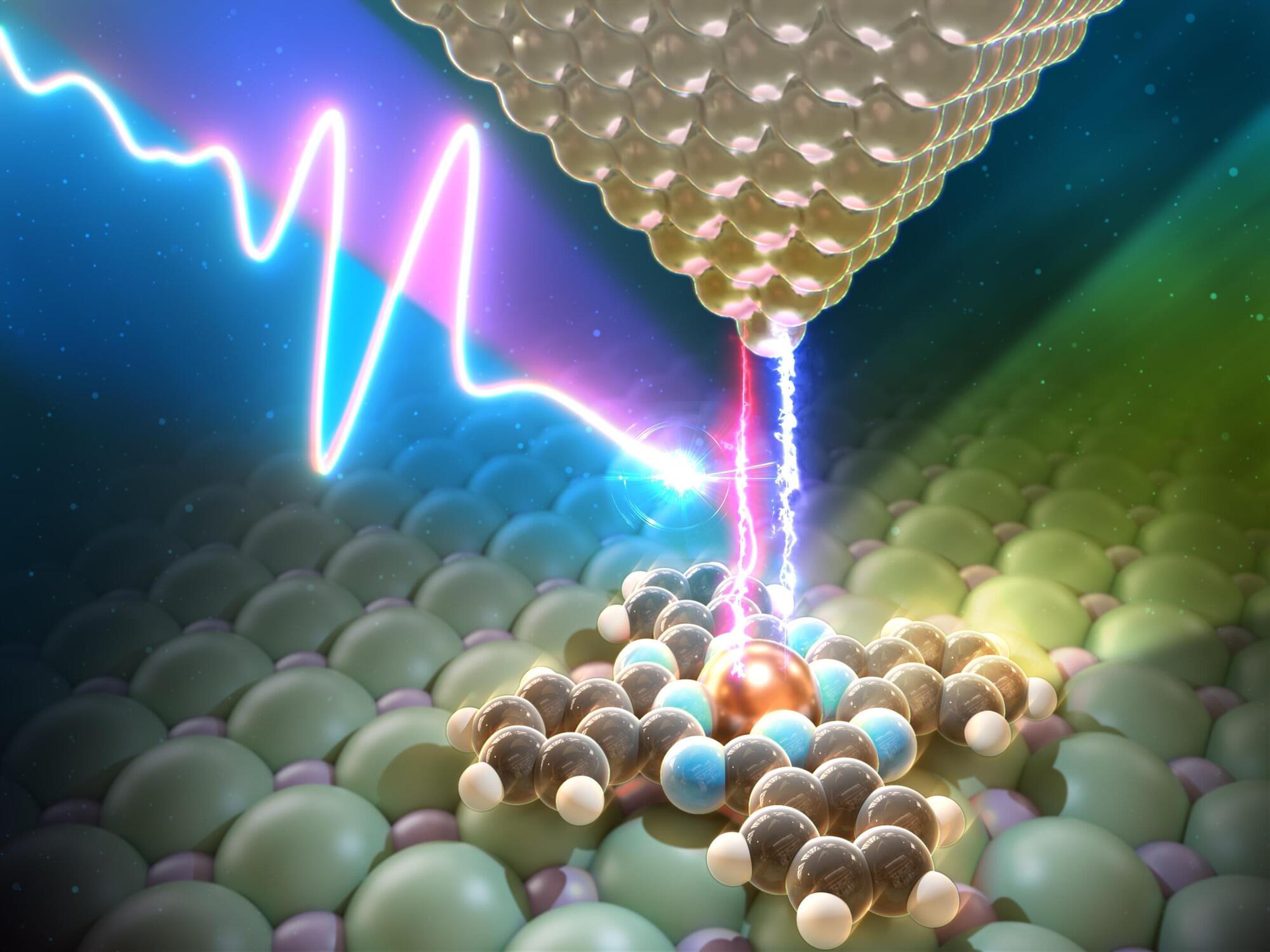

The optical lattice clock, one of the most precise timekeeping devices, is becoming a powerful tool for tackling this challenge. Inside an optical lattice clock, atoms are trapped in a “lattice” potential formed by laser beams, and their quantum coherence and interactions, which are governed by quantum mechanics, are controlled with high precision.

At the same time, according to Einstein’s theory of general relativity, time passes more slowly in stronger gravitational fields. This effect, known as gravitational redshift, slightly shifts atoms’ internal energy levels depending on their position in a gravitational field, which in turn changes their “ticking” (the oscillations that define time in optical lattice clocks).
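
To give a sense of scale, the size of the gravitational redshift can be estimated with the standard weak-field approximation, in which the fractional frequency shift between two clocks separated by a height Δh is roughly gΔh/c². The sketch below is only an illustration and is not taken from the research described here: it assumes a uniform gravitational field near Earth's surface, and the function name `fractional_redshift` is hypothetical.

```python
# Illustrative estimate only: weak-field, uniform-gravity approximation
# for the gravitational redshift between two clocks at different heights.
G_STANDARD = 9.80665       # m/s^2, standard gravity (assumed uniform)
C_LIGHT = 299_792_458.0    # m/s, speed of light (exact by definition)


def fractional_redshift(delta_h_m: float, g: float = G_STANDARD) -> float:
    """Return the fractional frequency shift (delta_f / f) for a clock
    raised by delta_h_m metres, using delta_f / f ~ g * delta_h / c^2."""
    return g * delta_h_m / C_LIGHT**2


if __name__ == "__main__":
    # Height differences of one metre, one centimetre, and one millimetre.
    for dh in (1.0, 1e-2, 1e-3):
        print(f"{dh:g} m -> fractional shift {fractional_redshift(dh):.3e}")
```

Under these assumptions, raising a clock by one metre changes its ticking rate by about one part in 10¹⁶, which is why only the most precise clocks can register the effect over small distances.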