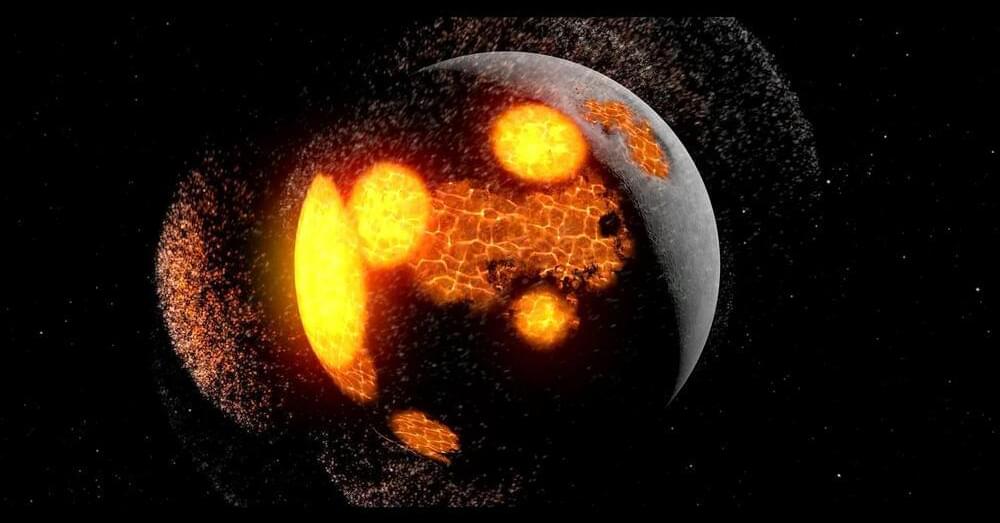

Throughout their childhood, Earth and Theia lived in harmony, but everything changed when gravitational disturbances attacked.

Scientists have proposed that two massive rock formations deep within Earth’s mantle, known as large low-shear-velocity provinces (LLSVPs), might be the remnants of the protoplanet Theia, which collided with Earth about 4.5 billion years ago to form the Moon. These formations, located beneath West Africa and the Pacific Ocean, are denser than the surrounding mantle and chemically distinct from it. Researchers are using new seismic and isotopic data to investigate whether Theia’s dense mantle survived the impact and sank to the base of Earth’s mantle. If true, this discovery could change our understanding of Earth’s structure and early history.

After reading the article, Marcus gained more than 529 upvotes with this comment: “I wonder where on Earth Theia hit. Is there even a way to determine this, or does the constant tectonic activity of Earth just erase that over time?” Don’t forget to share your thoughts about Theia and Earth’s mantle in the comment section below!

For a long time, scientists have agreed that the Moon formed after a protoplanet called Theia collided with the early Earth about 4.5 billion years ago. Now, a team of researchers has a bold new idea: the remains of Theia may be hidden in two massive bodies of rock located deep within Earth’s mantle.