By Gabriel Popkin — Nautilus

I’ve never seen the computer you’re reading this story on, but I can tell you a lot about it. It runs on electricity. It uses binary logic to carry out programmed instructions. It shuttles information using materials known as semiconductors. Its brains are built on integrated circuit chips packed with tiny switches known as transistors.

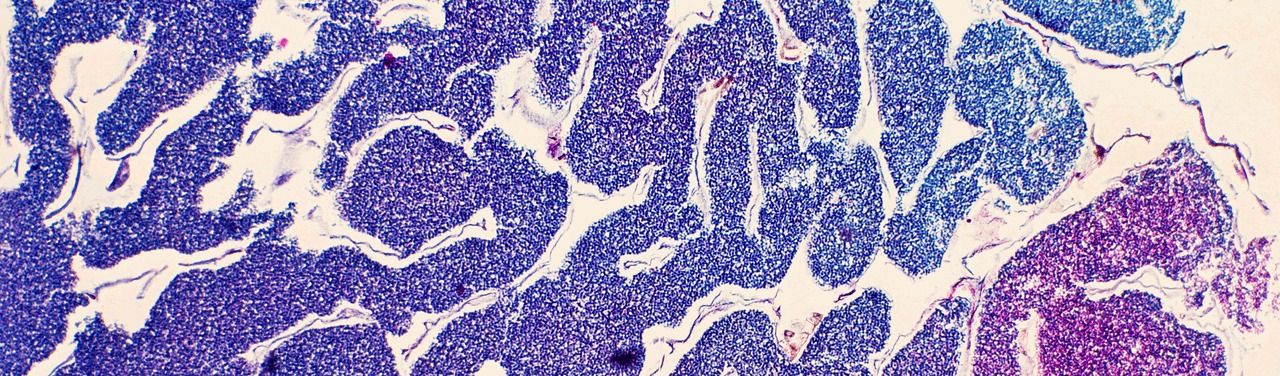

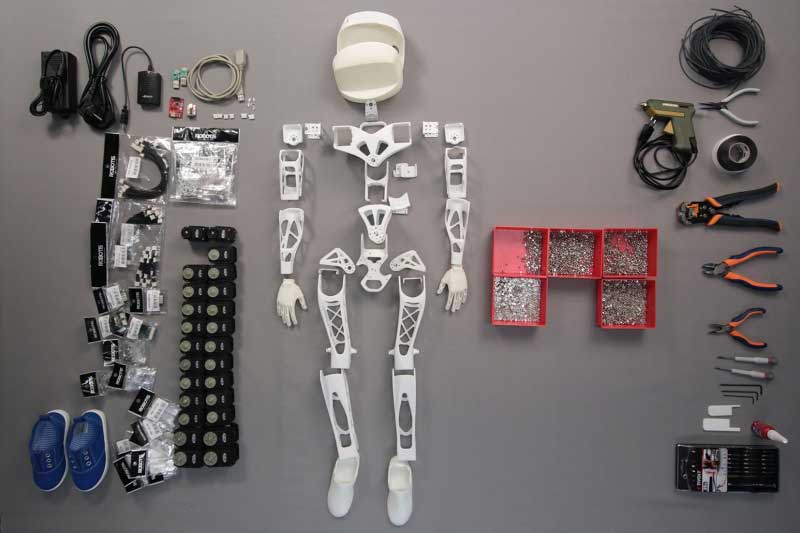

In the nearly 70 years since the first modern digital computer was built, the above specs have become all but synonymous with computing. But they need not be. A computer is defined not by a particular set of hardware, but by being able to take information as input; to change, or “process,” the information in some controllable way; and to deliver new information as output. This information and the hardware that processes it can take an almost endless variety of physical forms. Over nearly two centuries, scientists and engineers have experimented with designs that use mechanical gears, chemical reactions, fluid flows, light, DNA, living cells, and synthetic cells.

by John Biggs — TechCrunch