Alphabet and J&J have a joint venture called Verb Surgical that aims to bring its surgical robots to market by 2020.

Raising the bar for AI

The Chinese game of Go is considered one of the most complex games of strategy in human history. In 2016, AlphaGo, a computer programme created by London-based engineers, beat Lee Sedol, a top player of the game. AlphaGo went on to beat several of the world’s best players before its developers retired it from competition to focus on even more challenging global problems.

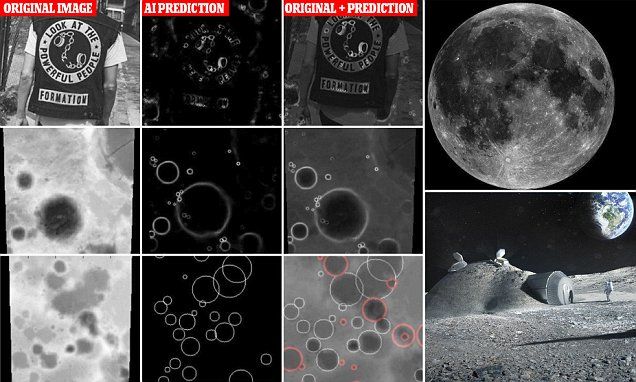

AI spots nearly 7,000 undiscovered craters on the moon within a matter of hours — and one could some day host a lunar colony…

The finding was made by a team of researchers led by Ari Silburt at Penn State University and Mohamad Ali-Dib at the University of Toronto.

They fed 90,000 images of the moon’s surface into an artificial neural network (ANN).

ANNs try to simulate the way the brain works in order to learn and can be trained to recognise patterns in information.

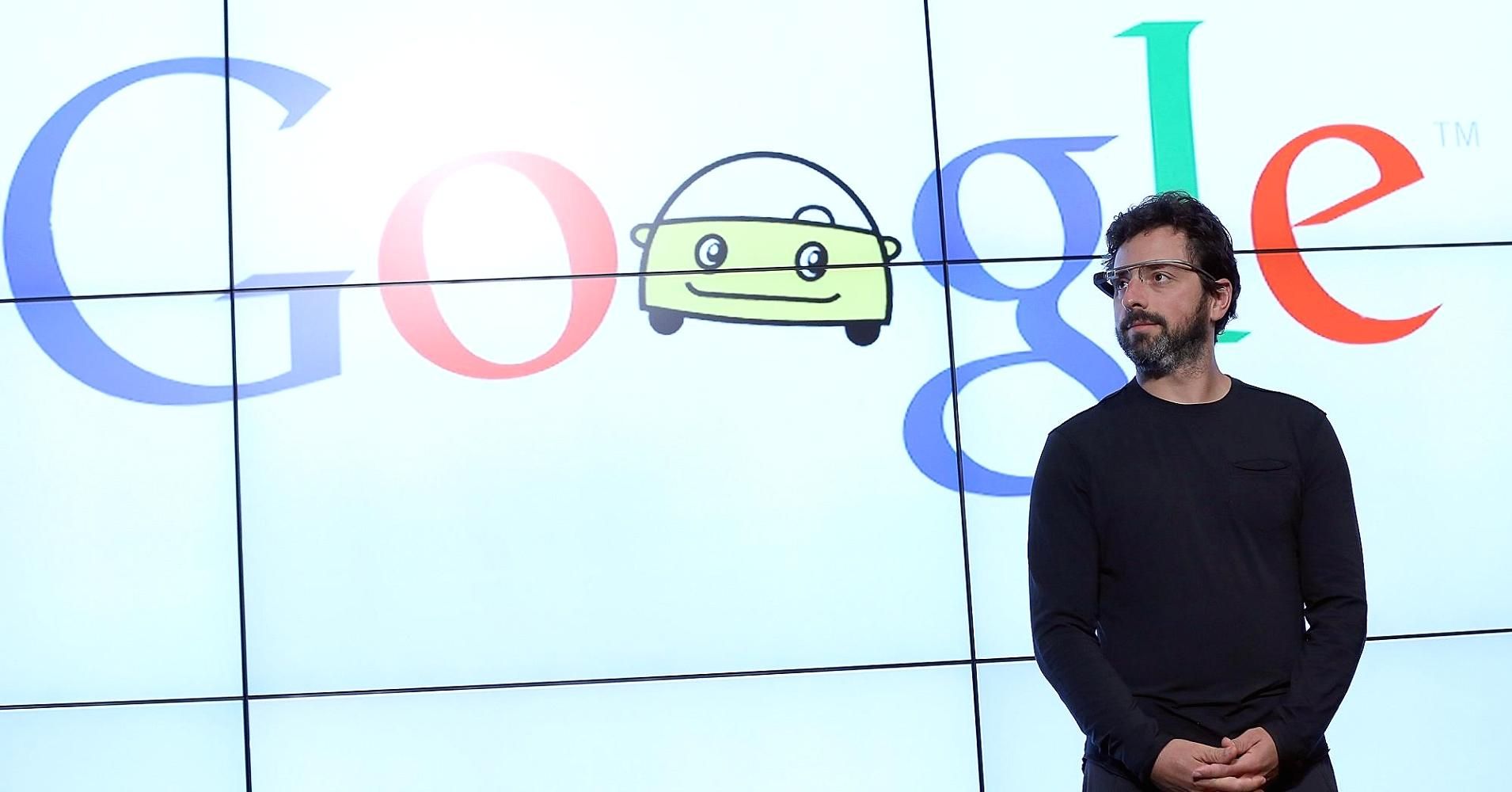

The future arrived, and it’s a minivan. Waymo’s fleet of totally driverless cars in Phoenix, Arizona, now lets members of the public hail a ride around the suburbs.

The news: Waymo’s CEO, John Krafcik, announced at SXSW in Austin, Texas, that the firm is offering trips to so-called “early riders,” the first people to have signed up to use its robotic Chrysler Pacifica taxis. The minivans don’t have a safety driver behind the wheel, but someone can take control remotely if necessary.

Why it matters: The cars have been in testing without a safety driver for a few months. But this long-awaited advance is the first time people have been able to simply hail a totally driver-free ride using an app, as they would an Uber. It’s a big moment for a firm that hopes to turn its autonomy tech into a viable business by offering driverless rides.

There are several ways we can deal with the troubles that lie ahead during the transition to full automation. Some experts and companies are exploring basic income, the centuries-old idea of giving unconditional money to all citizens, enough for them to live their lives. Other thought leaders such as Bill Gates are proposing robot taxes, where companies that use automation pay certain fees for the jobs they take away from humans. Other solutions might emerge.

Automation will continue to move forward at an accelerating pace. We don’t need to fear the destination. Instead, we must prepare ourselves for the rocky road ahead. I’m not worried about the robots taking all the jobs. I’m worried about them leaving some to the humans.

Hundreds of millions of jobs affected. Trillions of dollars of wealth created. These are the potential impacts of a coming wave of automation. In this episode of Moving Upstream, we travelled to Asia to see the next generation of industrial robots, what they’re capable of, and whether they’re friend or foe to low-skilled workers.

Watch more episodes: wsj.com/upstream

Hey, remember that dog-like robot, SpotMini, that Boston Dynamics showed off last week, the one that opened a door for its robot friend? Well, the company just dropped a new video starring the canine contraption. In this week’s episode, a human with a hockey stick does everything in his power to stop the robot from opening the door, including tugging on the machine, which struggles in an … unsettling manner. But the ambush doesn’t work. The dogbot wins and gets through the door anyway.

The most subtle detail here is also the most impressive: The robot is doing almost all of this autonomously, at least according to the video’s description. Boston Dynamics is a notoriously tight-lipped company, so the few sentences it provided with this clip are a relative gold mine. That information describes how a human handler drove the bot up to the door, then commanded it to proceed. The rest you can see for yourself. As SpotMini grips the handle and the human tries to shut the door, it braces itself and tugs harder, all on its own. As the human grabs a tether on its back and pulls it back violently, the robot stammers and wobbles and breaks free, still of its own algorithmic volition.

While bee populations dwindle at an alarming rate, Walmart made a surprising move in filing a patent for robot bees to act as agricultural pollinators.

On Thursday, Robert O. Work, a former deputy secretary of defense, will announce that he is teaming up with the Center for a New American Security, an influential Washington think tank that specializes in national security, to create a task force of former government officials, academics and representatives from private industry. Their goal is to explore how the federal government should embrace A.I. technology and work better with big tech companies and other organizations.

Older tech companies have long had ties with military and intelligence. But employees at internet outfits like Google are wary of too much cooperation.