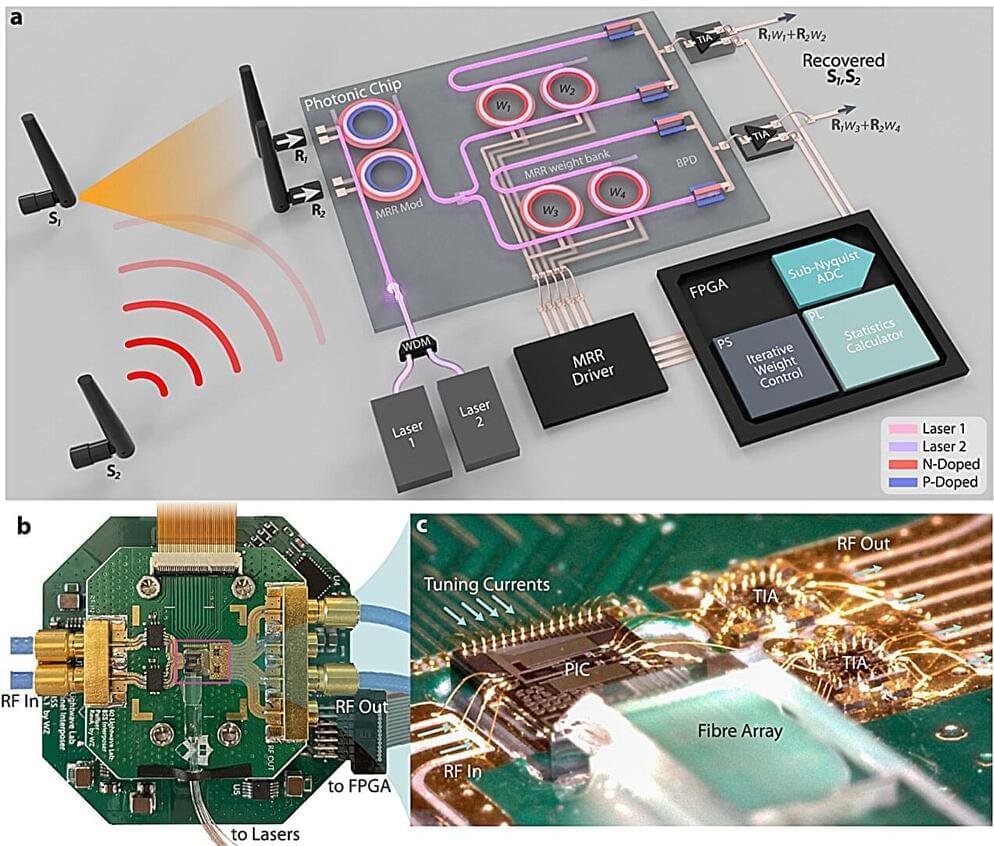

Radar altimeters are the sole indicators of an aircraft's altitude above the terrain. Spectrally adjacent 5G cellular bands pose a significant risk of jamming altimeters and disrupting landing and takeoff. As wireless technology expands in frequency coverage and adopts spatial multiplexing, similar detrimental radio-frequency (RF) interference becomes a pressing issue.

To address this interference, RF front ends with exceptionally low latency are crucial for industries such as transportation, health care, and the military, where the timeliness of transmitted messages is critical. Future generations of wireless technology will impose even more stringent latency requirements on RF front ends as data rates, carrier frequencies, and user counts increase.

Additional challenges arise from the physical movement of transceivers, which makes the mixing ratio between interference and the signal of interest (SOI) vary over time. Mobile wireless receivers must therefore adapt in real time to fluctuating interference, particularly when the signal carries safety-of-life information for navigation and autonomous driving, as in aircraft and ground vehicles.