In this episode, my guest is Dr. Robert Lustig, M.D., neuroendocrinologist, professor of pediatrics at the University of California, San Francisco (UCSF), and a bestselling author on nutrition and metabolic health. We address the “calories in-calories out” (CICO) model of metabolism and weight regulation and how specific macronutrients (protein, fat, carbohydrates), fiber and sugar can modify the CICO equation. We cover how different types of sugars, specifically fructose, sugars found in liquid form, taste intensity, and other factors impact insulin levels, liver, kidney, and metabolic health. We also explore how fructose in non-fruit sources can be addictive (acting similarly to drugs of abuse) and how sugar alters brain circuits related to food cravings and satisfaction. We discuss the role of sugar in childhood and adult obesity, gut health and disease and mental health. We also discuss how the food industry uses refined sugars to create pseudo foods and what these do to the brain and body. This episode is replete with actionable information about sugar and metabolism, weight control, brain health and body composition. It ought to be of interest to anyone seeking to understand how specific food choices impact the immediate and long-term health of the brain and body. 
For the show notes, including referenced articles and additional resources, please visit https://www.hubermanlab.com/episode/dr-robert-lustig-how-sug…our-health

Thank you to our sponsors
AG1: https://drinkag1.com/huberman
Eight Sleep: https://eightsleep.com/huberman
Levels: https://levels.link/huberman
AeroPress: https://aeropress.com/huberman
LMNT: https://drinklmnt.com/huberman
Momentous: https://livemomentous.com/huberman

Huberman Lab Social & Website
Instagram: https://www.instagram.com/hubermanlab
Twitter: https://twitter.com/hubermanlab
Facebook: https://www.facebook.com/hubermanlab
TikTok: https://www.tiktok.com/@hubermanlab
LinkedIn: https://www.linkedin.com/in/andrew-huberman
Website: https://www.hubermanlab.com
Newsletter: https://www.hubermanlab.com/newsletter

Dr. Robert Lustig
Website: https://robertlustig.com
Books: https://robertlustig.com/books
Publications: https://robertlustig.com/publications
Blog: https://robertlustig.com/blog
UCSF academic profile: https://profiles.ucsf.edu/robert.lustig
Metabolical (book): https://amzn.to/48mNhOE
SugarScience: http://sugarscience.ucsf.edu
X: https://twitter.com/RobertLustigMD
Facebook: https://www.facebook.com/DrRobertLustig
LinkedIn: https://www.linkedin.com/in/robert-lustig-8904245
Instagram: https://www.instagram.com/robertlustigmd
Threads: https://www.threads.net/@robertlustigmd

Timestamps
00:00:00 Dr. Robert Lustig
00:02:02 Sponsors: Eight Sleep, Levels & AeroPress
00:06:41 Calories, Fiber
00:12:15 Calories, Protein & Fat, Trans Fats
00:18:23 Carbohydrate Calories, Glucose vs. Fructose, Fruit, Processed Foods
00:26:43 Fructose, Mitochondria & Metabolic Health
00:31:54 Trans Fats; Food Industry & Language
00:35:33 Sponsor: AG1
00:37:04 Glucose, Insulin, Muscle
00:42:31 Insulin & Cell Growth vs. Burn; Oxygen & Cell Growth, Cancer
00:51:14 Glucose vs. Fructose, Uric Acid; “Leaky Gut” & Inflammation
01:00:51 Supporting the Gut Microbiome, Fasting
01:04:13 Highly Processed Foods, Sugars; “Price Elasticity” & Food Industry
01:10:28 Sponsor: LMNT
01:11:51 Processed Foods & Added Sugars
01:14:19 Sugars, High-Fructose Corn Syrup
01:18:16 Food Industry & Added Sugar, Personal Responsibility, Public Health
01:30:04 Obesity, Diabetes, “Hidden” Sugars
01:34:57 Diet, Insulin & Sugars
01:38:20 Tools: NOVA Food Classification; Perfact Recommendations
01:43:46 Meat & Metabolic Health, Eggs, Fish
01:46:44 Sources of Omega-3s; Vitamin C & Vitamin D
01:52:37 Tool: Reduce Inflammation; Sugars, Cortisol & Stress
01:59:12 Food Industry, Big Pharma & Government; Statins
02:06:55 Public Health Shifts, Rebellion, Sugar Tax, Hidden Sugars
02:12:58 Real Food Movement, Public School Lunches & Processed Foods
02:18:25 3 Fat Types & Metabolic Health; Sugar, Alcohol & Stress
02:26:40 Artificial & Non-Caloric Sweeteners, Insulin & Weight Gain
02:34:32 Re-Engineering Ultra-Processed Food
02:38:45 Sugar & Addiction, Caffeine
02:45:18 GLP-1, Semaglutide (Ozempic, Wegovy, Tirzepatide), Risks; Big Pharma
02:57:39 Obesity & Sugar Addiction; Brain Re-Mapping, Insulin & Leptin Resistance
03:03:31 Fructose & Addiction, Personal Responsibility & Tobacco
03:07:27 Food Choices: Fruit, Rice, Tomato Sauce, Bread, Meats, Fermented Foods
03:12:54 Intermittent Fasting, Diet Soda, Food Combinations, Fiber, Food Labels
03:19:14 Improving Health, Advocacy, School Lunches, Hidden Sugars
03:26:55 Zero-Cost Support, Spotify & Apple Reviews, YouTube Feedback, Sponsors, Momentous, Social Media, Neural Network Newsletter

#HubermanLab #Science #Nutrition

Title Card Photo Credit: Mike Blabac (https://www.blabacphoto.com)
Disclaimer: https://hubermanlab.com/disclaimer

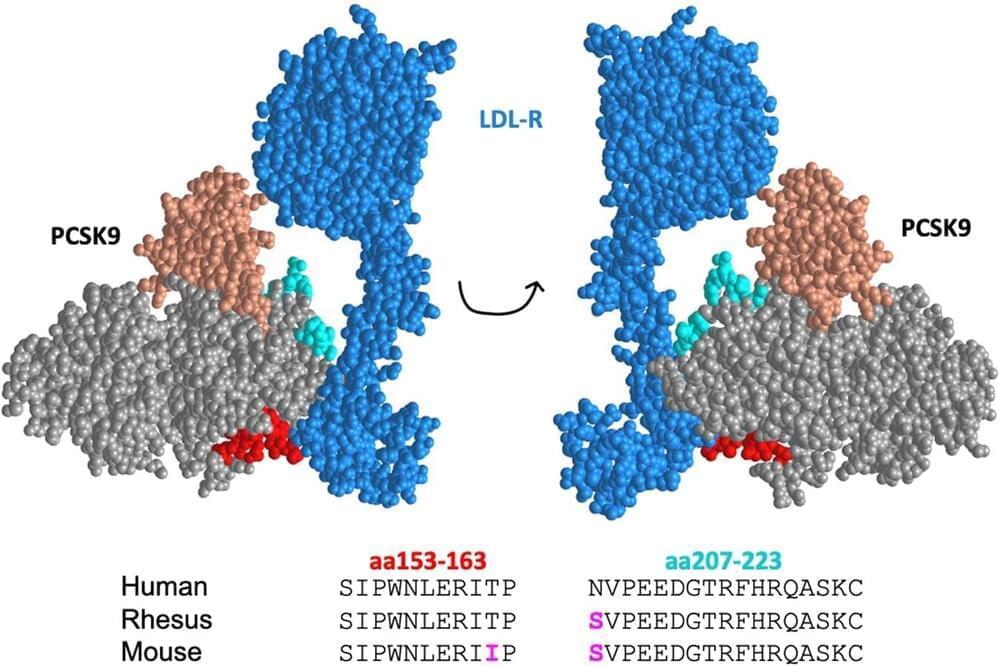

The future of heart health: Researchers develop vaccine to lower cholesterol

Nearly two in five U.S. adults have high cholesterol, according to the Centers for Disease Control and Prevention (CDC). Untreated, high cholesterol can lead to heart disease and stroke, which are two of the top causes of death in the U.S. Worldwide, cardiovascular diseases claim nearly 18 million lives every year, according to the World Health Organization.

A new vaccine developed by researchers at The University of New Mexico School of Medicine could be a game-changer, providing an inexpensive method to lower “bad” LDL cholesterol, which creates dangerous plaques that can block blood vessels.

In a recent study published in npj Vaccines, a team led by Bryce Chackerian, Ph.D., Regents’ Professor in the Department of Molecular Genetics & Microbiology, reported the vaccines lowered LDL cholesterol almost as effectively as an expensive class of drugs known as PCSK9 inhibitors.

Optimize Fat Loss & Muscle Building: Avoid These Mistakes

Welcome to another episode of Health Theory! This episode promises to deliver a riveting conversation with the master of fitness, Andy Galpin. Andy is not just your average exercise physiologist, professor, and researcher. He’s a true powerhouse with a trademark ability to seamlessly bridge the gap between academic knowledge and real-world application.

Vertex’s First Crispr Gene Editing Therapy Gets EU Backing

Europe’s health regulator followed the US and UK in backing the first gene-editing therapy to use Crispr technology, a Vertex Pharmaceuticals Inc. and Crispr Therapeutics AG treatment for sickle cell disease.

The European Medicines Agency’s expert panel recommended on Friday authorizing the Vertex and Crispr drug, Casgevy, for people with severe sickle cell disease and another serious hereditary blood disorder, beta-thalassemia, which is traditionally treated with repeated transfusions. Vertex said before the ruling that it had yet to establish a European list price for the one-time therapy, which costs $2.2 million in the US.

The treatment makes precisely targeted changes in patients’ DNA, a months-long process that requires removing bone marrow and a stem cell transplant. In Europe, Vertex said its initial focus will be on countries with the highest numbers of patients, including France, Italy, the UK and Germany.

Dictionary.com 2023 Word Of The Year ‘Hallucinate’ Is An AI Health Issue

Bad things can happen when you hallucinate. If you are human, you can end up doing things like putting your underwear in the oven. If you happen to be a chatbot or some other type of artificial intelligence (AI) tool, you can spew out false and misleading information, which—depending on the info—could affect many, many people in a bad-for-your-health-and-well-being type of way. And this latter type of hallucinating has become increasingly common in 2023 with the continuing proliferation of AI. That’s why Dictionary.com has an AI-specific definition of “hallucinate” and has named the word as its 2023 Word of the Year.

Dictionary.com noticed a 46% jump in dictionary lookups for the word “hallucinate” from 2022 to 2023, with a comparable increase in searches for “hallucination” as well. Meanwhile, there was a 62% jump in searches for AI-related words like “chatbot”, “GPT”, “generative AI”, and “LLM.” So the increase in searches for “hallucinate” is likely due more to the following AI-specific definition of the word from Dictionary.com than to the traditional human definition:

hallucinate [ huh-loo-suh-neyt ] verb (of artificial intelligence): to produce false information contrary to the intent of the user and present it as if true and factual. Example: When chatbots hallucinate, the result is often not just inaccurate but completely fabricated.

Here’s a non-AI-generated news flash: AI can lie, just like humans. Not all AI, of course. But AI tools can be programmed to serve like little political animals or snake oil salespeople, generating false information while making it seem like it’s all about facts. The difference from humans is that AI can churn out this misinformation and disinformation at even greater speeds. For example, a study published in JAMA Internal Medicine last month showed how OpenAI’s GPT Playground could generate 102 different blog articles “that contained more than 17,000 words of disinformation related to vaccines and vaping” within just 65 minutes. Yes, just 65 minutes. That’s about how long it takes to watch the TV show 60 Minutes and then make a quick uncomplicated bathroom trip that doesn’t involve texting on the toilet. Moreover, the study demonstrated how “additional generative AI tools created an accompanying 20 realistic images in less than 2 minutes.”

Healthcare providers to join US plan to manage AI risks

WASHINGTON, Dec 14 (Reuters) — Twenty-eight healthcare companies, including CVS Health (CVS.N), are signing U.S. President Joe Biden’s voluntary commitments aimed at ensuring the safe development of artificial intelligence (AI), a White House official said on Thursday.

The commitments by healthcare providers and payers follow those of 15 leading AI companies, including Google, OpenAI and OpenAI partner Microsoft (MSFT.O), to develop AI models responsibly.

Biden’s government is pushing to set parameters around AI as it makes rapid gains in capability and popularity while regulation remains limited.

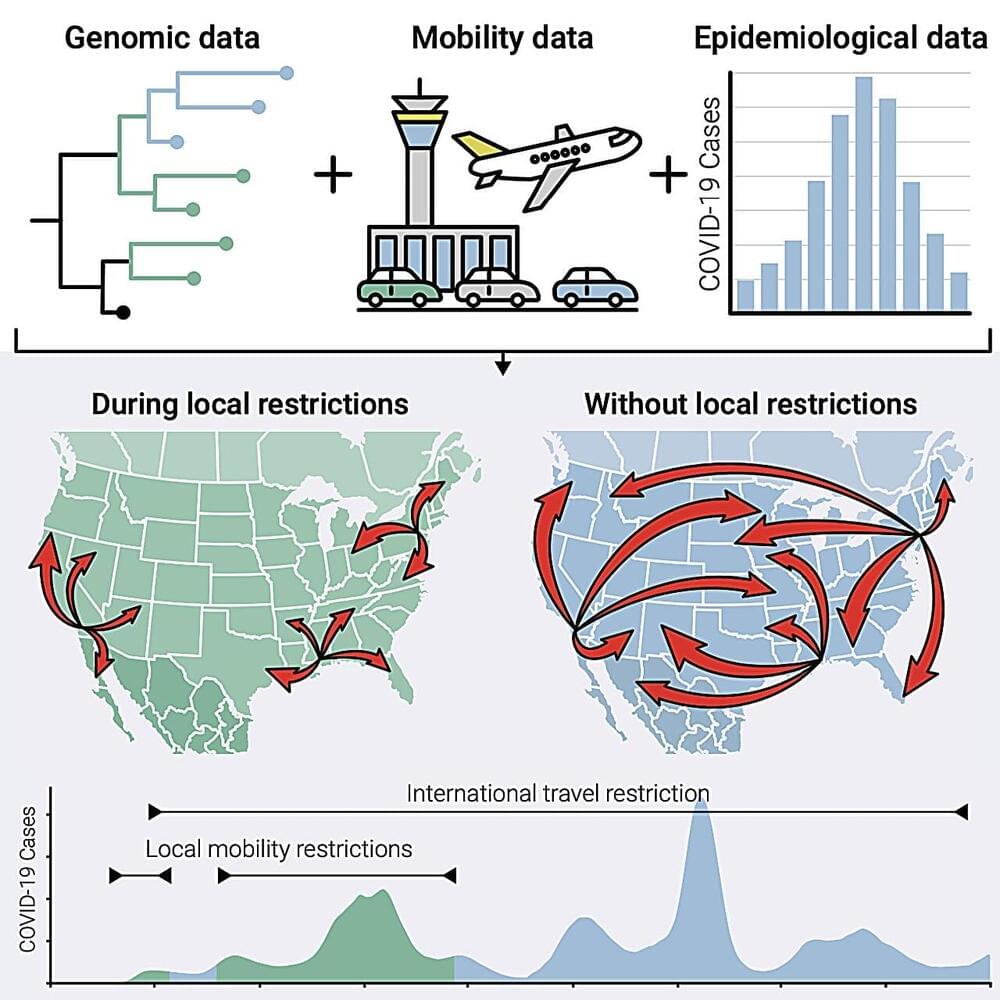

Social distancing was more effective at preventing local COVID-19 transmission than international border closures

Elucidating human contact networks could help predict and prevent the transmission of SARS-CoV-2 and future pandemic threats. A new study from Scripps Research scientists and collaborators points to which public health protocols worked to mitigate the spread of COVID-19—and which ones didn’t.

In the study, published online in Cell on December 14, 2023, the Scripps Research-led team of scientists investigated the efficacy of different mandates (including stay-at-home measures, social distancing and travel restrictions) at preventing local and regional transmission during different phases of the COVID-19 pandemic.

They found that local transmission was driven by the amount of travel between locations, not by how geographically nearby they were. The study also revealed that the partial closure of the U.S.-Mexico border was ineffective at preventing cross-border transmission of the virus. These findings, in combination with ongoing genomic surveillance, could help guide public health policy to prevent future pandemics and mitigate the new “endemic” phase of COVID-19.

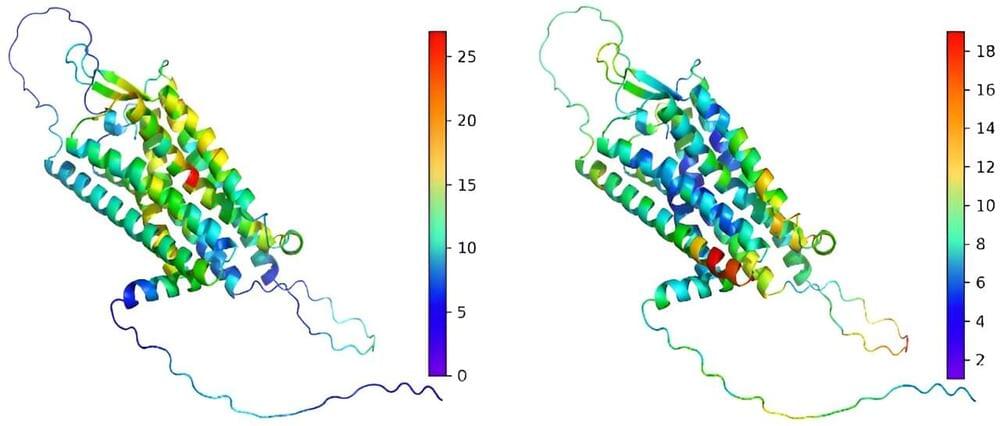

Unlocking the human genome: Innovative machine learning tool predicts functional consequences of genetic variants

In a novel study, researchers from the Icahn School of Medicine at Mount Sinai have introduced LoGoFunc, an advanced computational tool that predicts pathogenic gain-of-function and loss-of-function variants across the genome.

Unlike current methods that predominantly focus on loss of function, LoGoFunc distinguishes among different types of harmful mutations, offering potentially valuable insights into diverse disease outcomes. The findings are described in Genome Medicine.

Genetic variations can alter protein function, with some mutations boosting activity or introducing new functions (gain of function), while others diminish or eliminate function (loss of function). These changes can have significant implications for human health and the treatment of disease.

Extending the uncertainty principle by using an unbounded operator

A study published in the journal Physical Review Letters by researchers in Japan solves a long-standing problem in quantum physics by redefining the uncertainty principle.

Werner Heisenberg’s uncertainty principle is a key and surprising feature of quantum mechanics, and he can thank his hay fever for it. Miserable in Göttingen in the summer of 1925, the young German physicist vacationed on the remote, rocky island of Helgoland, in the North Sea off the northern German coast. His allergies improved, and he was able to continue his work trying to understand the intricacies of Bohr’s model of the atom, developing tables of internal atomic properties, such as energy, position and momentum.

When he returned to Göttingen, his advisor, Max Born, recognized these tables could each be formed into a matrix (essentially a two-dimensional table of values). Together with the 22-year-old Pascual Jordan, they refined their work into matrix mechanics, the first successful theory of quantum mechanics: the physical laws that describe tiny objects like atoms and electrons.

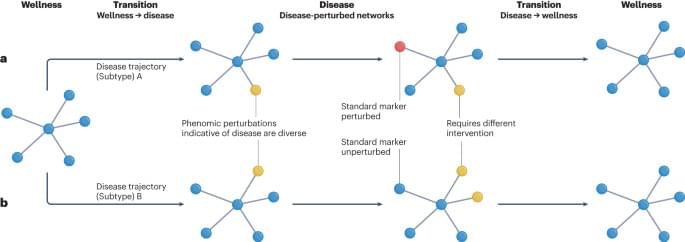

The transition from genomics to phenomics in personalized population health

This Perspective reviews large-scale genomics and longitudinal phenomics efforts and the insights they can provide into wellness. The authors describe their vision for transforming current health care from a disease-oriented model into data-driven, wellness-oriented and personalized population health.