Scientists have used satellite data from the Mauna Loa eruption to improve lava flow modeling for both Earth and other planetary bodies. [https://www.labroots.com/trending/earth-and-the-environment/…-planets-2](https://www.labroots.com/trending/earth-and-the-environment/…-planets-2)

Do lava flows behave the same on other planets as they do on Earth? A recent study published in the Journal of Volcanology and Geothermal Research hopes to address this question. A team of scientists investigated new methods for predicting lava flow behavior and how those methods could be applied to other planets. The study has the potential to help scientists and engineers develop novel methods that can be applied both on Earth and beyond.

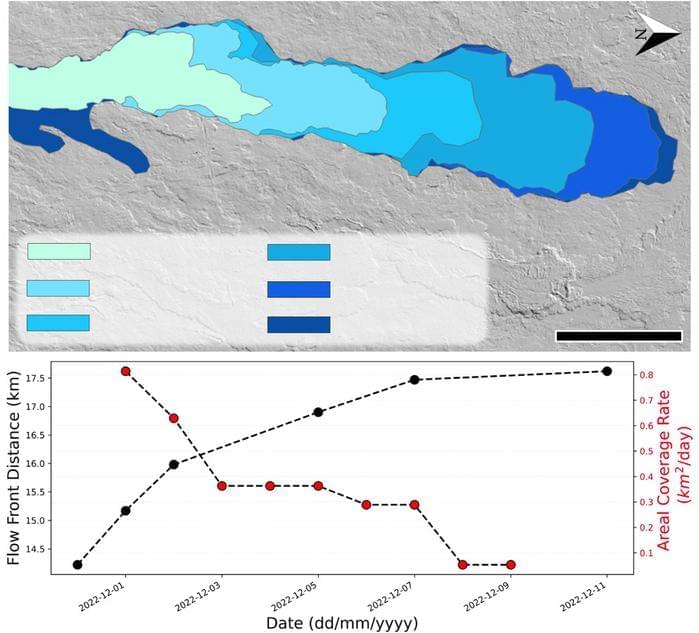

For the study, the researchers examined satellite data from the 2022 Mauna Loa eruption in Hawaii, which lasted from November 27 to December 10. The motivation was to address a longstanding knowledge gap regarding the limitations of relying on individual satellite datasets for volcanic hazard response. To do so, the team analyzed a combination of satellite data from private companies and government agencies to gain insight into the entire time period of the eruption.

In the end, the researchers successfully identified an origin for the eruption and gathered data and fresh insight into lava flow behavior during the eruption and post-eruption activity. They note several times throughout the study that this new method could be used to study volcanic activity on Mars, Venus, and Jupiter’s volcanic moon Io. Io is the most volcanically active planetary body in the solar system, with hundreds of active volcanoes.