No direct empirical evidence for retrocausality exists yet, but existing data, such as the results of Bell tests, can be interpreted in a way that supports a retrocausal framework.

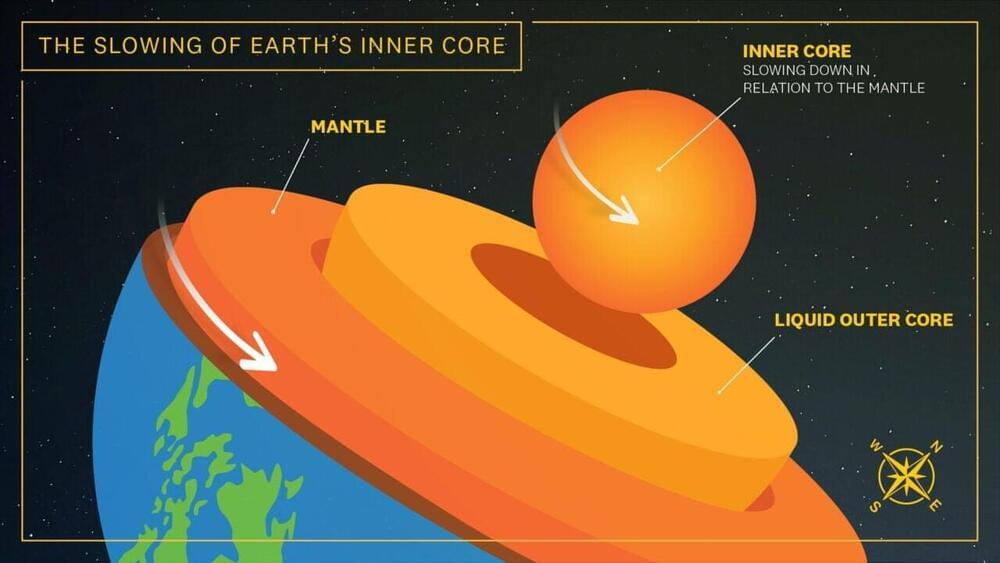

Have you ever wondered whether future states could affect events that have already occurred? Although the idea sounds bizarre, some interpretations of quantum mechanics allow for it through a concept called retrocausality. According to this concept, causality and time do not work in the conventional sense: an effect can precede its cause, reversing the usual direction of causation in time.

In the classical world, this is not what we experience. Every cause has a corresponding effect, and the cause always comes first. Conventional thinking holds that once an event has occurred, there’s almost zero probability that it can be reversed. The physical reason has to do with the arrow of time. The arrow of time points in a single, forward direction, and explaining why it does so remains one of the major unsolved problems in the foundations of physics.