Fyodor R.

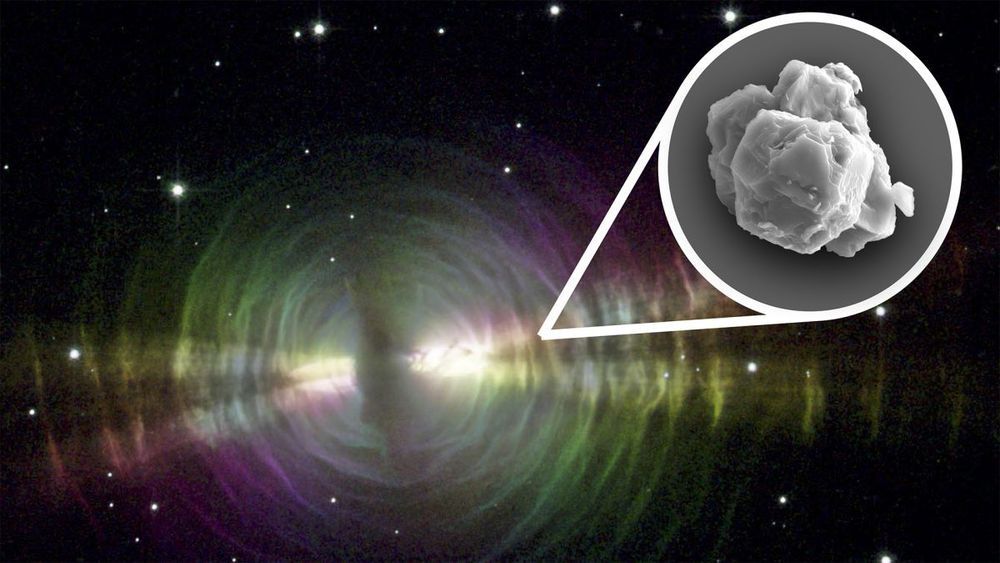

Scientists recently identified the oldest solid material ever found on Earth: stardust up to 7 billion years old, tucked away in a massive, rocky meteorite that struck our planet half a century ago.

🏺Stardust

Stars have life cycles. They’re born when bits of dust and gas floating through space find each other, collapse in on themselves, and heat up. They burn for millions to billions of years, and then they die. When they die, they pitch the particles that formed in their winds out into space, and those bits of stardust eventually form new stars, along with new planets, moons, and meteorites. And in a meteorite that fell fifty years ago in Australia, scientists have now discovered stardust that formed 5 to 7 billion years ago, making it the oldest solid material ever found on Earth.