Scientists disagree on when the Thwaites Glacier, nicknamed the Doomsday Glacier, will fully melt, but they are starting to weigh up large-scale interventions.

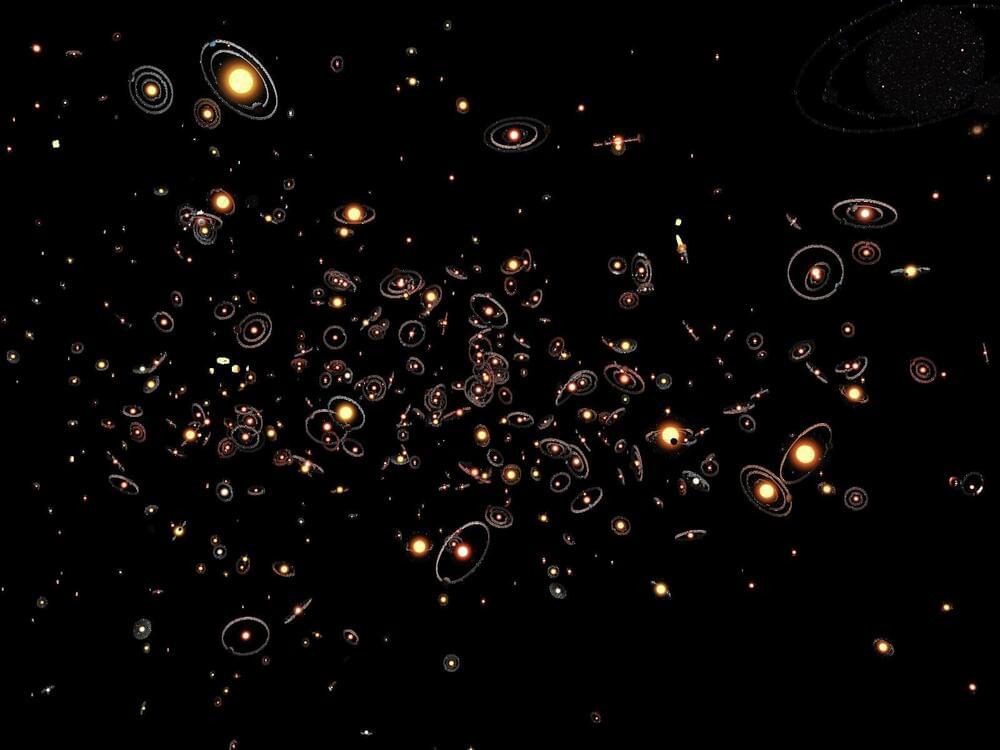

According to astrophysicist Erik Zackrisson’s computer model, there could be about 70 quintillion planets in the universe. However, most of these planets are vastly different from Earth — they tend to be larger, older, and not suited for life. Only around 63 exoplanets have been found in their stars’ habitable zones, making Earth potentially one of the few life-sustaining planets. This could explain Fermi’s paradox — the puzzling lack of evidence for extraterrestrial life. While we continue searching, Earth might be truly special.

After reading the article, Harry gained more than 55 upvotes with this comment: “If life developing on Earth the way it has is 1 in a billion, then this would imply that there is life on at least a billion other planets (?)”
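As a rough check on the arithmetic behind that comment, the sketch below multiplies the planet count from Zackrisson's model (about 70 quintillion) by the commenter's hypothetical odds of 1 in a billion. Both inputs are illustrative: the odds are the commenter's supposition, not a measured value, and the function name is made up for this example.

```python
# Figures come from the text above. The 1-in-a-billion odds are the
# commenter's hypothetical, not an established estimate.
PLANETS_IN_UNIVERSE = 70 * 10**18  # 70 quintillion (short scale)
ODDS_DENOMINATOR = 10**9           # "1 in a billion"


def expected_life_bearing_planets(planets: int, one_in: int) -> int:
    """Expected count if each planet has an independent 1-in-`one_in` chance."""
    return planets // one_in


expected = expected_life_bearing_planets(PLANETS_IN_UNIVERSE, ODDS_DENOMINATOR)
print(f"{expected:,}")  # prints 70,000,000,000
```

Under these assumptions the expected number is about 70 billion planets, well above the "at least a billion" in the comment.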

The prevailing belief among astronomers is that the number of planets should at least match the number of stars. With roughly 100 billion galaxies in the universe, together containing about a billion trillion stars, there should be an equally vast number of exoplanets, including Earth-like worlds, at least in theory.

Could Earth be a cosmic sanctuary for observation? The Zoo Hypothesis suggests so.

In 1950, Italian-American physicist Enrico Fermi famously asked, “Where is everybody?” The question has since become the basis of the Fermi Paradox, addressing the conflict between the high probability of extraterrestrial life and the complete lack of evidence for its existence. Several hypotheses have been proposed to explain this, including the Zoo Hypothesis, first introduced in 1973 by Harvard astrophysicist John A. Ball. This theory posits that advanced alien civilizations may know of Earth and its inhabitants but choose to avoid contact, allowing humanity to develop naturally without interference.

The 3 Body Problem Explored: Cosmic Sociology, Longtermism & Existential Risk — round table discussion with three great minds: Robin Hanson, Anders Sandberg and Joscha Bach — moderated by Adam Ford (SciFuture) and James Hughes (IEET).

Some of the items discussed:

- How can narratives that keep people engaged avoid falling short of being realistic?

- In what ways is AI superintelligence kept off stage to allow a narrative that is familiar and easier to make sense of?

- Differences in moral perspectives — moral realism, existentialism and anti-realism.

- Will values of advanced civilisations converge to a small number of possibilities, or will they vary greatly?

- How much will competition be the dominant dynamic in the future, compared to co-ordination?

- In a competitive dynamic, will defense or offense be the dominant strategy?

Many thanks for tuning in!

Have any ideas about people to interview? Want to be notified about future events? Any comments about the STF series?

Please fill out this form: https://docs.google.com/forms/d/1mr9PIfq2ZYlQsXRIn5BcLH2onbiSI7g79mOH_AFCdIk/

Kind regards.

Adam Ford.

- Science, Technology & the Future — #SciFuture — http://scifuture.org

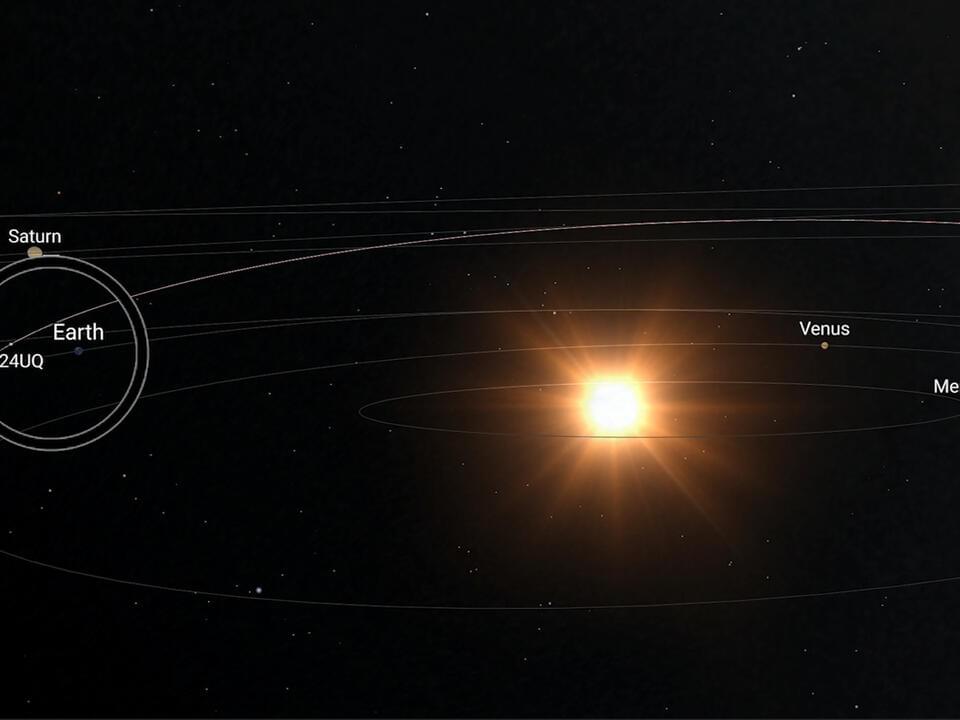

The two new studies place the sources of ordinary chondrite types into specific asteroid families – and most likely specific asteroids. This work requires painstaking back-tracking of meteoroid trajectories, observations of individual asteroids, and detailed modelling of the orbital evolution of parent bodies.

The study led by Miroslav Brož reports that ordinary chondrites originate from collisions between asteroids larger than 30 kilometres in diameter that occurred less than 30 million years ago.

The Koronis and Massalia asteroid families provide appropriate body sizes and are in a position that leads to material falling to Earth, based on detailed computer modelling. Of these families, asteroids Koronis and Karin are likely the dominant sources of H chondrites. The Massalia (L) and Flora (LL) families are by far the main sources of L- and LL-like meteorites.

An exploration of whether we can solve the Fermi Paradox without ever discovering alien civilizations.

My Patreon Page:

/ johnmichaelgodier.

An exploration of a potential extinction event involving a quasar igniting in an old galaxy and sterilizing it of much of its life.

My Event Horizon Channel:

/ eventhorizonshow.

The great George Church takes us through the revolutionary journey of DNA sequencing from his early groundbreaking work to the latest advancements. He discusses the evolution of sequencing methods, including molecular multiplexing, and their implications for understanding and combating aging.

We talk about the rise of biotech startups, potential future directions in genome sequencing, the role of precise gene therapies, the ongoing integration of nanotechnology and biology, the potential of biological engineering in accelerating evolution, transhumanism, the Human Genome Project, and the importance of intellectual property in biotechnology.

The episode concludes with reflections on future technologies, the importance of academia in fostering innovation, and the need for scalable developments in biotech.

00:00 Introduction to Longevity and DNA Sequencing.

01:43 George Church’s Early Work in Genomic Sequencing.

02:38 Innovations in DNA Sequencing.

03:15 The Evolution of Sequencing Methods.

07:41 Longevity and Aging Reversal.

12:12 Biotech Startups and Commercial Endeavors.

17:38 Future Directions in Genome Sequencing.

28:10 Humanity’s Role and Transhumanism.

37:23 Exploring the Connectome and Neural Networks.

38:29 The Mystery of Life: From Atoms to Living Systems.

39:35 Accelerating Evolution and Biological Engineering.

41:37 Merging Nanotechnology and Biology.

45:00 The Future of Biotech and Young Innovators.

47:16 The Human Genome Project: Successes and Shortcomings.

01:01:10 Intellectual Property in Biotechnology.

01:06:30 Future Technologies and Final Thoughts.