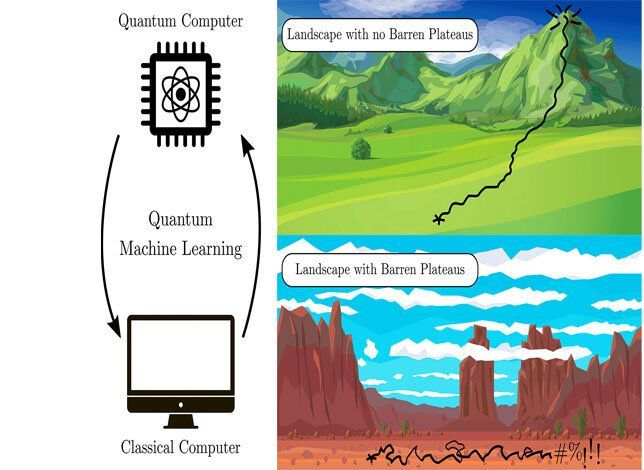

Many machine learning algorithms on quantum computers suffer from the dreaded “barren plateau” of unsolvability, where they run into dead ends on optimization problems. This challenge had been relatively unstudied until now. Rigorous theoretical work has established theorems that determine whether a given machine learning algorithm will keep working as it scales up on larger computers.

“The work solves a key problem of usability for quantum machine learning. We rigorously proved the conditions under which certain architectures of variational quantum algorithms will or will not have barren plateaus as they are scaled up,” said Marco Cerezo, lead author on the paper published in Nature Communications today by a Los Alamos National Laboratory team. Cerezo is a postdoc researching quantum information theory at Los Alamos. “With our theorems, you can guarantee that the architecture will be scalable to quantum computers with a large number of qubits.”

“Usually the approach has been to run an optimization and see if it works, and that was leading to fatigue among researchers in the field,” said Patrick Coles, a coauthor of the study. Establishing mathematical theorems and deriving first principles takes the guesswork out of developing algorithms.