The rapid advancement and diffusion of artificial intelligence (AI) systems, such as the machine learning models underpinning ChatGPT, Gemini and similar platforms, have placed new demands on the electronics engineering industry. These systems are computationally intensive and consume substantial power, particularly when running on existing devices.

Electronics engineers worldwide have thus been trying to develop new hardware systems that can run machine learning algorithms with greater energy efficiency, without adversely affecting their performance. One promising approach for reducing power consumption entails the use of two-dimensional (2D) semiconductors, ultrathin materials that have already shown potential for the development of smaller electronics.

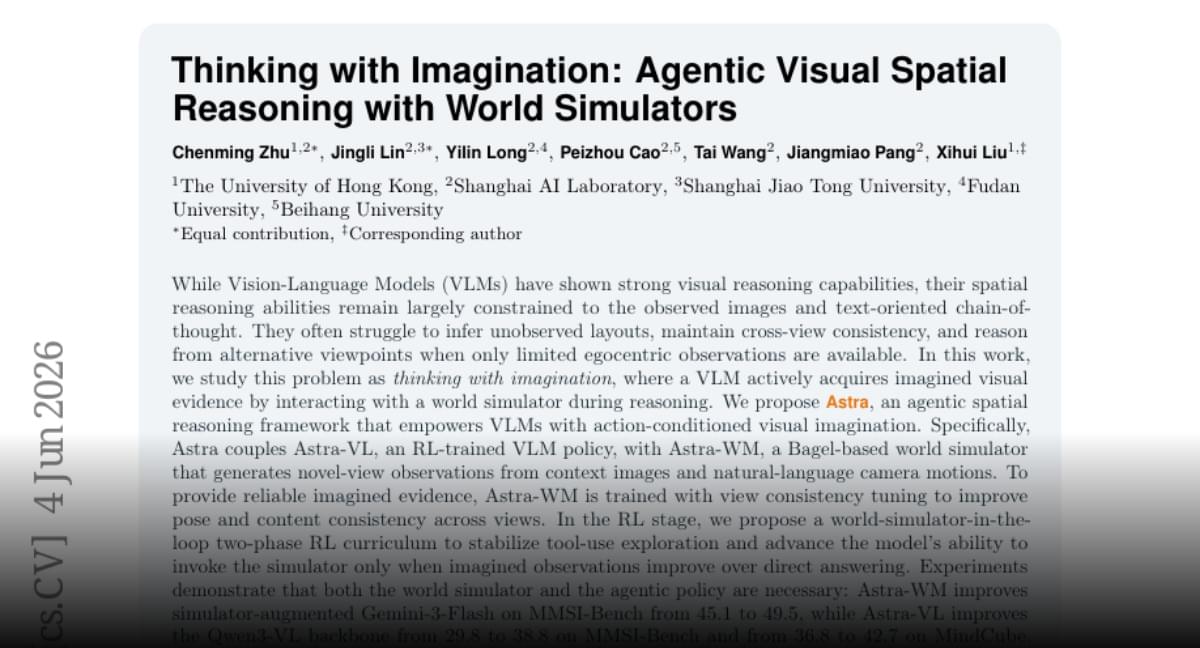

Researchers at Nanjing University, Suzhou Laboratory and Huawei Technologies Co. Ltd. recently developed and fabricated a fully functional computer based on the 2D semiconductor molybdenum disulfide (MoS₂).