Apr 10, 2024

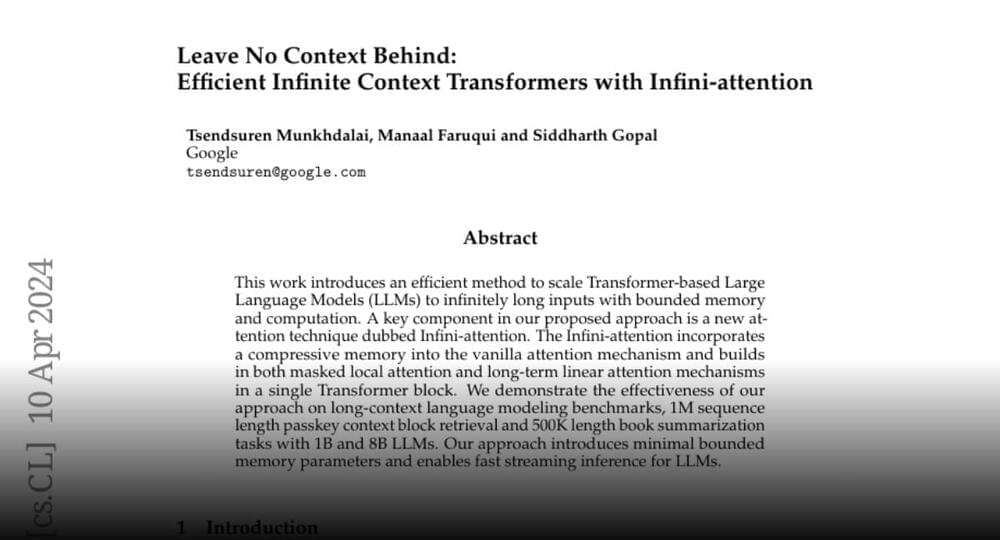

Paper page — Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention

Posted by Cecile G. Tamura in category: futurism

Google announces Leave No Context Behind: Efficient Infinite Context Transformers with Infini-attention.

This work introduces an efficient method to scale Transformer-based Large Language Models (LLMs) to infinitely long inputs with bounded memory and…