How does the brain know which neurons to adjust during learning in order to optimize behavior? MIT researchers discovered that brains can use cell-by-cell error signals to do this, in a way surprisingly similar to how AI systems are trained via backpropagation.

When we learn a new skill, the brain has to decide—cell by cell—what to change. New research from MIT suggests it can do that with surprising precision, sending targeted feedback to individual neurons so each one can adjust its activity in the right direction.

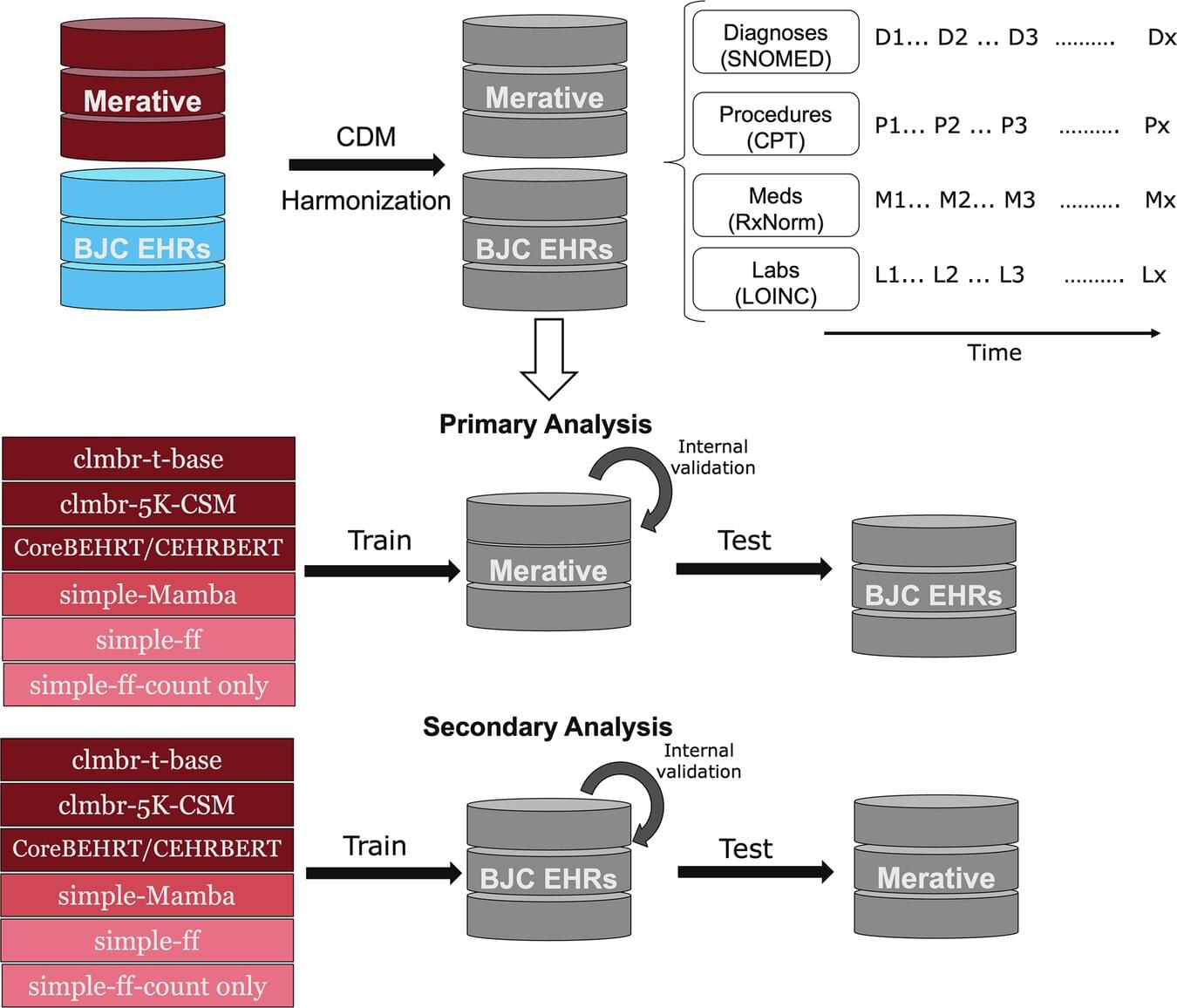

The finding echoes a key idea from modern artificial intelligence. Many AI systems learn by comparing their output to a target, computing an “error” signal, and using it to fine-tune connections within the network. A longstanding question has been whether the brain also uses that kind of individualized feedback. In a study published in the February 25 issue of the journal Nature, MIT researchers report evidence that it does.
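To make the AI side of that comparison concrete, here is a minimal sketch of error-driven learning in Python. It is a toy illustration only, not the method used in the study: it trains a single linear unit with the delta rule, the one-layer special case that backpropagation extends to deeper networks. The function name `train`, the learning rate, and the example data are all assumptions chosen for illustration.

```python
# Toy illustration of error-driven learning (delta rule for one linear unit).
# Hypothetical names and data; this is not the procedure from the study.

def train(inputs, targets, lr=0.1, epochs=200):
    """Adjust one weight per input connection using an error signal."""
    weights = [0.0] * len(inputs[0])
    for _ in range(epochs):
        for x, target in zip(inputs, targets):
            # Forward pass: the unit's output is a weighted sum of its inputs.
            output = sum(w * xi for w, xi in zip(weights, x))
            # Error signal: how far the output is from the target.
            error = output - target
            # Each connection gets its own correction, scaled by its input,
            # so connections that contributed more to the error change more.
            weights = [w - lr * error * xi for w, xi in zip(weights, x)]
    return weights


# Example data generated by the "true" weights [2.0, -1.0] (an assumption).
inputs = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
targets = [2.0, -1.0, 1.0]

weights = train(inputs, targets)
print(weights)
```

The point of the sketch is that the correction is individualized: every connection receives its own adjustment derived from the same output error, which is the kind of targeted feedback the researchers looked for in neurons.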

A research team led by Mark Harnett, a McGovern Institute investigator and associate professor in the Department of Brain and Cognitive Sciences at MIT, discovered these instructive signals in mice by training the animals to control the activity of specific neurons using a brain-computer interface (BCI). Their approach, the researchers say, can be used to further study the relationships between artificial neural networks and real brains, in ways expected both to improve understanding of biological learning and to enable better brain-inspired artificial intelligence.