Policy choices will determine whether workers and firms are adequately prepared for the AI revolution

As quantum computing moves closer to large-scale deployment, new research is examining its future energy, water, and material demands.

David McCollum, an Oak Ridge National Laboratory distinguished scientist, is leading the project. McCollum is also a joint faculty professor in the Center for Energy, Transportation, and Environmental Policy (CETEP) at the Howard H. Baker Jr. School of Public Policy and Public Affairs at the University of Tennessee, Knoxville. The work aims to inform the rollout of quantum infrastructure over the coming decades. It examines technologies evolving from experimental environments to commercial-scale use. Quantum computing is expected to unlock advances in drug discovery, material science, artificial intelligence, and cybersecurity.

“Quantum computing presents extraordinary opportunities, from accelerating scientific discovery to solving complex optimization problems,” McCollum said. “At the same time, it introduces new questions about the energy, water, and materials required to operate these systems at scale. Our research aims to get ahead of those questions before resource and supply chain constraints start to bite.”

Among the newly discovered species is the ‘ghost shark’ chimaera, a distant relative of sharks and rays, found in the Coral Sea. Other notable finds include symbiotic worms on volcanic seamounts in Japan and a striking new species of shrimp in Marseille, France. These discoveries highlight the diversity and complexity of life beneath the ocean surface.

Dr. Michelle Taylor, Head of Science at Ocean Census, emphasized the importance of these discoveries, stating, “We are in a race against time to understand and protect ocean life.” The Ocean Census is not only about finding new species but also generating evidence to drive global science and policy.

The discoveries provide crucial data for international agreements like the Biodiversity Beyond National Jurisdiction Treaty and the Kunming-Montreal Global Biodiversity Framework. As the Census continues, its global network and open-access platform, NOVA, will ensure that this critical data informs global decision-making.

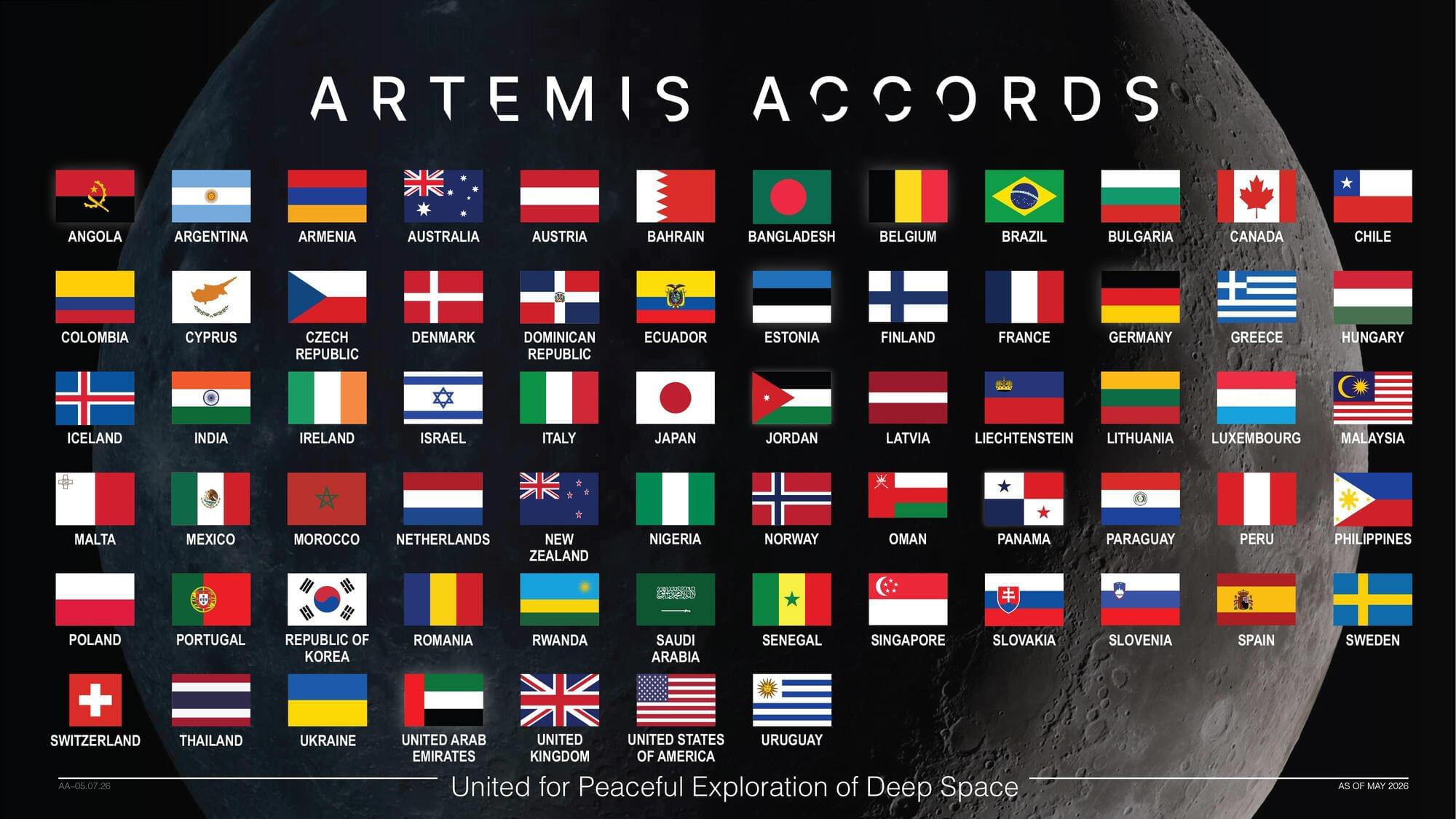

The Republic of Paraguay signed the Artemis Accords on Thursday during a ceremony in Asunción, becoming the latest nation to commit to the shared principles guiding civil space exploration.

“Today, I am proud to welcome Paraguay as the 67th signatory to the Artemis Accords,” said NASA Administrator Jared Isaacman. “They join an ever-growing coalition of like-minded nations committed to the peaceful, transparent, and responsible exploration of space. Established by President Trump in his first term, the Artemis Accords provided the principles for how we explore the Moon, Mars, and beyond. Now, with his national space policy, we are putting the Artemis Accords into practice with our Moon Base. We are creating opportunities for all Artemis Accords signatories, including Paraguay, to join us on the lunar surface and advance our shared objectives in this next era of exploration.”

U.S. Embassy Asunción Chargé d’Affaires ad interim Aaron Pratt shared Isaacman’s remarks during the ceremony. Minister President of the Paraguayan Space Agency Osvaldo Almirón Riveros signed on behalf of Paraguay.

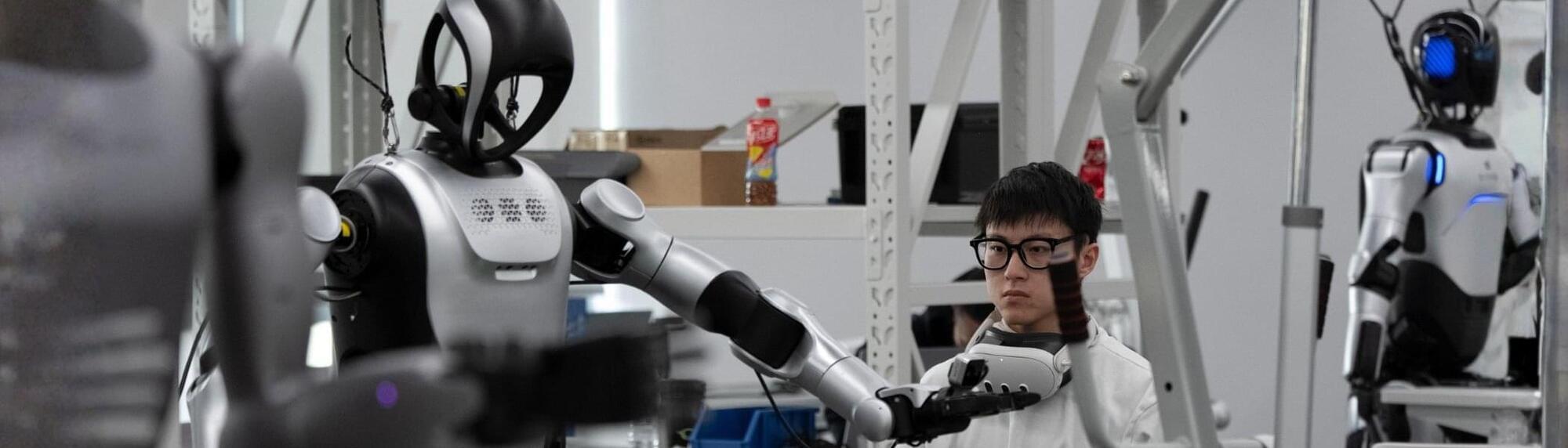

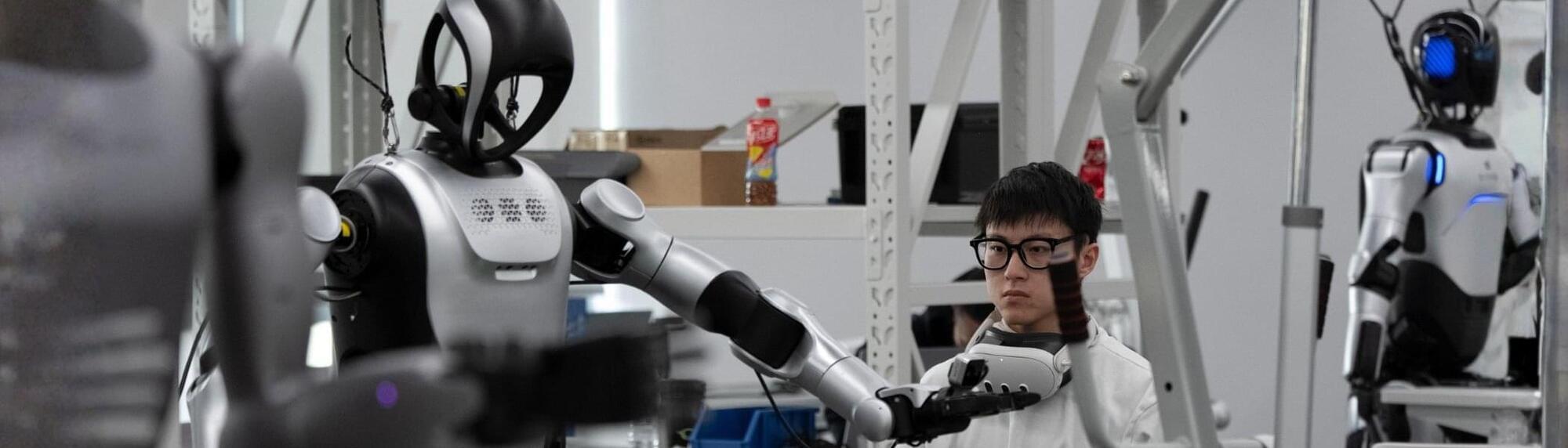

Even the best-trained robots struggle when they leave the lab. They face “distribution shifts”—situations they didn’t see in training, like a brand of cereal with a new box design or a human suddenly walking into their personal space. Static datasets (fixed instructions) simply can’t prepare a robot for every “what if” scenario.

To make sense of all this messy real-world data, the researchers introduced two key technical innovations to the robot’s “Vision-Language-Action” (VLA) brain.

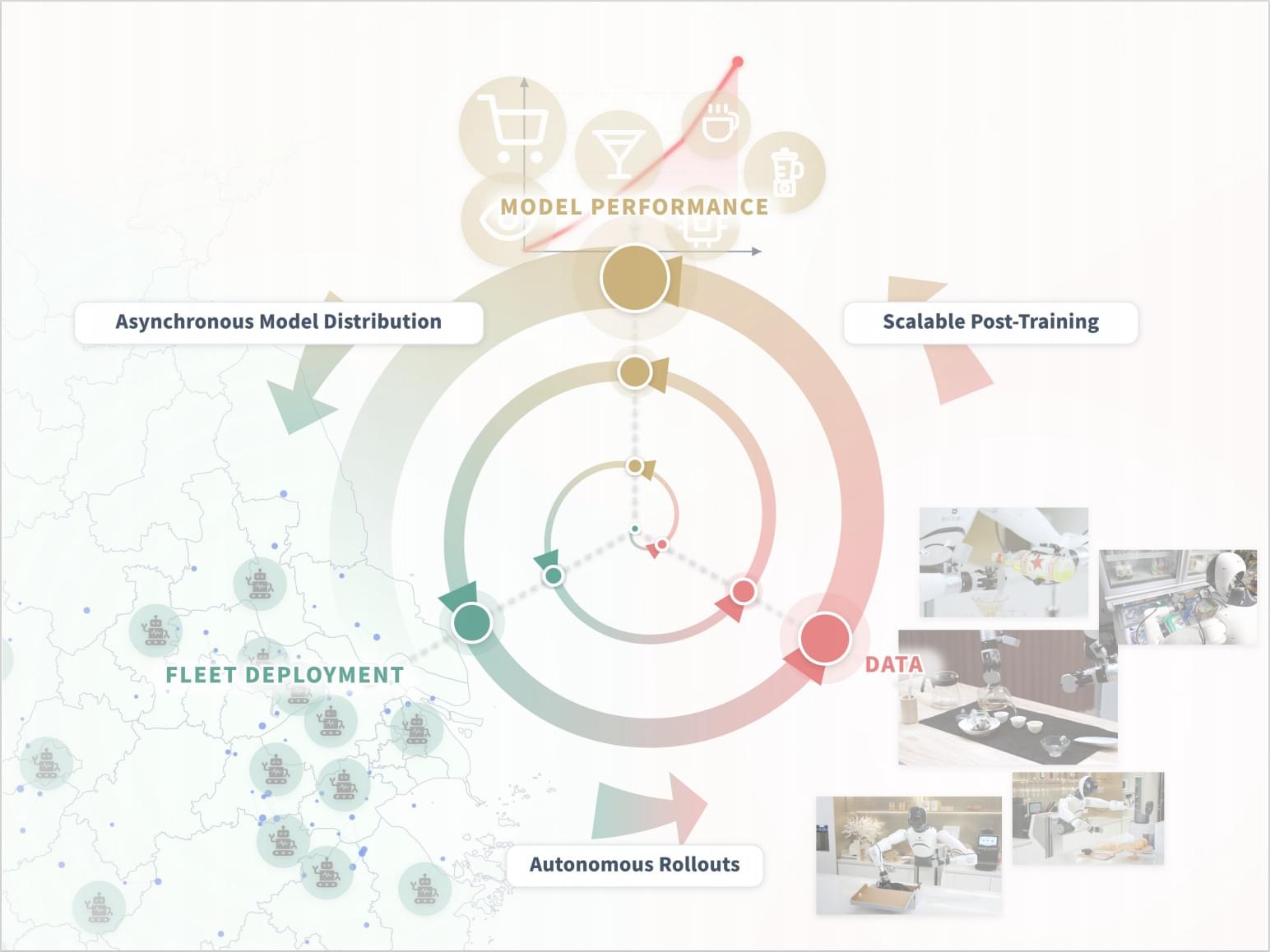

Imagine bringing home a single robot to be your all-in-one kitchen assistant—you want it to brew your morning Gongfu tea, make fresh juice in the afternoon, and mix the perfect cocktail at night. While it might have been trained extensively in a lab, in your house, the counter is slightly higher, the fruit is shaped differently, and your cocktail shaker is transparent. Pre-trained Vision-Language-Action (VLA) models provide an incredible starting point, yet real-world deployment is never a fixed test distribution. This leaves a critical, unsolved challenge: how do we take the heterogeneous experience generated across a fleet of robots and use it to post-train a single, generalist model across a wide range of tasks simultaneously?

We present Learning While Deploying (LWD), a fleet-scale offline-to-online RL framework for continual post-training of generalist VLA policies. Instead of treating deployment as the finish line where a policy is merely evaluated, LWD turns it into a training loop through which the policy improves. A pre-trained policy is deployed across a robot fleet, and both autonomous rollouts and human interventions are aggregated into a shared replay buffer for offline and online updates. The updated policy is then redeployed, enabling continuous improvement by leveraging interaction data from the entire fleet.
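The deploy, aggregate, update, redeploy cycle described above can be sketched as a small simulation. This is only an illustrative toy, not the authors' implementation: the names `Transition`, `ReplayBuffer`, `Policy`, `collect_rollouts`, `update_policy`, and `learning_while_deploying` are all hypothetical, and the single `skill` number stands in for real VLA parameters and a real offline-to-online RL update.

```python
import random
from dataclasses import dataclass, field


@dataclass
class Transition:
    robot_id: int
    task: str
    reward: float
    human_intervention: bool  # True when an operator had to take over


@dataclass
class ReplayBuffer:
    """Shared buffer that pools experience from every robot in the fleet."""
    transitions: list = field(default_factory=list)

    def add(self, batch):
        self.transitions.extend(batch)

    def __len__(self):
        return len(self.transitions)


@dataclass
class Policy:
    version: int = 0
    skill: float = 0.5  # ASSUMPTION: toy stand-in for model parameters


def collect_rollouts(policy, robot_id, task, rng, episodes=4):
    """Simulate one robot running the current policy on one task."""
    batch = []
    for _ in range(episodes):
        success = rng.random() < policy.skill
        # In this toy model a failed episode triggers a human intervention.
        batch.append(Transition(robot_id, task, 1.0 if success else 0.0, not success))
    return batch


def update_policy(policy, buffer, lr=0.2):
    """ASSUMPTION: placeholder update, not an actual RL algorithm.

    The lower the mean reward in the pooled buffer, the larger the step.
    """
    mean_reward = sum(t.reward for t in buffer.transitions) / len(buffer)
    new_skill = policy.skill + lr * (1.0 - mean_reward) * (1.0 - policy.skill)
    return Policy(version=policy.version + 1, skill=new_skill)


def learning_while_deploying(policy, tasks, fleet_size, rounds, seed=0):
    rng = random.Random(seed)
    buffer = ReplayBuffer()
    for _ in range(rounds):
        # 1. Deploy the current policy across the fleet and pool the experience.
        for robot_id in range(fleet_size):
            task = tasks[robot_id % len(tasks)]
            buffer.add(collect_rollouts(policy, robot_id, task, rng))
        # 2. Update from the shared buffer, then redeploy on the next round.
        policy = update_policy(policy, buffer)
    return policy, buffer


if __name__ == "__main__":
    final_policy, shared = learning_while_deploying(
        Policy(), ["tea", "juice", "cocktail"], fleet_size=3, rounds=5
    )
    print(final_policy.version, len(shared), round(final_policy.skill, 3))
```

The point of the sketch is the control flow: one buffer is shared by all robots and tasks, and each round's updated policy is the one deployed in the next round, so deployment itself becomes part of training.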

A Generalist Learns Beyond Demonstrations

Some robot learning systems have explored data flywheels: deploying a policy, collecting new robot data, extracting high-quality behaviors, and training the next policy to imitate them. While this supports scalable improvement, it still treats deployment mainly as a source of expert demonstrations. Prior post-training systems mainly focus on specialist policies, leaving fleet-scale post-training of a single generalist policy across diverse tasks unresolved.

How your biology and environment make your decisions for you, according to Dr. Robert Sapolsky.


Robert Sapolsky, PhD is an author, researcher, and professor of biology, neurology, and neurosurgery at Stanford University. In this interview with Big Think’s Editor-in-Chief, Robert Chapman Smith, Sapolsky discusses the content of his most recent book, “Determined: The Science of Life Without Free Will.”

Being held as a child, growing up in a collectivist culture, or experiencing any sort of brain trauma – among hundreds of other things – can shape your internal biases and ultimately influence the decisions you make. This, explains Sapolsky, means that free will is not – and never has been – real. Even physiological factors like hunger can discreetly influence decision making, as discovered in a study that found judges were more likely to grant parole after they had eaten.

This insight is key to interpreting human behavior, helping not only scientists but also those who aim to improve education systems, mental health research, and even policy making.


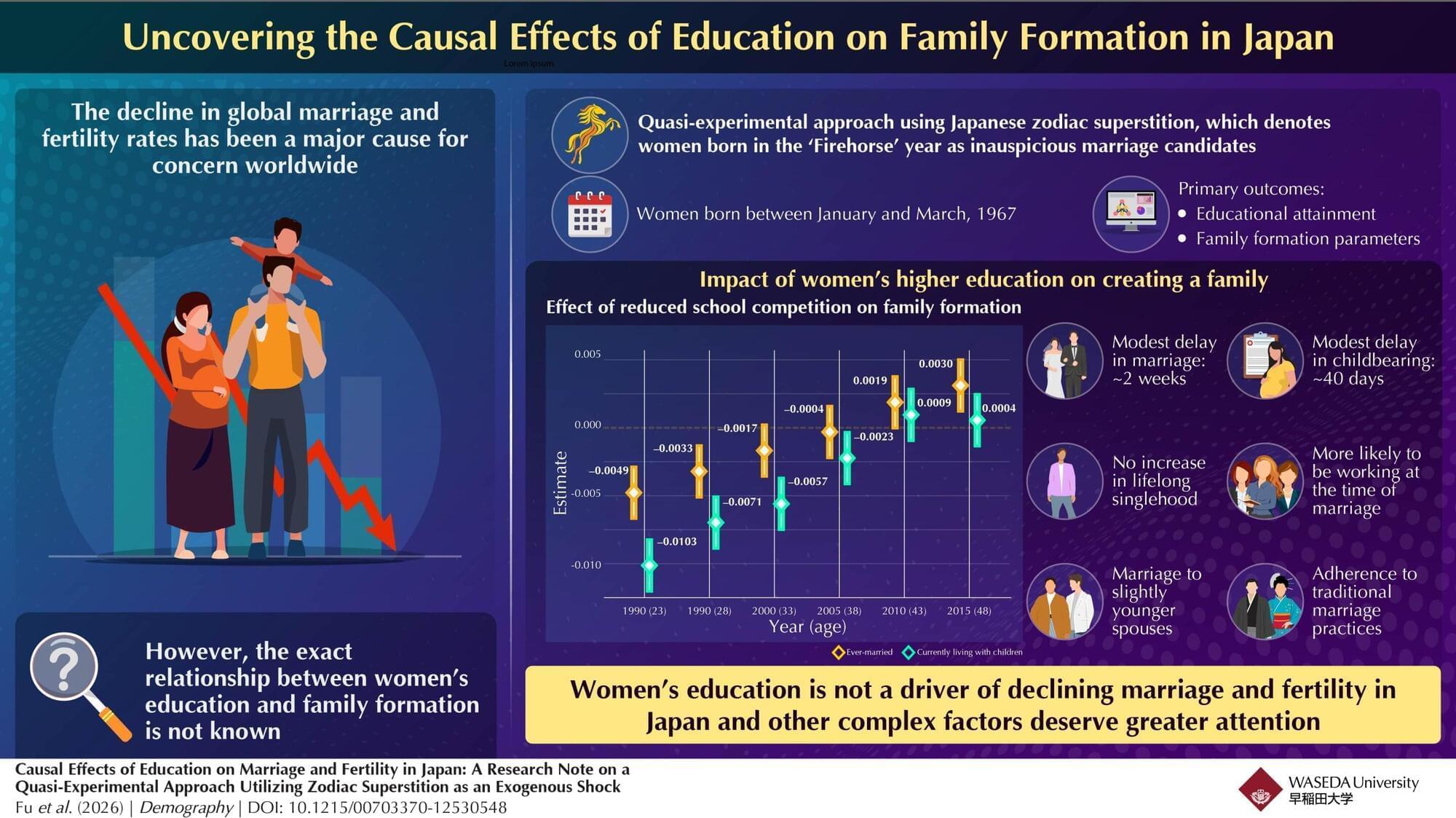

The rapidly declining marriage and fertility rates across developed East Asian societies strain pension and health care systems, threaten economic growth, and reshape entire societies. To tackle this issue, governments in Japan and across East Asia have invested heavily in pronatalist measures, but often with limited success. For instance, Japan’s government has repeatedly expanded childcare subsidies and parental leave provisions, yet the total fertility rate hit a record low of 1.20 in 2024.

A common narrative in media commentary, policy circles, and even within families is that women are “too educated” or “too career-focused” to marry and have children. However, the exact causal relationship between women’s education level and family formation is not well understood.

To fill this knowledge gap, a team of researchers from Japan and Singapore, led by Associate Professor Rong Fu from the Faculty of Commerce, Waseda University, Japan, used a novel quasi-experimental approach to understand the relationship between education, fertility, and marriage in Japan.

Google this week announced a new set of Play policy updates to strengthen user privacy and protect businesses against fraud, even as it revealed it blocked or removed over 8.3 billion ads globally and suspended 24.9 million accounts in 2025.

The new policy updates relate to contact and location permissions in Android, allowing third-party apps to access a user’s contacts and location in a more privacy-friendly manner. This includes a new Contact Picker, which offers a standardized, secure, and searchable interface for contact selection.

“This feature allows users to grant apps access only to the specific contacts they choose, aligning with Android’s commitment to data transparency and minimized permission footprints,” Google said.

Register now for EarthSpace 2026! A discussion of Earth and space on Earth Day, with Frank White, me, and other great guests.

EarthSpace 2026 brings together leaders, thinkers, and builders to explore one core idea: the future of Earth and the future of space are not separate conversations.

From climate solutions to space infrastructure, from policy to culture, the choices we make today will define how humanity lives on this planet—and beyond it.

This is not a passive webinar. It’s a focused, high-signal conversation with people actively shaping the frontier.