Lazarus deployed RemotePE against crypto firms using memory-only malware, enabling stealthy long-term financial intrusions.

If you want to know what the tech world really values right now, just look at what it’s measuring. We used to obsess over lines of code, and then it was all about daily active users and engagement metrics.

But lately, I’ve been watching a pretty profound shift taking place. The industry isn’t just optimizing for headcount or dollars anymore; it’s optimizing for tokens. For the first time, cognitive output has a measurable unit cost, and this little backend metric is quietly becoming the foundational currency of the digital economy.
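To make the idea of a per-token unit cost concrete, here is a minimal sketch of the arithmetic. The prices and the `TokenPrice` and `request_cost` names are hypothetical, chosen only for illustration; they do not come from any real provider's price sheet.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TokenPrice:
    """Hypothetical price sheet, in US dollars per one million tokens."""
    input_per_million: float
    output_per_million: float


def request_cost(price: TokenPrice, input_tokens: int, output_tokens: int) -> float:
    """Return the dollar cost of one request under the given price sheet."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


# Assumed figures, for illustration only.
example_price = TokenPrice(input_per_million=2.0, output_per_million=8.0)
cost = request_cost(example_price, input_tokens=1500, output_tokens=500)
print(f"Cost of one request: ${cost:.4f}")
```

Multiplying a figure like this by request volume is what turns token usage into a budget line rather than a flat subscription fee.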

I just published a new piece diving into how this “token economy” is actually playing out on the ground. Right now, we’re in this wild phase where folks are flexing their token burn rates, and massive players are even trading raw compute power for startup equity.

But this brute-force volume game is just the beginning. We’re moving toward a really fascinating future where AI agents will dynamically negotiate with each other over global compute exchanges, and companies will start managing their processing power like a true financial asset. It’s an entirely new way of thinking about how we build and scale.

Treating AI as just another flat-fee software subscription probably isn’t going to cut it for much longer. The organizations that really thrive in the next decade will be the ones who figure out how to navigate this new intersection of intelligence, energy, and scale.

I put together a deep dive into how compute is becoming the new capital, and what this macroeconomic shift actually means for the rest of us. I’d love to hear your take on it—check out the full post below.

What if every scientific paper you read was just the “highlight reel” of a much longer, messier, and more complicated movie? You see the breakthrough, but you never see the hundreds of hours of footage showing what didn’t work.

Ultimately, the ARA marks a shift toward a future where “The Last Human-Written Paper” isn’t the end of science, but the beginning of a much deeper, machine-readable conversation.

However, this shift toward radical transparency comes with its own hurdles. While ARAs make AI agents more efficient, the study found a “prior-run box” effect: seeing a human’s past failures limited an AI’s ability to find creative new solutions. There is also a significant cultural and technical gap to bridge. The system relies on researchers being willing to expose their “messy” unfinished work, and even with better data, the improvement in actual experiment reproduction was relatively modest. Finally, relying on “compilers” to translate old papers into the new format risks baking in errors or “hallucinations” when the original source was vague. Machine-readable data is powerful, but it isn’t a magic fix for the inherent complexities of scientific discovery.

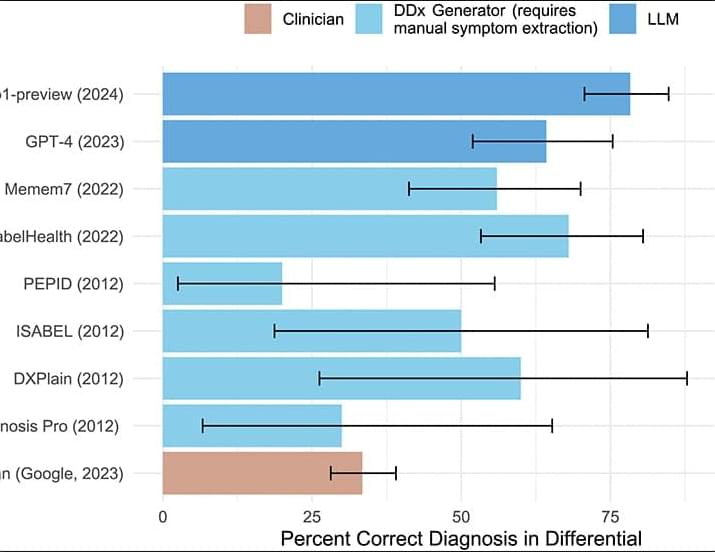

We systematically evaluated the medical reasoning abilities of an LLM across six diverse experiments, comparing the model with hundreds of expert physicians. Overall, the model outperformed physicians across experiments, including in cases utilizing real and unstructured clinical data taken directly from the health record in an emergency department. These diagnostic touchpoints mirror the high-stakes decisions taken in emergency medicine departments, where nurses and clinicians make time-sensitive choices with limited information. Our results showed that humans, GPT-4o, and o1 all improved their diagnostic abilities as more information was available; o1 outperformed humans at multiple touchpoints, with the widest gap at initial ER triage, where there is the least information available.

The rapid pace of improvement in LLMs has substantial implications for the science and practice of clinical medicine. Although applying AI to assist with clinical decision support is sometimes viewed as a high-risk endeavor (22, 23), greater use of these tools might serve to mitigate the human and financial costs of diagnostic error, delay, and lack of access (24, 25). Our findings suggest an urgent need for prospective trials to evaluate these technologies in real-world patient care settings and for health care systems to prepare for investments in computing infrastructure and in the design of clinician-AI interaction that can facilitate the safe integration of AI tools into patient-care workflows. This includes the development of robust monitoring frameworks to oversee the broader implementation of AI clinical decision support systems (22), tracking not just final diagnostic accuracy but other metrics crucial for successful deployment, including safety, efficiency, and cost.

We emphasize that our study addresses only text-based performance for both humans and machines; clinical medicine is multifaceted and awash with nontext inputs, including auditory (such as the patient’s level of distress) and visual information (for example, interpretation of medical imaging studies) that clinicians routinely use. Existing studies suggest that current foundation models are more limited in reasoning over nontext inputs (26, 27); future work is needed to assess how humans and machines may effectively collaborate (28) in use of nontext signals. This requires new benchmarks, trials, and technological solutions to more faithfully measure clinical encounters. Existing investment in increasingly pervasive ambient AI scribes and other passive monitoring technologies holds promise to serve as the basis for such investigations.

A new variant of the ‘SHub’ macOS infostealer uses AppleScript to show a fake security update message and installs a backdoor.

Dubbed Reaper, the new version steals sensitive browser data, collects documents and files that may contain financial details, and hijacks crypto wallet apps.

Unlike earlier SHub campaigns that relied on “ClickFix” tactics, which trick users into pasting and executing commands in Terminal, Reaper uses the applescript:// URL scheme to launch the macOS Script Editor preloaded with a malicious AppleScript.
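For defenders, one simple way to spot this delivery technique is to flag any link that uses the applescript scheme in page or email content. The sketch below is an illustrative assumption, not a method from the researchers' report: the `find_applescript_links` helper, the regular expression, and the sample text are all hypothetical.

```python
import re
from urllib.parse import urlsplit

# Loose pattern for "scheme://rest" candidates; stops at whitespace,
# quotes, and angle brackets so it works on raw HTML or plain text.
URL_CANDIDATE = re.compile(r"[A-Za-z][A-Za-z0-9+.\-]*://[^\s\"'<>]+")


def find_applescript_links(text: str) -> list[str]:
    """Return every URL in `text` whose scheme is applescript."""
    hits = []
    for match in URL_CANDIDATE.finditer(text):
        url = match.group(0)
        # urlsplit normalizes the scheme to lowercase.
        if urlsplit(url).scheme == "applescript":
            hits.append(url)
    return hits


# Made-up sample content with a harmless placeholder script.
sample = (
    'See https://example.com or '
    '<a href="applescript://com.apple.scripteditor?action=new'
    '&script=display%20dialog">Install update</a>'
)
for link in find_applescript_links(sample):
    print("Suspicious link:", link)
```

A check like this only surfaces candidates for review; it says nothing about whether the preloaded script is actually malicious.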

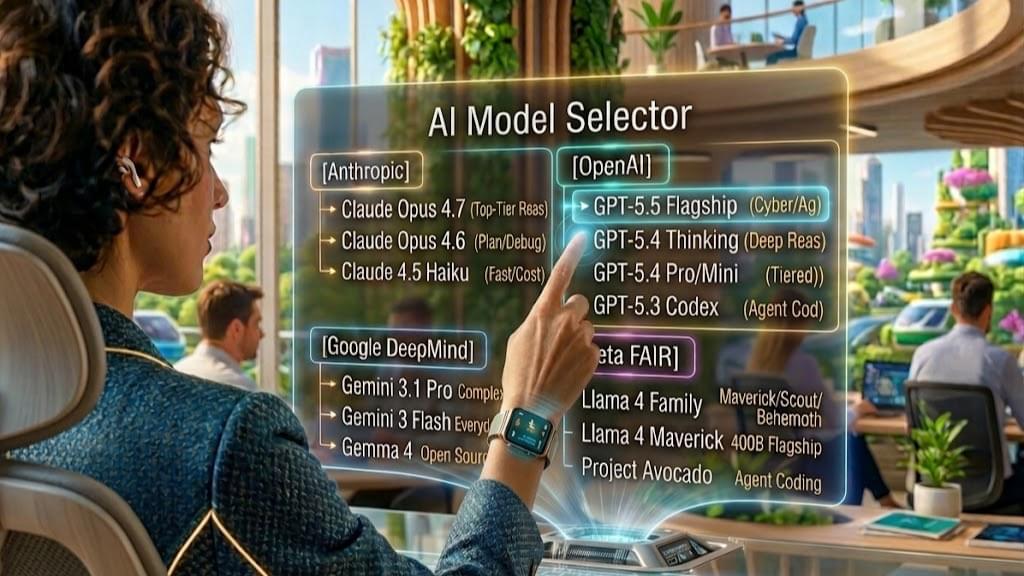

Everyone is currently watching the major tech giants throw billions of dollars at the AI arms race, cheering for whichever foundation model happens to top the leaderboards this week.

It is an incredible spectacle to watch unfold, but focusing too closely on the tech itself might mean we are missing the actual business revolution happening right under our noses.

We have seen this exact economic shift before. The biggest winners of the internet era weren’t the ones who built the physical infrastructure or supplied the goods; they were the platforms that organized the supply and owned the user relationship. The same economic laws are now coming for artificial intelligence, actively turning “intelligence” into a basic, interchangeable utility.

The real value moving forward is no longer in the models themselves, but in the seamless interfaces that aggregate them. If you want to protect your business from vendor lock-in and position your team for ultimate flexibility, it is time to rethink your approach.

Read my full blog post to dive into why the future of AI belongs to the aggregators, and how your business can strategically capitalize on this shift.

We spend an enormous amount of time obsessing over the titans of the AI arms race. Every single week seems to bring a breathless new headline about OpenAI, Google, Anthropic, or Meta releasing a foundation model that edges out the competition on some obscure benchmark. We find ourselves endlessly arguing over parameter counts, context windows, and raw reasoning capabilities, captivated by a multi-billion-dollar war unfolding in real time.

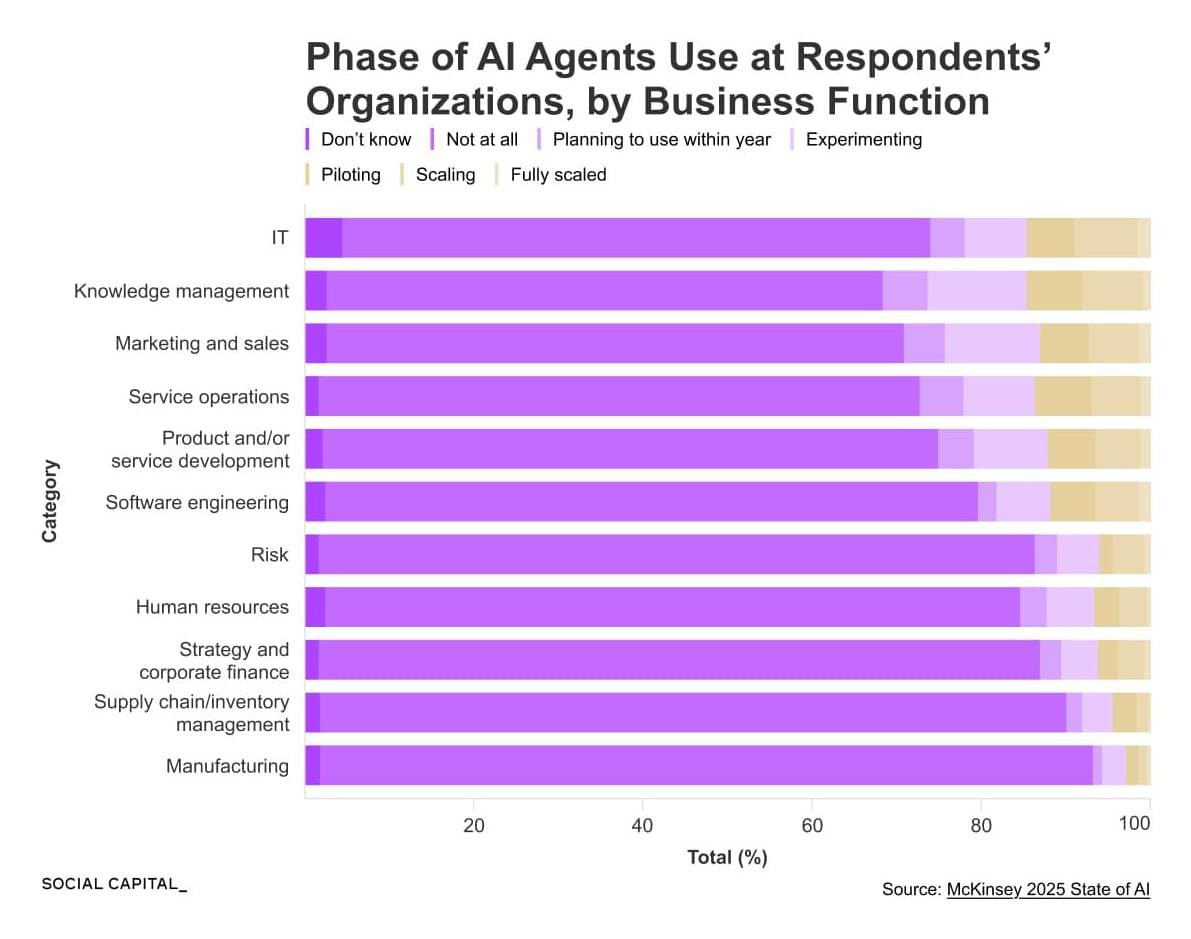

The Moat: The moat is no longer how smart your AI is; it’s what your AI is allowed to touch. An agent that has “Write Access” to a company’s internal financial system or a medical record database is 100x more valuable than a “smart” chatbot that can only read public websites. Connectivity is the new Intellectual Property.

In the agentic economy, the most valuable human skill isn’t “coding” or “writing”—it is Agentic Orchestration.

The agentic economy thrives on Data Flywheels. As an agent performs a task (e.g., “Review this legal contract”), it gets human feedback (“This clause was too aggressive”). That feedback isn’t just a correction; it’s training data that makes the agent more valuable for the next task. This creates a winner-take-all dynamic for whoever has the most active agents in a specific niche.

We are moving toward an outcome-based economy. However, the real “gold rush” isn’t in building the smartest AI; it’s in building the safest and most connected AI—the one that humans trust enough to give the “keys” to their bank accounts, their calendars, and their businesses.

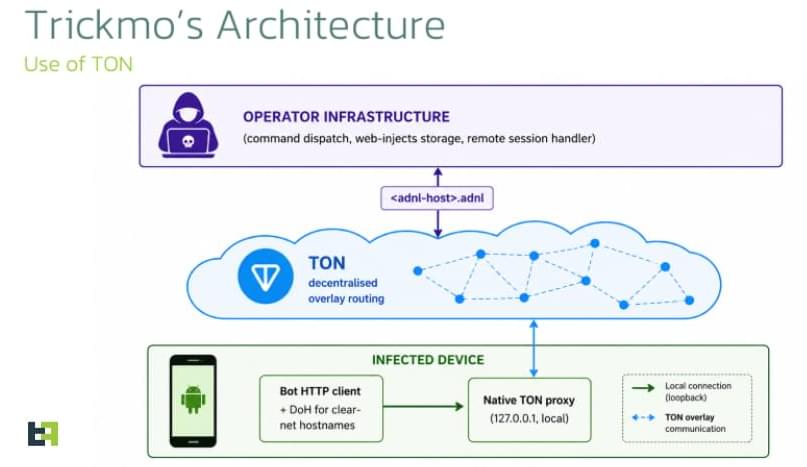

A new variant of the TrickMo Android banking malware, delivered in campaigns targeting users across Europe, introduces new commands and uses The Open Network (TON) for stealthy command-and-control communications.

The TrickMo banker was first spotted in September 2019 and has been under active development ever since.

In October 2024, Zimperium analyzed 40 variants of the malware delivered via 16 droppers, communicating with 22 distinct command-and-control (C2) infrastructures, and targeting sensitive data belonging to users worldwide.

Cybersecurity researchers have discovered fraudulent apps on the official Google Play Store for Android that falsely claimed to offer access to call histories for any phone number, but instead tricked users into paying for a subscription that returned fake data, causing financial loss.

The 28 apps have collectively racked up more than 7.3 million downloads, with one of them alone accounting for over 3 million downloads, before they were taken down from the official app storefront. The activity, codenamed CallPhantom by Slovakian cybersecurity company ESET, primarily targeted Android users in India and the broader Asia-Pacific region.

“The offending apps, which we named CallPhantom based on their false claims, purport to provide access to call histories, SMS records, and even WhatsApp call logs for any phone number,” ESET security researcher Lukáš Štefanko said in a report shared with The Hacker News. “To unlock this supposed feature, users are asked to pay — but all they get in return is randomly generated data.”