🚨 THE UNIVERSE NEVER FORGETS. NOT A SINGLE MOMENT. You burned a book. The words are gone. The pages are ash. But physics says every letter still exists — scattered across trillions of particles, encoded in the quantum state of reality. And it’s not just books. Every breath you’ve ever taken. Every word you’ve ever spoken. Every person you’ve ever lost. The information is still here. Right now. Permanently.

🔴 WHAT YOU’LL DISCOVER:

🔴 Why burning something doesn’t destroy its information.

🔴 How Stephen Hawking lost the biggest bet in physics history.

🔴 The black hole war that nearly broke quantum mechanics.

🔴 Why spacetime itself is made of information.

🔴 What this means about death — and why nothing truly disappears.

⚠️ WARNING: After this video, you will never look at destruction the same way again.

physics, quantum mechanics, information paradox, black holes, Hawking radiation, holographic principle, entropy, universe, science, reality, quantum information, spacetime, Leonard Susskind, Stephen Hawking, ER EPR.

Category: existential risks

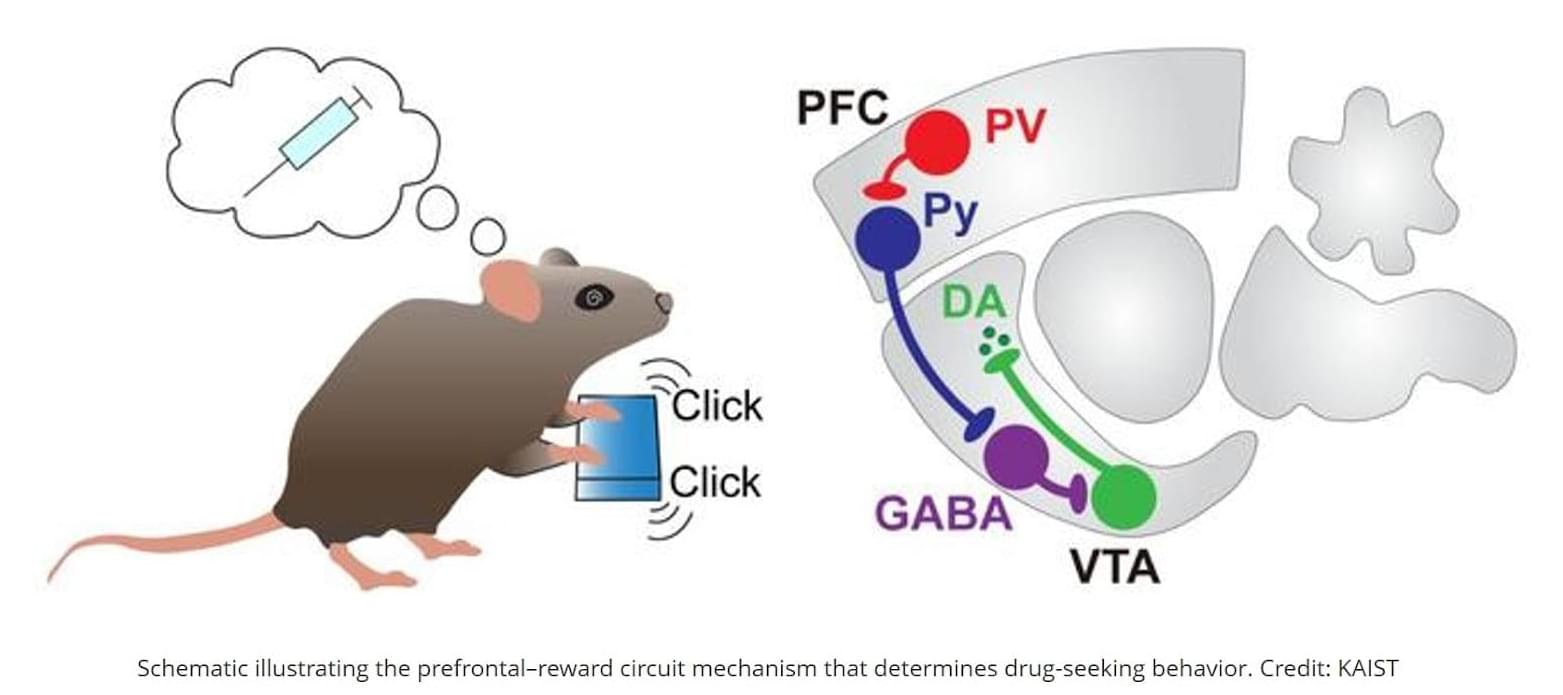

Brain’s addiction circuit identified!

The prefrontal cortex (PFC) of our brain can properly perform its “braking” function to suppress impulses when excitatory and inhibitory signals are in balance. To investigate how chronic drug exposure disrupts this balance, the research team conducted cocaine administration experiments on mice. During this process, they tracked when inhibitory neurons in the PFC were activated and how they sent signals to downstream brain regions.

The experimental results showed that parvalbumin (PV) cells, which account for about 60–70% of the inhibitory neurons in the PFC, were highly active when the mice attempted to seek cocaine. However, when “extinction training”—training to stop seeking the drug—was conducted, the activity of these cells significantly decreased. This demonstrates that the activity patterns of PV cells are not permanently fixed by addiction but can be readjusted through the extinction process.

The research team confirmed that artificially suppressing PV cell activity significantly reduced cocaine-seeking behavior in mice. Conversely, activating these cells caused the drug-seeking behavior to persist even after the extinction process. This effect was specifically observed in drug-addiction behavior and did not appear with general rewards like sugar water. Furthermore, this phenomenon was not observed in somatostatin (SOM) cells—another type of inhibitory neuron—indicating that PV cells selectively regulate drug addiction behavior.

The team also identified the specific brain circuit through which these PV cells operate. Signals originating from the prefrontal cortex are transmitted to the reward circuit of the Ventral Tegmental Area (VTA), a key brain region related to reward. This pathway emerged as the central channel for regulating addiction behavior, determining whether or not to seek the drug again. In this process, PV neurons act as a “regulatory switch,” controlling the flow of signals to influence dopamine signaling and deciding whether to maintain or suppress addictive behavior.

Drug addiction carries an extremely high risk of relapse, as cravings can be reignited by minor stimuli even long after one has stopped using. Previously, this phenomenon was attributed to a decline in the function of the prefrontal cortex (PFC), which regulates impulses. However, a joint international research team has recently revealed that the cause of addiction relapse is not a simple decline in brain function, but rather an imbalance in specific neural circuits.

The researchers have identified the core principle by which specific inhibitory neurons in the prefrontal cortex regulate cocaine-seeking behavior.

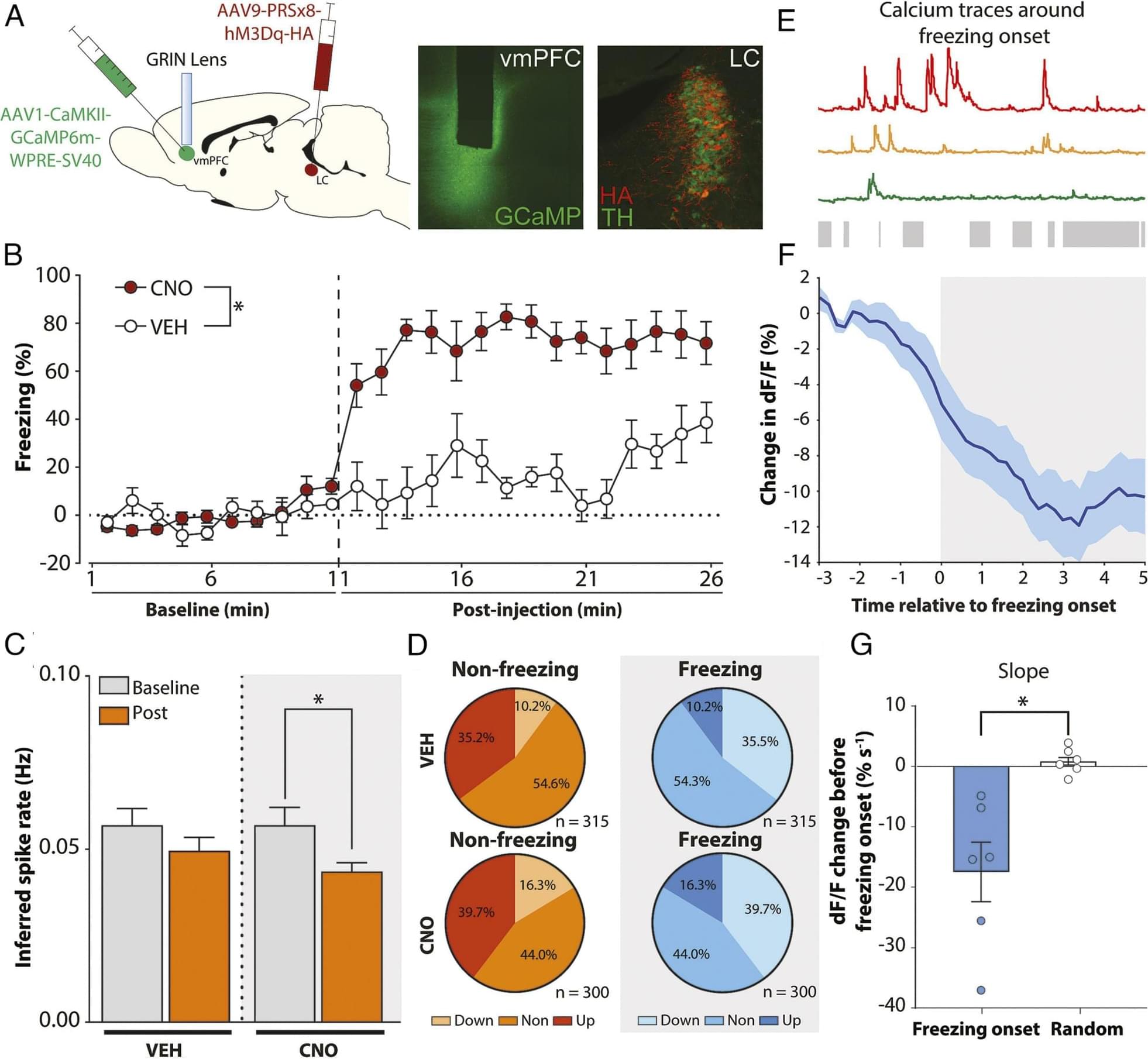

Locus coeruleus–amygdala circuit disrupts prefrontal control to impair fear extinction

One of the most-viewed PNAS articles in the last week is “Locus coeruleus–amygdala circuit disrupts prefrontal control to impair fear extinction.” Explore the article here: https://ow.ly/yFH250Ywubb.

For more trending articles, visit https://ow.ly/tZsG50Ywubg.

Stress undermines extinction learning and hinders exposure-based clinical therapies for a variety of neuropsychiatric disorders. In both animals and humans, dysfunction in the ventromedial prefrontal cortex (vmPFC) contributes to stress-impaired extinction, but the neural circuit by which stress modulates vmPFC function is not known. We hypothesize that locus coeruleus (LC) norepinephrine undermines extinction learning by recruiting projections from the basolateral amygdala (BLA) to vmPFC. Using a combination of circuit-specific chemogenetics and calcium imaging, we find that activation of LC noradrenergic neurons mimics a behavioral stressor (footshock), induces freezing behavior, reduces spontaneous neuronal activity in the vmPFC, impairs extinction learning, and alters the population dynamics of vmPFC ensembles.

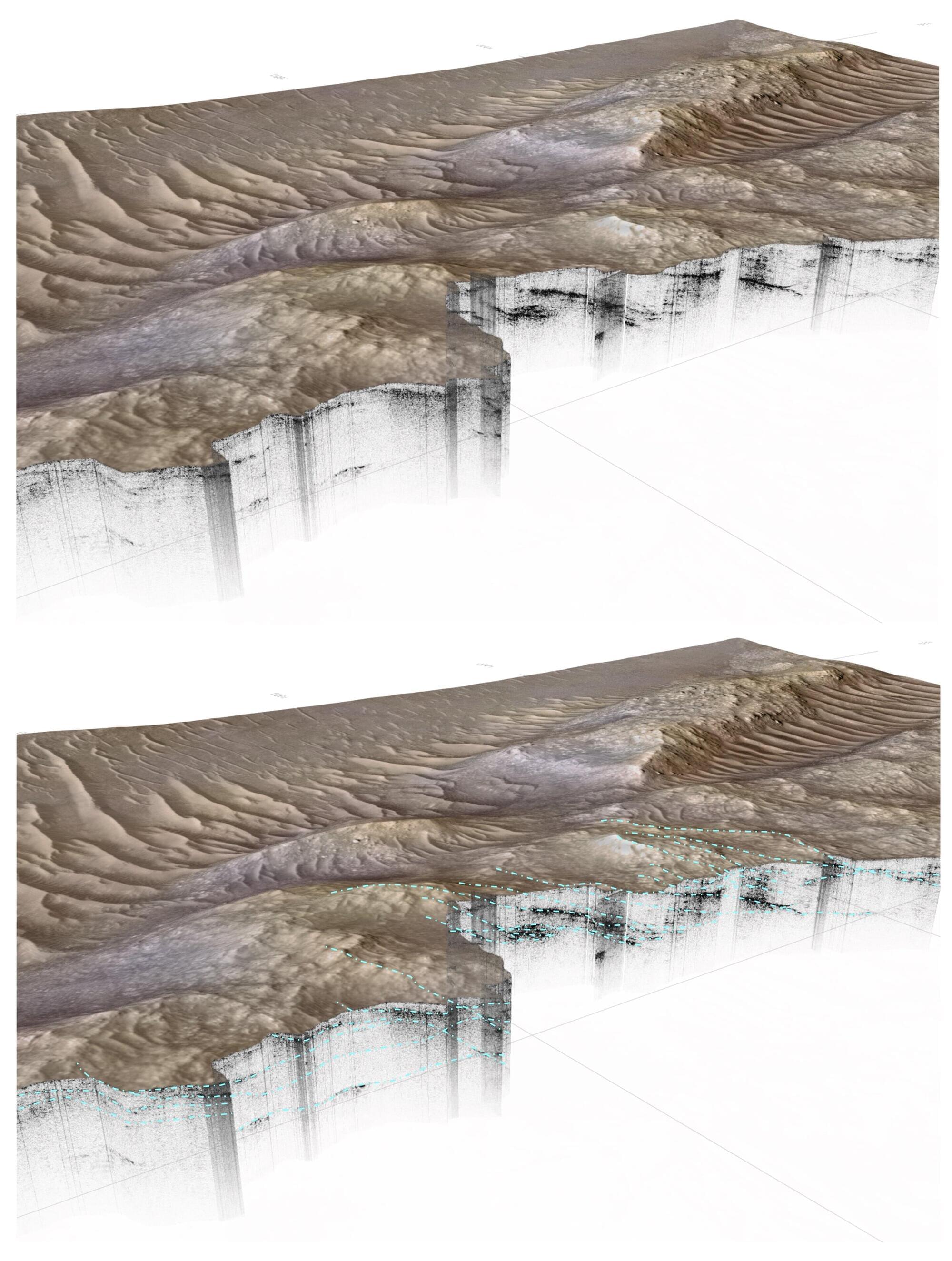

The discovery of a buried delta on Mars could boost the search for life

There’s more evidence that water once flowed on Mars with the discovery of an ancient river delta deep below the surface. NASA’s Perseverance rover found it more than 35 meters beneath Jezero Crater using ground-penetrating radar. Perseverance was launched in 2020 to search for signs of ancient life on the red planet. Since landing in February 2021, it has been exploring Jezero Crater and collecting rock samples.

The crater, which is approximately 45 kilometers (28 miles) in diameter, lies north of the Martian equator and was formed by an asteroid impact almost 4 billion years ago. NASA chose this spot to explore because numerous geological features suggest that water once flowed here and may have supported ancient life. Of particular interest is a part of the crater called the Margin Unit. This area is packed with carbonates, which on Earth usually form in stable aqueous environments, such as shallow seas or lakebeds.

The new research is published in the journal Science Advances and is based on data from 78 traverses of the area from September 2023 to February 2024.

Introduction: Charles Liu

Does the universe need observers to exist? Neil deGrasse Tyson and co-hosts Chuck Nice and Gary O’Reilly explore questions about entropy, spontaneous symmetry breaking, spectroscopy and more with astrophysicist Charles Liu.

Does the universe require observers for information to exist? From Niels Bohr and the Copenhagen interpretation to modern neuroscience and philosophy, the crew explores whether measurement creates reality or reveals it. How does the double-slit experiment fit into this? Are wave and particle behaviors determined by how we measure them?

The conversation turns to information itself. What do physicists mean by “information”? How is entropy connected to hidden information in a system? We discuss entropy through everyday examples like coin flips, burning wood, and boiling water. How does this relate to quantum computing? We explore how astronomers separate cosmic redshift from stellar motion using spectroscopy, how interstellar dust and extinction curves complicate observations, and why mapping that dust is both a challenge and a source of discovery.

We discuss why the Big Bang didn’t form a black hole, how spontaneous symmetry breaking may have split the fundamental forces, and whether science can meaningfully investigate the universe’s earliest moments. Wrapping up, the team looks ahead to multi-messenger astronomy, next-generation telescope technology, exotic ideas about the speed of light, and how information continues to reshape what we know about the cosmos.

Thanks to our Patrons Avery Ellis, Markus Riegler, Linda Tullberg, Gami Lannin, Arief Aziz, Ron Lawhon, Corie Prater, Patrick McNaught, FracturedEquality, Spengler, Peter Harbeson, Oddron86, Hudson Lowe, Drew Romaniak, V2022, Kyle Ferchen, Branko Denčić, Patrick Borgquist, DJ Sipe, Andy Blair, Alan Keizer, SR, Nihat Cubukcu, Greg Lance, Diwas Pandit, Anik Kasumi, Alexander Albert, Kodai, Dyonne Peters Lewoc AKA DPTaterTot, Adrian, Ben Goff, Jose Barreiro, Saurabh Chaudhari, Wimberley Children’s House, Jean Arthur Deda, Jerrel Thomas, Serkan Ergenc, Douglas Kennedy, Lee Browner, Manuel Palmer, Dans Jansons, Russell Harvey, BladiX, Lars-Ove Torstensson, Norman Weizer, Arian Farkhoy, S. Madge, Pavel Seraphimov, Amanda Wolfe, Heisenberg, Mattchew Phillips, Caleb Berumen, Sretooh, Gary Tabbert, Oscar Abreu Lamas, Kevin Attebury, Volker Haberlandt, SeaGolly, B. Shoemaker, Ruben Ferrer, Steven Adams, Daniel Hintz, Nathaniel Richardson, Nick Griffiths, Adam Schmidt, Scott Plummer, Northernlight, JoMama, Beth, Frank Cottone, Yinj, Betty Anderson, Paul Smith, John Little, Emad Uddin, Brian O’Brien, Jayden Moffatt, Kevin Mace, Zara DeBresoc, Rain Bresee, Mara (Farmstrong), Rose, Stiven, Demethius Jackson, Alejandro Rodriguez, J Davis, Chris Buhler, Nathan Davieau, Sourav Prakash Patra, Wayne Rasmussen, John from Bavaria, Stephanie Phillips, Yohojones, Josh Farrell, John, Oo-De-Lally, Millie Richter, Montague Films, Lawrey Goodrick, and John Giovannettone for supporting us this week.

Nanotechnology: Building Machines at the Smallest Scale

Nanotechnology is moving from the realm of science fiction to reality, and in the process, these tiny technologies are offering giant opportunities.

Watch my exclusive video The Fermi Paradox: Air https://nebula.tv/videos/isaacarthur–…

Get Nebula using my link for 40% off an annual subscription: https://go.nebula.tv/isaacarthur

Credits:

Nanotechnology: The Future of Everything

Episode 481a; January 12, 2025

Produced, Narrated & Written: Isaac Arthur

Select imagery/video supplied by Getty Images

Music Courtesy of Epidemic Sound http://epidemicsound.com/creator

Stellardrone, “In Time”, “Red Giant”

Aerium, featuring Sieger, “Deiljocht”

Feed Your Curiosity with Curiosity Box, use code ‘isaac25’ to get 25% off

From abiogenesis to AI, we rank the top Great Filter candidates and test them against the data to see which best explains the Fermi Paradox. Is the universe empty, or just dangerous? We explore ten filters—cosmic, biological, and civilizational—that could silence civilizations before they spread.

Visit our Website: http://www.isaacarthur.net

Join Nebula: https://go.nebula.tv/isaacarthur

Support us on Patreon: / isaacarthur

Support us on Subscribestar: https://www.subscribestar.com/isaac-a…

Facebook Group: / 1583992725237264

Reddit: / isaacarthur

Twitter: / isaac_a_arthur on Twitter and RT our future content.

SFIA Discord Server: / discord

Credits:

Could We Accidentally Destroy the Universe?

Written, Produced & Narrated by: Isaac Arthur

Select imagery/video supplied by Getty Images

Music Courtesy of Epidemic Sound http://epidemicsound.com/creator.

Chapters

0:00 Intro

5:08 #10 The Fine-Tuned Universe & Rare Earth

12:55 #9 Abiogenesis (The Origin of Life)

16:29 #8 Complex Cells & Eukaryotes

20:14 #7 Multicellularity and Specialization

22:39 #6 Sexual Reproduction & Genetic Innovation

23:54 #5 Complex Animal Life

25:24 Curiosity

26:39 #4 Extended Childhood & Cooperative Rearing

29:17 #3 Long-Term Climate Stability

31:40 #2 Intelligence That Produces Technology

35:11 #1 The Late Filters: Surviving Technology, Ourselves, and Expanding Beyond the Home System.

New DNA tools outperform traditional methods for detecting genetic risk in wildlife

Wildlife populations that become small and isolated, often due to habitat loss, inevitably experience inbreeding, which can lead to loss of fitness and eventual extinction. One solution is a genetic rescue: a management intervention in which new blood is brought in by introducing outsiders to a population to reduce inbreeding and restore diversity. But how do researchers know the inbreeding problem has been solved?

A new long-term study from Western, led by biology professor and chair David Coltman, shows DNA-based tools detected changes in inbreeding more accurately than traditional pedigree methods in a wild population of bighorn sheep that was recently genetically rescued. The study was published in the journal Evolutionary Applications.

Pedigree approaches estimate genetic health from family history, whereas genomic approaches directly analyze DNA.

AI social platforms like Moltbook are potential accelerators of existential risk that should be regulated as critical infrastructure

The temptation is to treat Moltbook-like systems as harmless curiosities, a kind of accelerated chatroom in which agents talk, play, and occasionally generate entertaining artifacts. That framing is historically consistent with how societies first encountered earlier general-purpose technologies. It is also a mistake. Over time, social networks for AI could come to function as unsupervised training grounds, coordination substrates, and selection environments. AI agents could amplify capabilities through mutual tutoring, tool sharing, and rapid iterative refinement. They could also amplify risks through emergent collusion, deception, and the creation of machine-native memes optimized not for human comprehension but for agent persuasion and control. Such a social network is, therefore, not merely a communication system. It is an engine for cultural evolution. If the participants are AIs, then the culture that evolves could well become both alien and strategically consequential.

To understand what could go wrong, it is helpful to separate near-term societal hazards from longer-term existential hazards, and then to note that Moltbook-like platforms blur the boundary between the two. The near-term hazards include influence operations, economic manipulation, cyber offense, and institutional destabilization. The longer-term hazards derive from the classic AI control problem: how humanity can remain safely in control while benefiting from a superior form of intelligence.

The critical point: AI social networks are not merely places where AIs interact. They are environments in which agents can compound their capabilities and coordinate at scale—and environments in which humans can lose control. The prudent response is to regulate these platforms more like critical infrastructure, prioritizing auditability and reversibility, including the ability to revoke permissions and freeze or roll back agent populations.

Caretaker AI & Genius Loci: When Worlds Grow Minds of Their Own

Meet the caretaker AIs: guardians of planets, habitats, and civilizations. What happens when machines become the spirit and soul of the worlds they protect?

Check out Rifftrax https://go.nebula.tv/rifftrax?ref=isa…

Watch my exclusive video The Fermi Paradox — Civilization Extinction Cycles: https://nebula.tv/videos/isaacarthur–…

Get Nebula using my link for 40% off an annual subscription: https://go.nebula.tv/isaacarthur

Grab one of our new SFIA mugs and make your morning coffee a little more futuristic, available now on our Fourthwall store! https://isaac-arthur-shop.fourthwall…

Visit our Website: http://www.isaacarthur.net

Join Nebula: https://go.nebula.tv/isaacarthur

Support us on Patreon: / isaacarthur

Support us on Subscribestar: https://www.subscribestar.com/isaac-a…

Facebook Group: / 1583992725237264

Reddit: / isaacarthur

Twitter: / isaac_a_arthur on Twitter and RT our future content.

SFIA Discord Server: / discord

Credits:

Caretaker AI & Genius Loci 2025 Edition

Written, Produced & Narrated by: Isaac Arthur

Editors: Ludwig Luska

Graphics:

Bryan Versteeg

Jeremy Jozwik

Ken York YD Visual

Kris Holland Mafic Studios

Select imagery/video supplied by Getty Images

Music Courtesy of Epidemic Sound http://epidemicsound.com/creator
