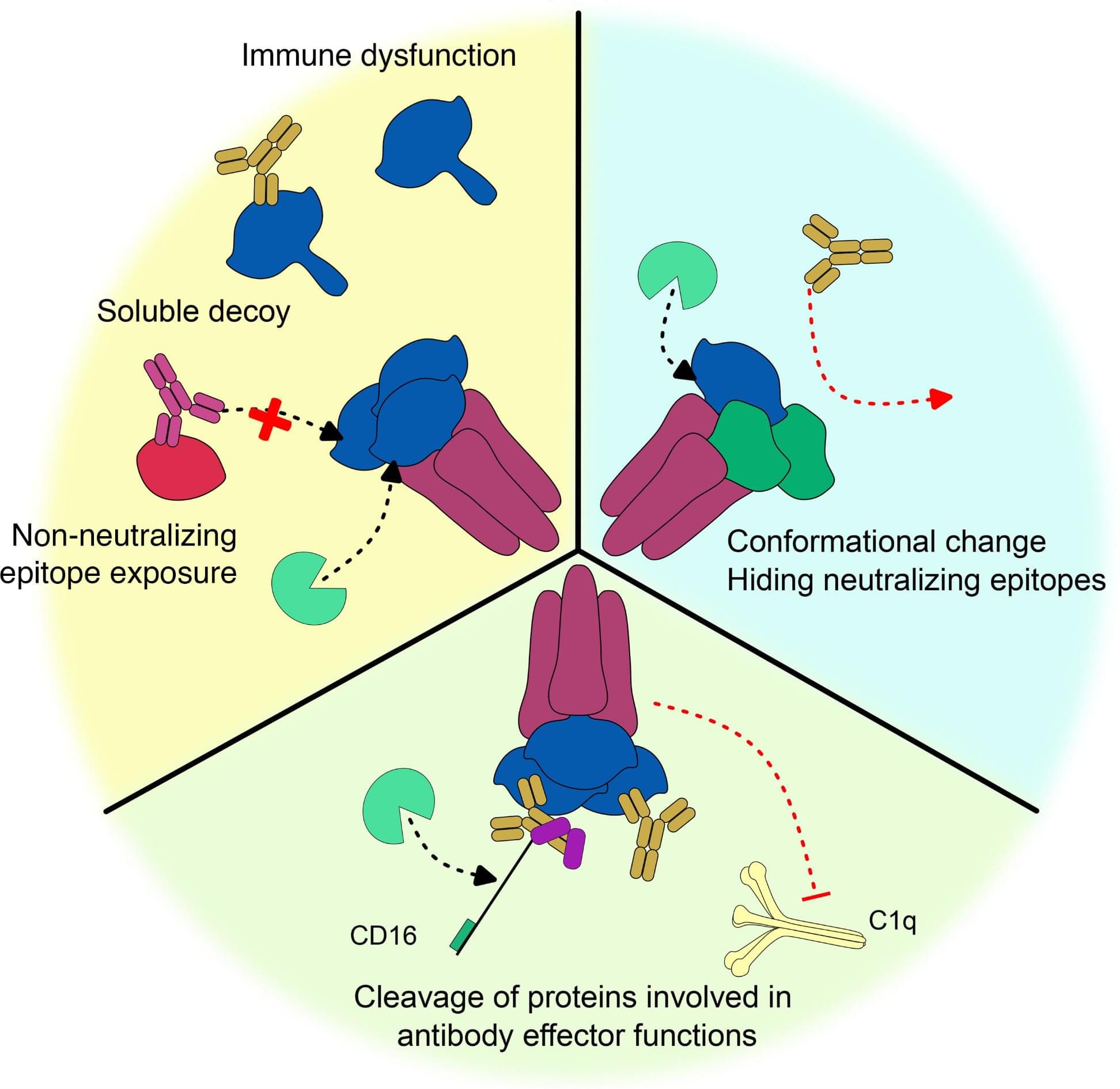

Microbiology Monday: Antibodies play a key role in clearing viruses from the body, but viruses have many ways to evade them. For instance, viral infections can hijack host proteases to reduce antibody effectiveness. Among other mechanisms, these proteases lop off viral antigens expressed on host cell membranes, creating soluble decoys that bind antibodies and hinder their neutralizing power. Learn more in JVirology.

Viruses and their hosts have been co-evolving in a continual arms race for fitness and survival, respectively (1). In humans, the innate and adaptive arms of immunity interact intimately to control infection. Antibodies (Abs), secreted by certain activated B cells, are an essential part of the adaptive immune response and a major pillar in the clearance of enveloped viruses as well as some non-enveloped viruses (1–5). Certain antibodies can bind viral epitopes through their fragment antigen-binding region and, by a variety of mechanisms (e.g., steric obstruction or conformational change), neutralize the target antigen (4).

Antibodies are also a bridge between the adaptive and innate immune responses. Through their fragment crystallizable (Fc) region, antibodies bind to either activators of the complement system or Fc receptors (FcRs) on effector cells, inducing the so-called antibody “effector” or “non-neutralizing” functions, such as complement-mediated cytotoxicity, antibody-dependent cellular cytotoxicity (ADCC), or antibody-dependent cellular phagocytosis (6–8). Together, neutralization and the induction of effector functions establish antibodies as correlates of protection across many infections (9–11) and place them at the center of vaccine and therapeutic monoclonal antibody design (2–4, 12).

Apart from complement activation, induction of effector functions depends on the formation of an immune synapse between an antibody-coated target and an effector cell. Overall, this immune synapse depends on Ab density on the target membrane, factors within the effector cells (adhesion molecules, signaling molecules, or cofactors such as NKG2D on NK cells), and conditioning by the microenvironment (cytokines, pH, etc.). For complement, completion of the cascade and elimination of viruses and/or infected cells depend on the initial hexamerization of antibody Fc regions on the target surface and on the presence and activity of several inhibitory factors within the cascade (7, 11).