What if the thermal noise that hinders the efficiency of both classical and quantum computers could, instead, be used as a power source? What if computers could make use of the noise instead of suppressing or overcoming it? These are the goals of a relatively new branch of computing known as thermodynamic computing. A collaboration between researchers at the Molecular Foundry and the National Energy Research Scientific Computing Center (NERSC), both U.S. Department of Energy (DOE) user facilities located at Lawrence Berkeley National Laboratory (Berkeley Lab), is bringing them closer to reality.

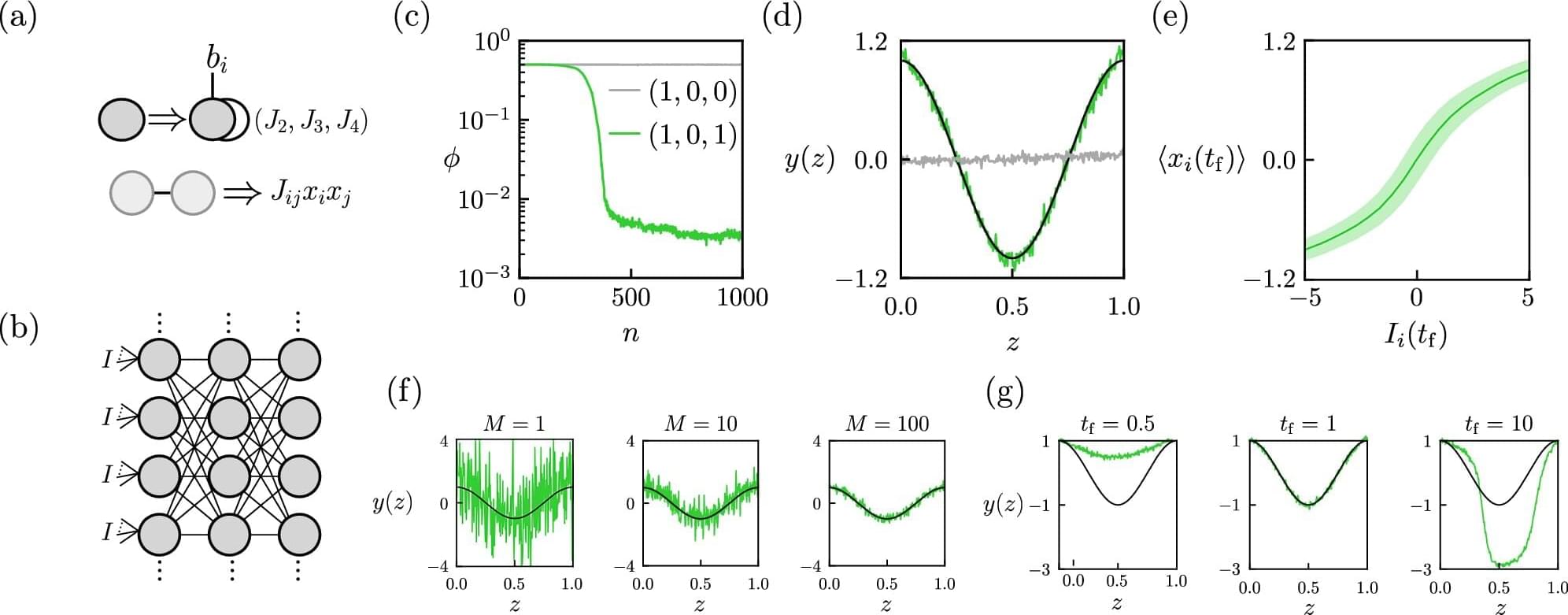

In a paper published in Nature Communications, the researchers have proposed a design and training framework for a type of thermodynamic computer that mimics a neural network, which could drastically reduce the energy requirements of machine learning.

Modern computing requires energy: a single Google search, for example, consumes enough energy to power a six-watt LED for three minutes. This is partly because computers must contend with thermal noise, the vibration of charge carriers (mostly electrons) within electrically conductive materials. In classical computers, even the smallest devices, such as transistors and gates, operate at energy scales thousands of times larger than the energy of this vibration.