@CircRes Compendium on Migration of Mitochondria Beyond Cell Boundary.

Authored by Drs. Rapushi & colleagues.

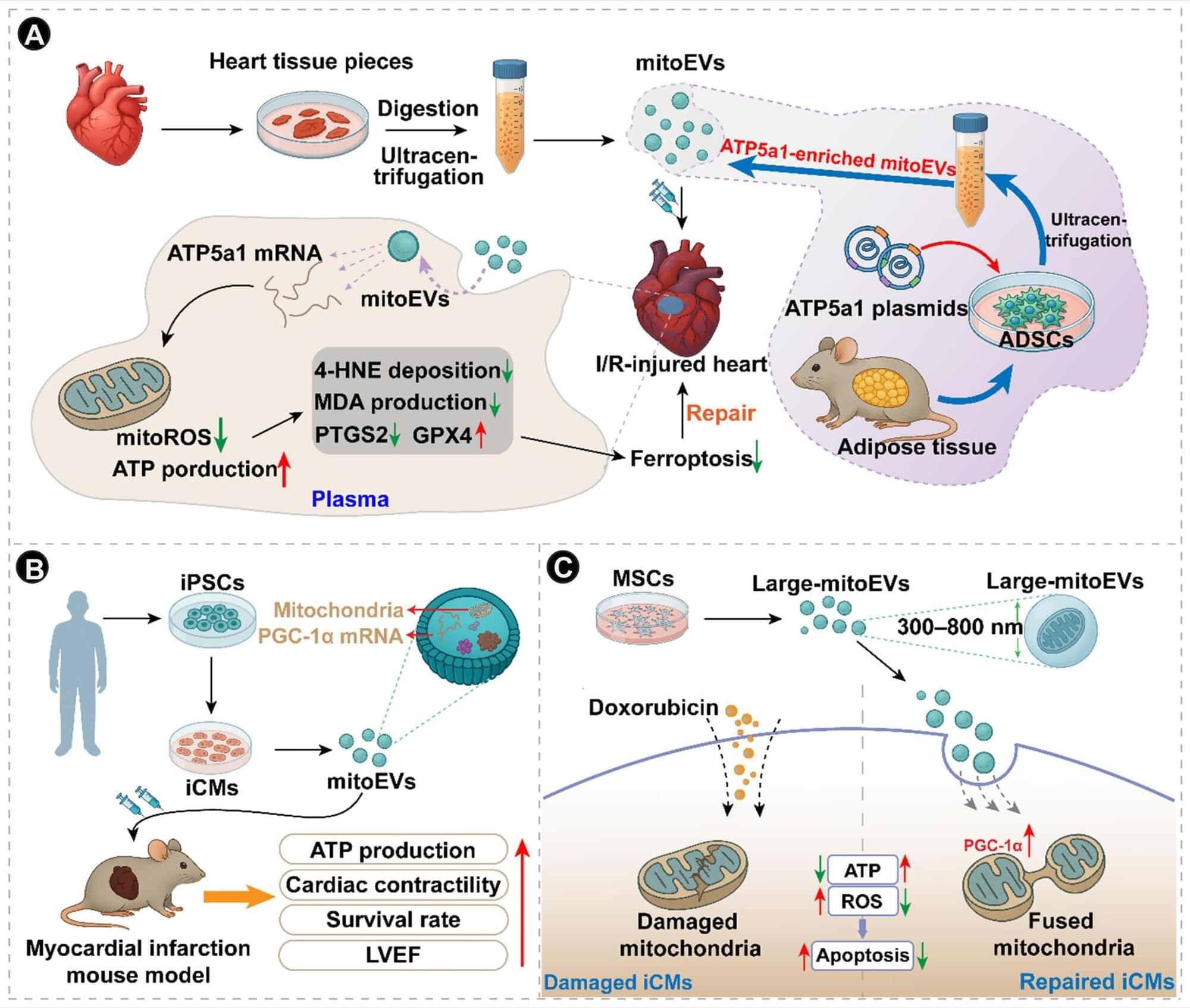

Mitochondria-derived vesicles (MDVs) and mitochondrial extracellular vesicles (mitoEVs) represent 2 related extensions of mitochondrial dynamics that link organelle maintenance to communication within and between cells. MDVs are small vesicles that bud directly from mitochondria and selectively package components of the outer membrane, inner membrane, or matrix. They act as a localized quality-control mechanism, removing oxidized or damaged material without engaging the full mitophagic machinery. After budding, MDVs typically enter the endolysosomal pathway, where they can fuse with late endosomes or lysosomes so that their cargo is degraded. A subset of MDVs instead targets other organelles, particularly peroxisomes, contributing to organelle crosstalk, lipid metabolism, and redox balance.