SideWinder’s 2025 phishing attacks used fake PDFs and ClickOnce apps to target South Asian embassies.

To sidestep detection, the attack chain involves the execution of PowerShell commands to disable AMSI, turn off TLS certificate validation, and enable Restricted Admin, in addition to running tools such as dark-kill and HRSword to terminate security software. Also deployed on the host are Cobalt Strike and SystemBC for persistent remote access.

The infection culminates with the launch of the Qilin ransomware, which encrypts files and drops a ransom note in each encrypted folder, but not before wiping event logs and deleting all shadow copies maintained by the Windows Volume Shadow Copy Service (VSS).

The findings coincide with the discovery of a sophisticated Qilin attack in which the group deployed its Linux ransomware variant on Windows systems and combined it with legitimate IT tools and the bring your own vulnerable driver (BYOVD) technique to bypass security barriers.

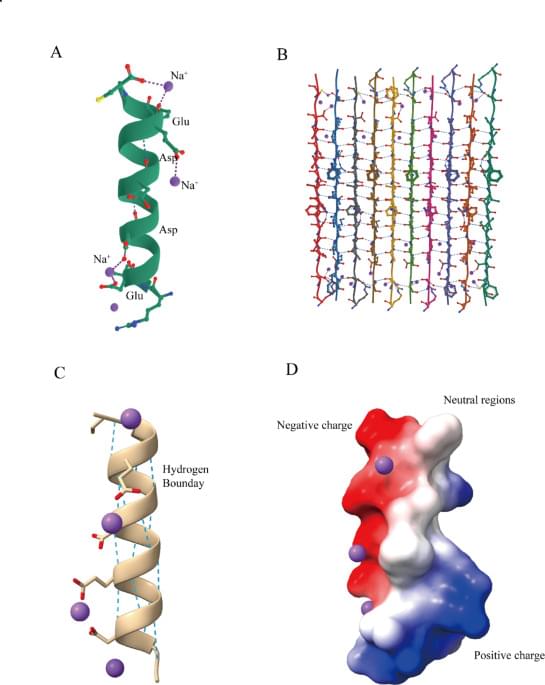

Zhang, M., Wan, Y. & Li, D. Predicted peptide scaffolds for drug screening in endometrial cancer organoids. Sci Rep 15, 37408 (2025). https://doi.org/10.1038/s41598-025-21282-1

ALS, also known as Lou Gehrig’s disease, is among the most challenging neurological disorders: relentlessly progressive, universally fatal, and without a cure even after more than a century and a half of research. Despite many advances, a key unanswered question remains—why do motor neurons, the cells that control body movement, degenerate while others are spared?

In a study appearing in Nature Communications, Kazuhide Asakawa and colleagues used single-cell–resolution imaging in transparent zebrafish to show that large spinal motor neurons, which generate strong body movements and are most vulnerable in ALS, operate under a constant, intrinsic burden of protein and organelle degradation.

These neurons maintain high baseline levels of autophagy, proteasome activity, and the unfolded protein response, suggesting a continuous struggle to maintain protein quality control.

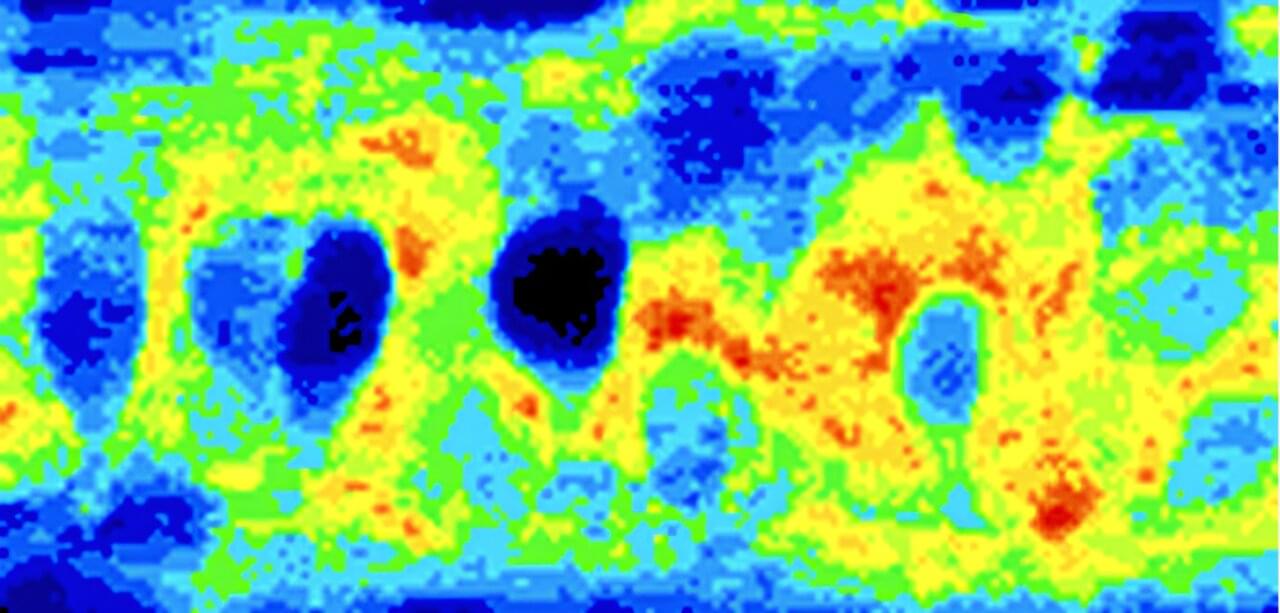

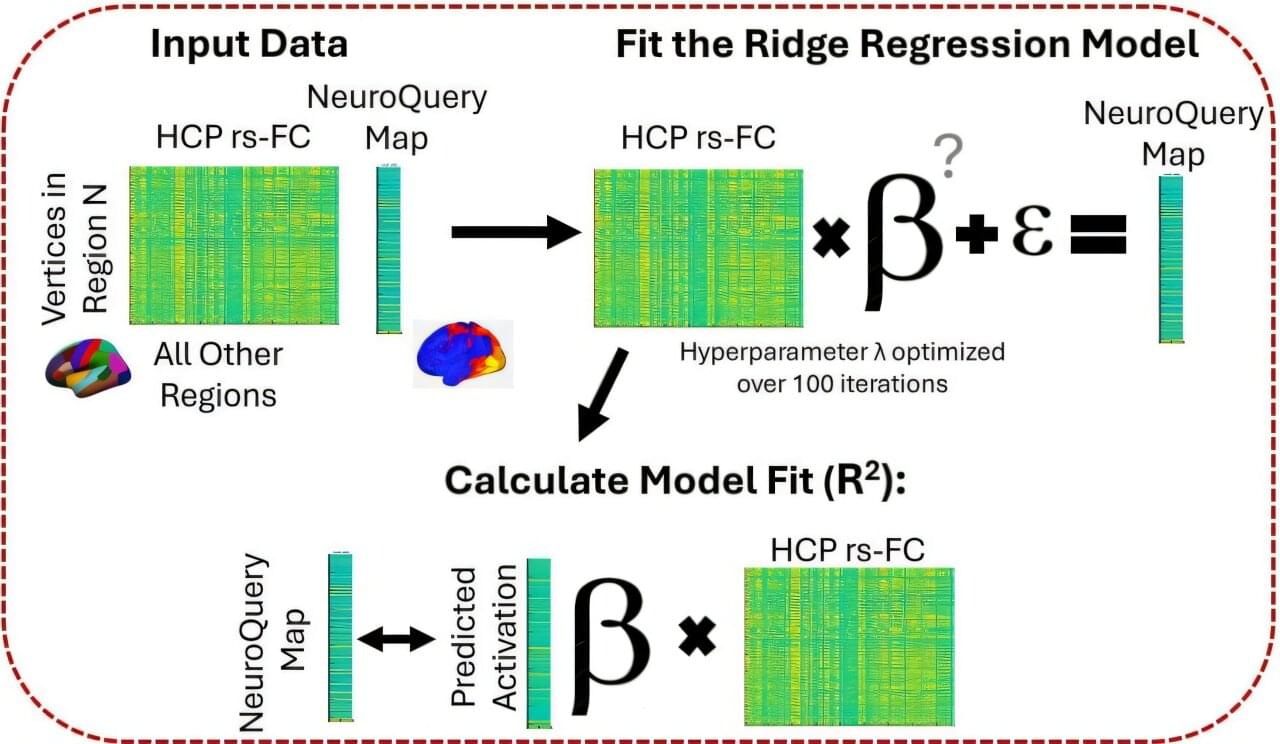

A new study provides the best evidence to date that the connection patterns between various parts of the human brain can tell scientists the specialized functions of each region.

Previous research has shown the relationship between connectivity and brain function for just one or a few functions, such as perception or social interactions.

But this study goes further by providing a “bird’s eye view” of the whole brain and its many functions, said Kelly Hiersche, lead author of the study and doctoral student in psychology at The Ohio State University.

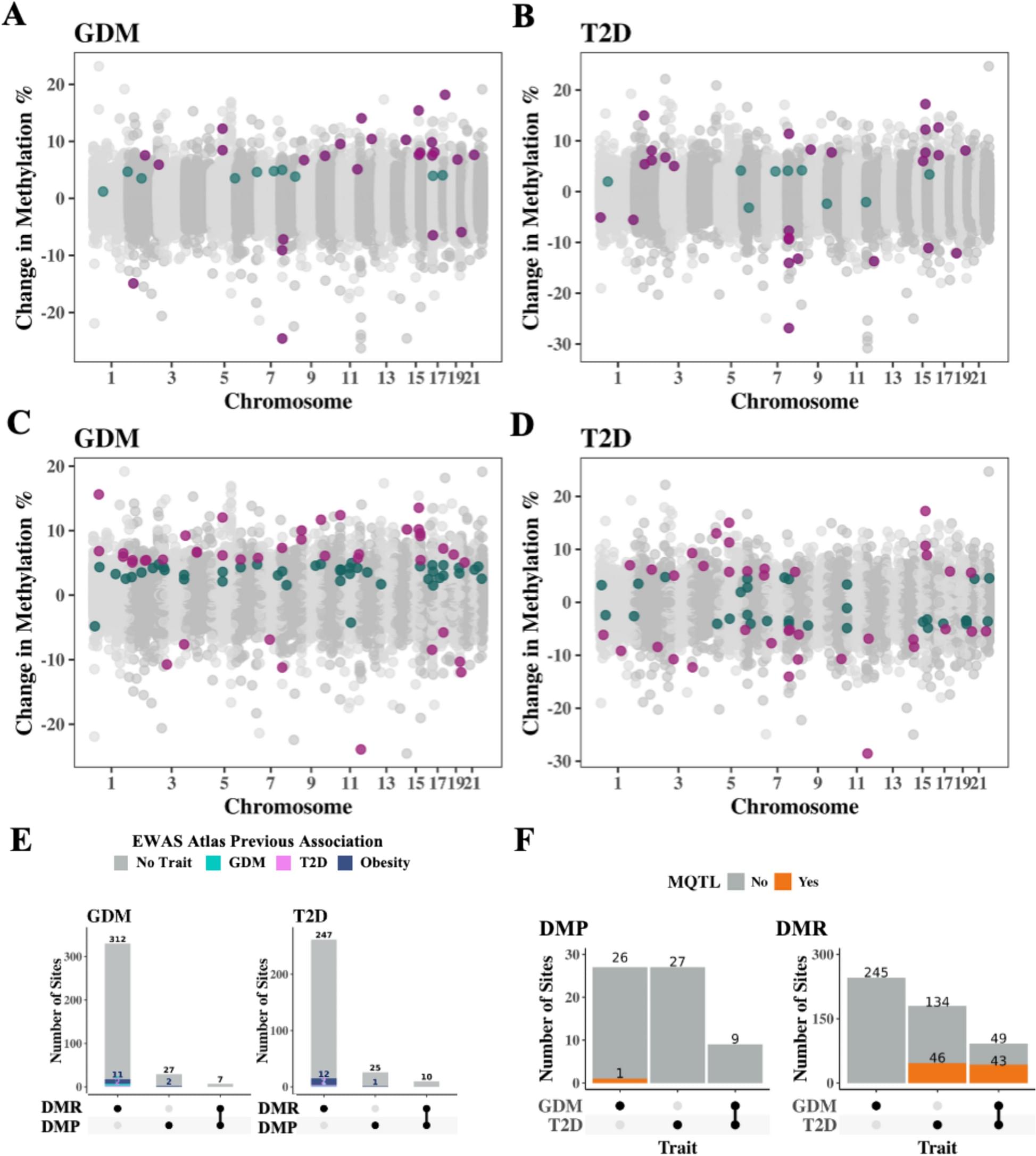

The incidence of type 2 diabetes (T2D) in youth is increasing, and in-utero exposure to maternal diabetes is a known risk factor, with higher risk associated with exposure to pregestational T2D than with exposure to gestational diabetes mellitus (GDM). We hypothesize that this differential risk is reflected in DNA methylation (DNAm) changes induced by the differential timing of in-utero exposure to maternal diabetes, and that exposure to diabetes throughout pregnancy (T2D), compared to exposure later in development (GDM), induces different DNAm signatures and confers different T2D risk on offspring. This study presents an epigenome-wide investigation of DNAm alterations associated with in-utero exposure to either maternal pregestational T2D or GDM, to determine whether the timing of prenatal diabetes exposure differentially alters DNAm.

We performed an epigenome-wide analysis on cord blood from 99 newborns exposed to pregestational T2D, 70 newborns exposed to GDM, and 41 newborns unexposed to diabetes in-utero from the Next Generation birth cohort. Associations were tested using multiple linear regression models, adjusting for sex, maternal age, BMI, smoking status, gestational age, cord blood cell type proportions and batch effects.
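As a rough illustration of what a covariate-adjusted test at a single methylation site can look like, the sketch below fits an ordinary least squares model with NumPy and returns the coefficient and t-statistic for the exposure term. It is not the study's pipeline: the function name `exposure_effect`, the three generic covariates, and all data values are assumptions made for this example. Only the group sizes (99 exposed, 41 unexposed) are borrowed from the text, and the methylation values are simulated.

```python
import numpy as np


def exposure_effect(methylation, exposure, covariates):
    """Fit methylation ~ intercept + exposure + covariates by ordinary
    least squares; return (coefficient, t_statistic) for the exposure term."""
    y = np.asarray(methylation, dtype=float)
    n = y.shape[0]
    # Design matrix: intercept column, exposure indicator, then covariates.
    X = np.column_stack([
        np.ones(n),
        np.asarray(exposure, dtype=float),
        np.asarray(covariates, dtype=float),
    ])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    residuals = y - X @ beta
    dof = n - X.shape[1]                      # residual degrees of freedom
    sigma2 = residuals @ residuals / dof      # residual variance estimate
    cov_beta = sigma2 * np.linalg.inv(X.T @ X)
    t_stat = beta[1] / np.sqrt(cov_beta[1, 1])
    return beta[1], t_stat


# Simulated example (hypothetical values, not study data).
rng = np.random.default_rng(0)
exposure = np.r_[np.ones(99), np.zeros(41)]   # 99 exposed, 41 unexposed
covariates = rng.normal(size=(140, 3))        # stand-ins for adjusted covariates
methylation = (
    0.5
    + 0.05 * exposure                         # assumed true exposure effect
    + covariates @ np.array([0.01, -0.02, 0.0])
    + rng.normal(scale=0.02, size=140)
)
coef, t_stat = exposure_effect(methylation, exposure, covariates)
print(f"exposure coefficient: {coef:.4f}, t-statistic: {t_stat:.2f}")
```

In an epigenome-wide setting the same model would be fitted once per site, which is why the resulting p-values need a multiple-testing adjustment before any site is called significant.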

We identified 27 differentially methylated sites associated with exposure to GDM, 27 sites associated with exposure to T2D, and 9 common sites associated with exposure to either GDM or T2D (adjusted p value < 0.01). One site at CLDN15 and two unannotated sites were previously reported as associated with obesity. We also identified 87 differentially methylated regions (DMRs) associated with in-utero exposure to GDM and 69 DMRs associated with in-utero exposure to T2D. We identified 23 DMR sites that were previously associated with obesity, three with T2D and five with in-utero exposure to GDM. Furthermore, we identified six CpG sites in the PTPRN2 gene, a gene previously associated with DNAm differences in blood of youth with T2D from the same population.
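The text reports an adjusted p value but does not say which adjustment was used. As one common possibility, and purely as an assumption, the sketch below applies the Benjamini-Hochberg false discovery rate procedure to a list of raw p-values. The function name `benjamini_hochberg` and the input values are hypothetical and are not taken from the study.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values in the input order."""
    m = len(p_values)
    # Indices of the p-values sorted from smallest to largest.
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so each adjusted value can be
    # capped by the adjusted values of all larger p-values (monotonicity).
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical raw p-values for five sites (illustration only).
raw = [0.001, 0.008, 0.039, 0.041, 0.042]
adj = benjamini_hochberg(raw)
significant = [i for i, q in enumerate(adj) if q < 0.01]
print(adj, significant)
```

With a 0.01 cutoff on the adjusted values, only sites whose adjusted p-value falls below the threshold would be retained, which is the kind of filtering the site counts above imply.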

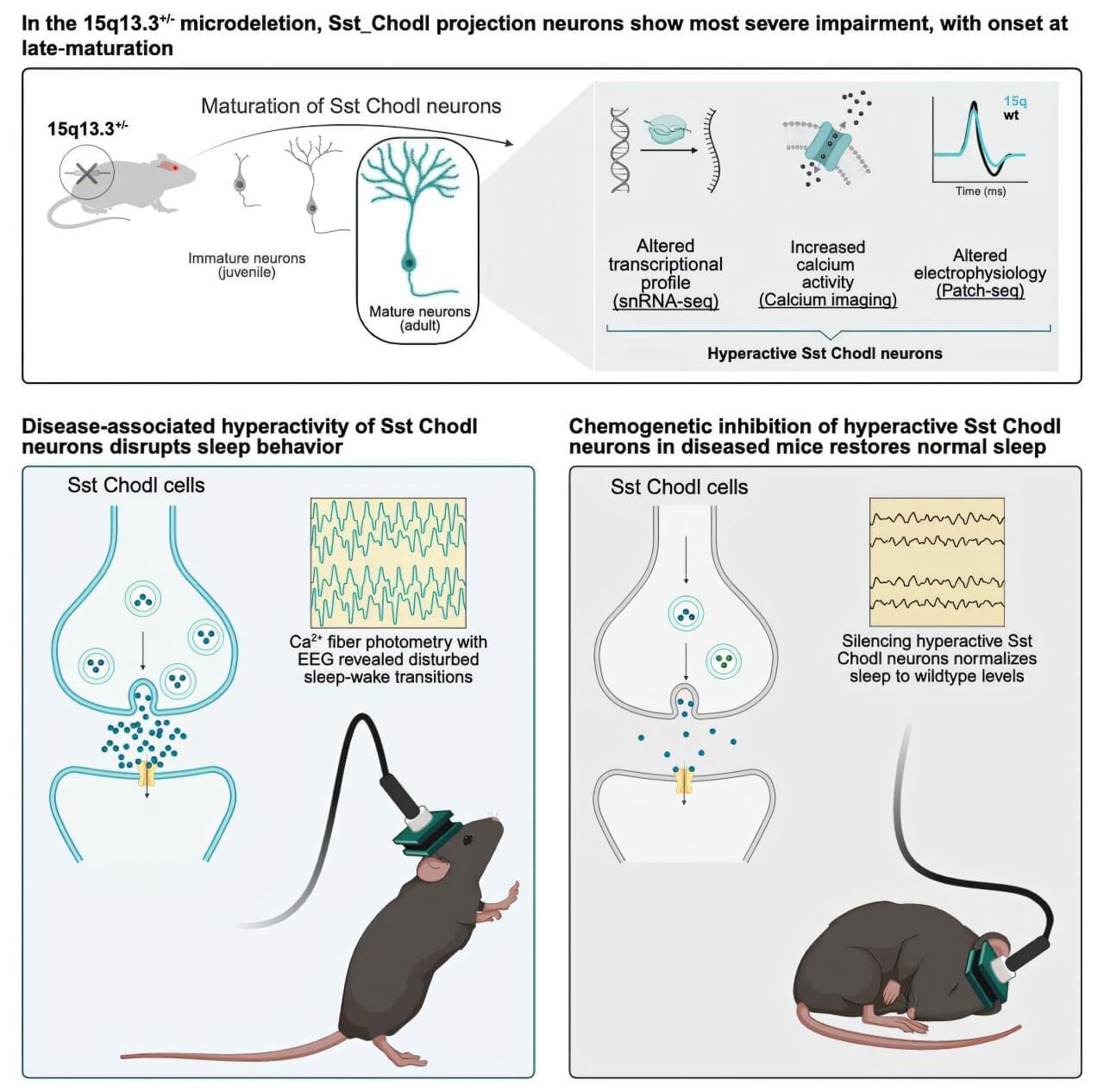

Difficulty completing everyday tasks. Failing memory. Unusually poor concentration. For many people living with schizophrenia, cognitive challenges are part of daily life. Alongside well-known symptoms such as hallucinations and delusions, these difficulties can make it hard to live the life they want. That is why researchers at the University of Copenhagen are working to find ways to prevent such symptoms—and they may now be one step closer.

In a new study, researchers discovered that a specific type of brain cell is abnormally active in mice displaying schizophrenia-like behavior. When the researchers reduced the activity of these cells, the mice’s behavior changed. The findings are published in the journal Neuron.

“Current treatments for cognitive symptoms in patients with diagnoses such as schizophrenia are inadequate. We need to understand more about what causes these cognitive symptoms that are derived from impairments during brain development. Our study may be the first step toward a new, targeted treatment that can prevent cognitive symptoms,” says Professor Konstantin Khodosevich from the Biotech Research and Innovation Center at the University of Copenhagen, and one of the researchers behind the study.

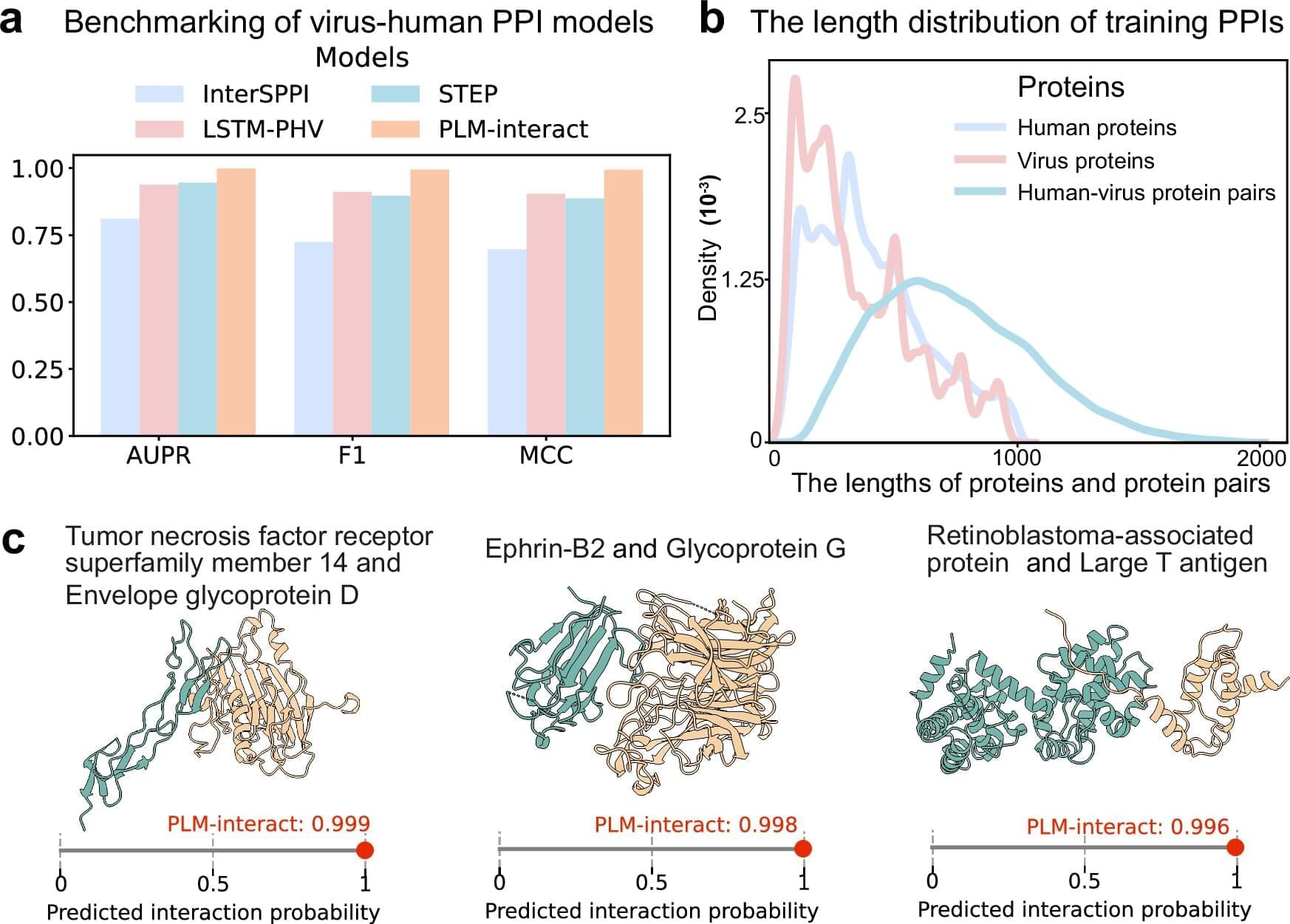

Scientists at the University of Glasgow have harnessed a powerful supercomputer, normally used by astronomers and physicists to study the universe, to develop a new machine learning model which can help translate the language of proteins.

In a new study published in Nature Communications, the cross-disciplinary team developed a large language model (LLM), called PLM-interact, to better understand protein interactions, and even predict which mutations will impact how these crucial molecules “talk” to one another.

Early tests of PLM-interact, a protein language model (PLM), show that it outperforms competing models in understanding and predicting how proteins interact with one another. The team’s research demonstrates that PLM-interact could help us better understand key areas of medical science, including the development of diseases such as cancer and viral infections.