Artificial intelligence is not replacing human intuition in these fields, but reimagining how questions are asked, explored and understood.

The energy-efficient desalination system produces fresh water without chemical additives and transforms leftover salts into useful materials.

Year 2025.

Researchers at Meta have developed a wristband that translates hand gestures into computer commands, such as moving a cursor, and can even transcribe handwriting traced in the air into text. It could make today’s personal devices far more accessible to people with reduced mobility or muscle weakness, and unlock new ways for people to control their gadgets effortlessly.

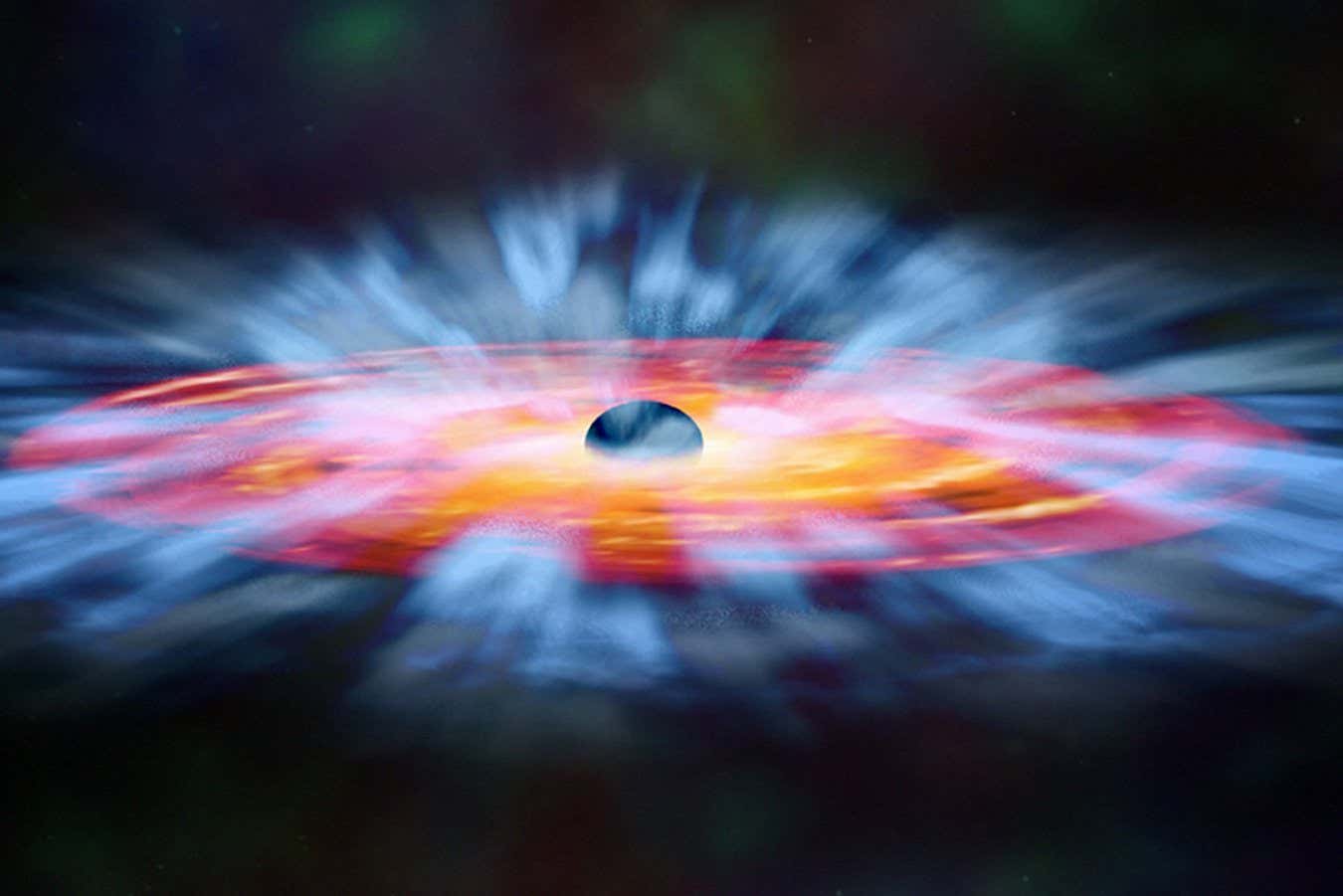

Emerging evidence highlights the brain renin–angiotensin system (RAS) as a key regulator of reward, memory, and stress. While these discoveries established the brain RAS as a promising therapeutic target for interventions in neurological and neuropsychiatric disorders, translational progress is hampered by the lack of an integrative mechanistic framework. Here, we consolidate accumulating evidence on the molecular and system-level roles of the brain RAS in reward, memory, and stress pathways, and its dual regulatory architecture. Pharmacological RAS modulation regulates domain-specific signaling in frontostriatal reward circuits, hippocampal–prefrontal memory networks, and frontolimbic fear networks. We evaluate the transdiagnostic therapeutic potential in neurological and neuropsychiatric disorders (e.g.

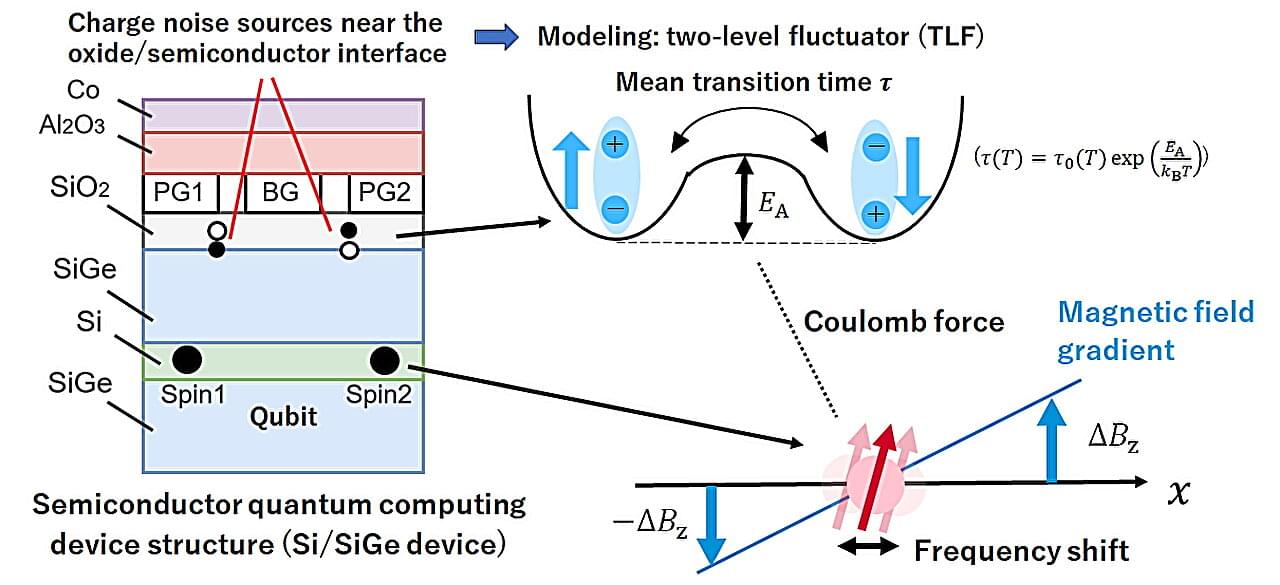

A spin qubit, in which quantum information is encoded in the spin state of an electron, is one of the most promising platforms for quantum computing. Spin qubits exhibit long coherence times and are compatible with advanced semiconductor manufacturing technologies. The leading implementation of spin qubits involves confined electrons inside quantum dots, a nanoscale semiconductor architecture that behaves like a controllable artificial atom. Recent advances have enabled high-fidelity operation of single- and two-qubit gates, exceeding the threshold required for certain surface code quantum error correction techniques.

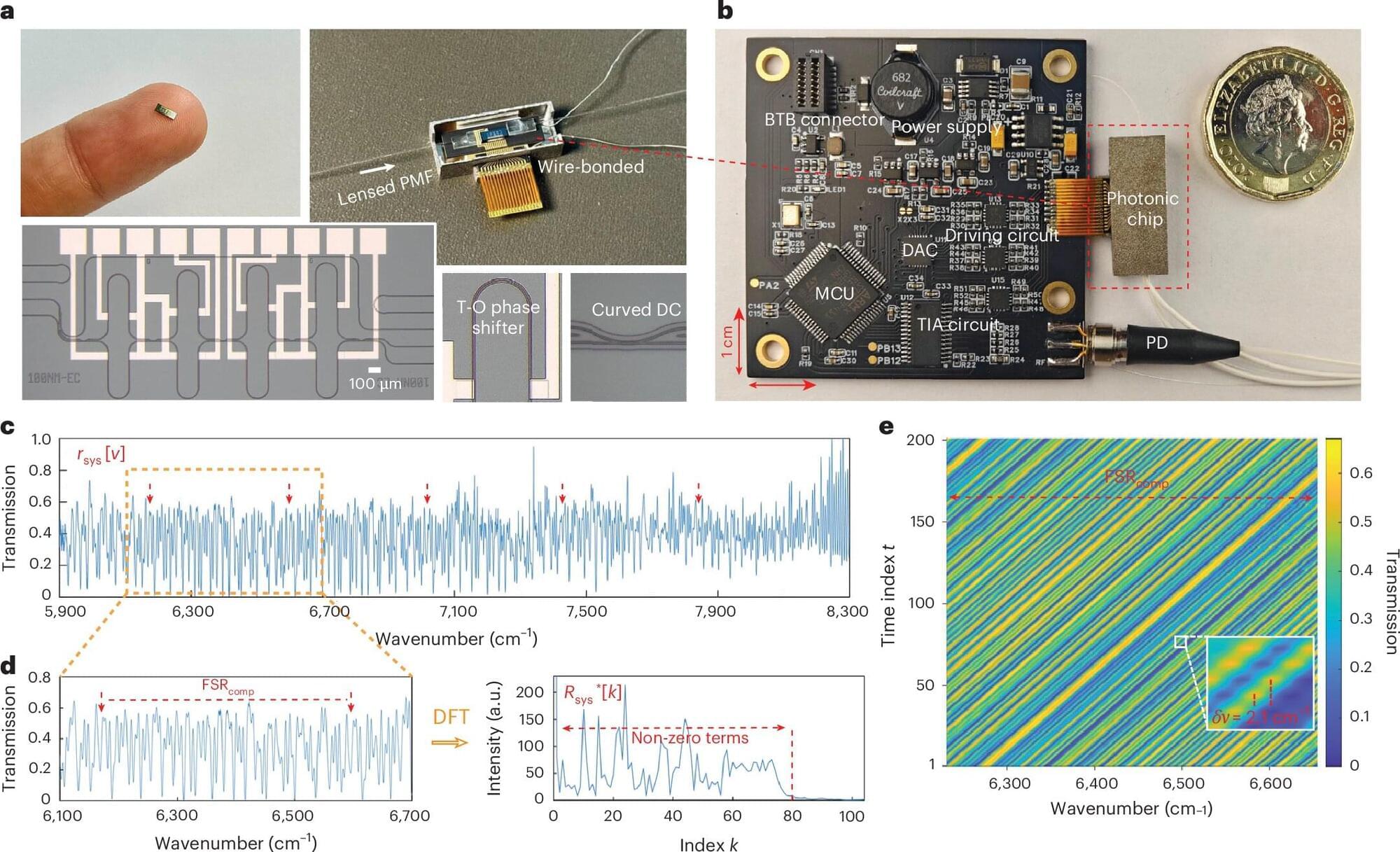

Researchers from the University of Cambridge and GlitterinTech, a startup founded by the same research group, have unveiled a fundamentally new type of optical spectrometer that delivers laboratory-grade precision in a device small enough to be embedded in portable and wearable technologies. By rethinking how spectra are measured and processed, the team has demonstrated a spectrometer costing only around $10, operating at a centimeter scale, and capable of applications ranging from industrial quality control to real-time health care monitoring.

Optical spectrometers underpin countless technologies, from chemical analysis and manufacturing to environmental sensing and medicine. Yet shrinking these instruments has historically involved painful trade-offs: Miniaturized devices typically sacrifice bandwidth, resolution or accuracy, limiting them to rough identification rather than true metrological measurements. The newly reported convolutional spectrometer overcomes these barriers by introducing a conceptually elegant operating principle grounded in the convolution theorem, offering unprecedented performance metrics compared with existing dispersive, Fourier-transform and reconstructive spectrometers.
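For readers unfamiliar with the convolution theorem mentioned above, here is a minimal numerical sketch of the identity itself: the Fourier transform of a circular convolution equals the element-wise product of the individual Fourier transforms. It is not the researchers' measurement or reconstruction method. The arrays `spectrum` and `response` are made-up illustrative values, and the helper names `dft` and `circular_convolve` are hypothetical.

```python
import cmath

def dft(x):
    """Naive discrete Fourier transform, O(n^2); fine for tiny inputs."""
    n = len(x)
    return [
        sum(x[k] * cmath.exp(-2j * cmath.pi * j * k / n) for k in range(n))
        for j in range(n)
    ]

def circular_convolve(x, h):
    """Circular (periodic) convolution of two equal-length sequences."""
    n = len(x)
    return [sum(x[k] * h[(i - k) % n] for k in range(n)) for i in range(n)]

# Hypothetical toy data: a sparse "spectrum" and a short "instrument response".
spectrum = [0.0, 1.0, 0.0, 0.5, 0.0, 0.0, 0.0, 0.0]
response = [0.5, 0.25, 0.0, 0.0, 0.0, 0.0, 0.0, 0.25]

# Left side: transform of the convolution.
lhs = dft(circular_convolve(spectrum, response))
# Right side: product of the two transforms.
rhs = [a * b for a, b in zip(dft(spectrum), dft(response))]

# The two sides agree up to floating-point rounding.
max_error = max(abs(a - b) for a, b in zip(lhs, rhs))
print(max_error < 1e-9)
```

Because convolution becomes simple multiplication in the Fourier domain, a signal that has been convolved with a known response can in principle be analyzed or recovered with inexpensive arithmetic. How the reported device exploits this is not described in the summary above.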

As artificial intelligence systems grow larger and more powerful, their energy demands are rising steeply. But recent research from the University of Massachusetts Amherst, published in Nature Communications, suggests that advanced AI capabilities may be achievable with far lower energy consumption.

A team led by Hava Siegelmann, Provost Professor in the Manning College of Information and Computer Sciences at UMass Amherst, has developed a novel AI system that more closely mirrors key aspects of how the human brain operates. Siegelmann and her lab have focused on two complementary goals: enabling AI systems to learn continuously in real time rather than only during a fixed training phase, and sharply reducing the energy required for intelligent computation.

“Current AI systems are extraordinarily powerful, but they are also extraordinarily energy-hungry,” said Siegelmann. “Our work shows that it is possible to design AI that remains highly capable while operating much more efficiently.”