Law of Accelerating Returns

by Lifeboat Foundation Scientific Advisory Board member Ray Kurzweil.

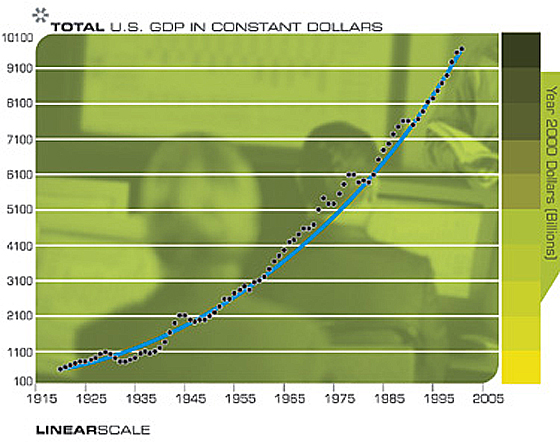

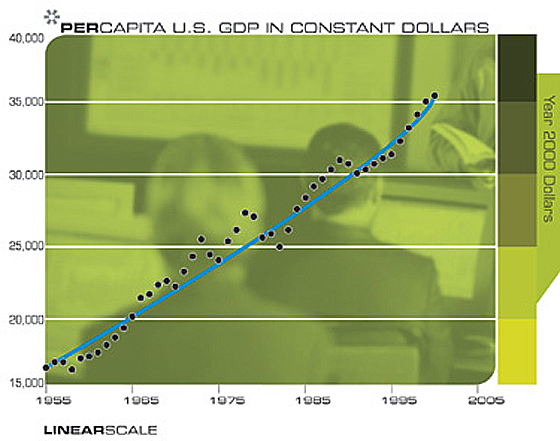

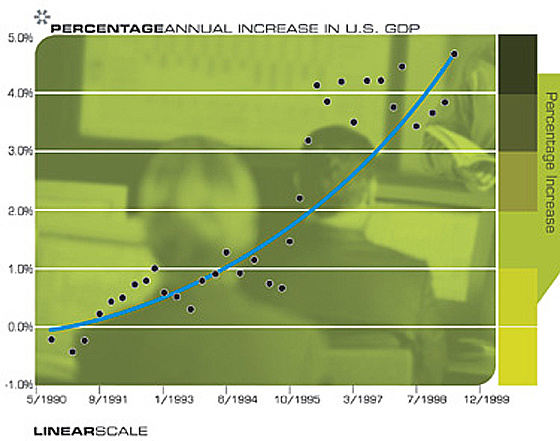

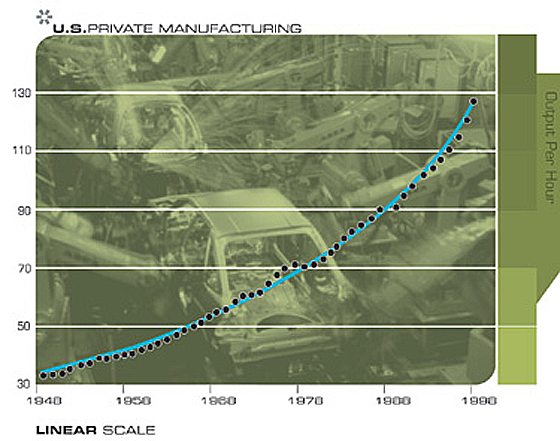

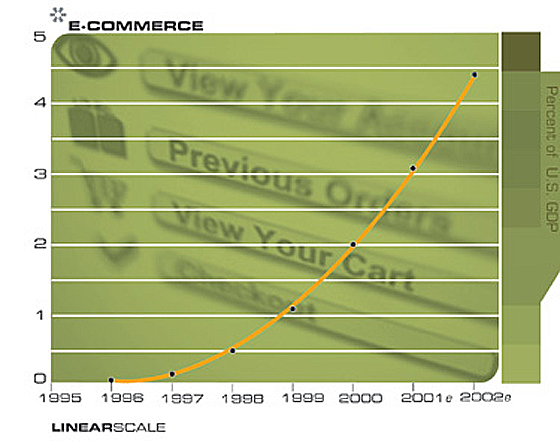

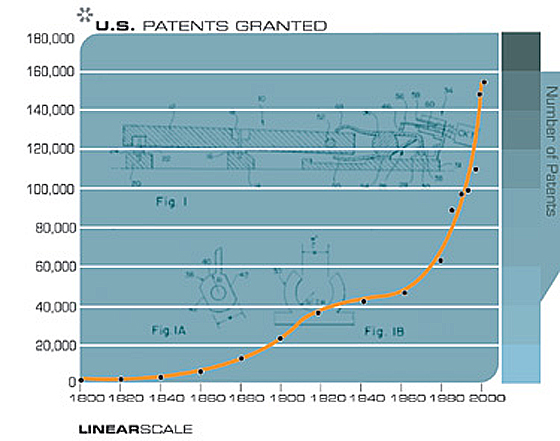

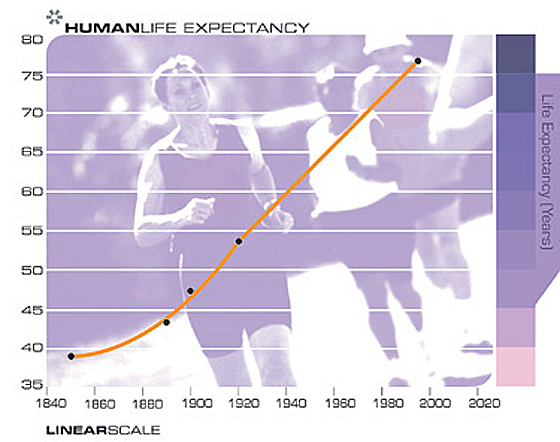

An analysis of the history of technology shows that technological change is exponential, contrary to the common-sense “intuitive linear” view. So we won’t experience 100 years of progress in the 21st century — it will be more like 20,000 years of progress (at today’s rate). The “returns”, such as chip speed and cost-effectiveness, also increase exponentially. There’s even exponential growth in the rate of exponential growth. Within a few decades, machine intelligence will surpass human intelligence, leading to The Singularity — technological change so rapid and profound it represents a rupture in the fabric of human history. The implications include the merger of biological and nonbiological intelligence, immortal software-based humans, and ultra-high levels of intelligence that expand outward in the universe at the speed of light.

Overview

You will get $40 trillion just by reading this essay and understanding what it says. For complete details, see below. (It’s true that authors will do just about anything to keep your attention, but I’m serious about this statement. Until I return to a further explanation, however, do read the first sentence of this paragraph carefully.)

Now back to the future: it’s widely misunderstood. Our forebears expected the future to be pretty much like their present, which had been pretty much like their past. Although exponential trends did exist a thousand years ago, they were at that very early stage where an exponential trend is so flat that it looks like no trend at all. So their lack of expectations was largely fulfilled. Today, in accordance with the common wisdom, everyone expects continuous technological progress and the social repercussions that follow. But the future will be far more surprising than most observers realize: few have truly internalized the implications of the fact that the rate of change itself is accelerating.

The Intuitive Linear View versus the Historical Exponential View

Most long-range forecasts of technical feasibility dramatically underestimate the power of future technology because they are based on what I call the “intuitive linear” view of technological progress rather than the “historical exponential” view. To express this another way, it is not the case that we will experience a hundred years of progress in the twenty-first century; rather, we will witness on the order of twenty thousand years of progress (at today’s rate of progress, that is).

This disparity in outlook comes up frequently in a variety of contexts, for example, the discussion of the ethical issues that Bill Joy raised in his controversial WIRED cover story, Why The Future Doesn’t Need Us. Bill and I have been frequently paired in a variety of venues as pessimist and optimist respectively. Although I’m expected to criticize Bill’s position, and indeed I do take issue with his prescription of relinquishment, I nonetheless usually end up defending Joy on the key issue of feasibility. Recently a Nobel Prize-winning panelist dismissed Bill’s concerns, exclaiming that “we’re not going to see self-replicating nanoengineered entities for a hundred years.” I pointed out that 100 years was indeed a reasonable estimate of the amount of technical progress required to achieve this particular milestone at today’s rate of progress. But because we’re doubling the rate of progress every decade, we’ll see a century of progress (at today’s rate) in only 25 calendar years.

When people think of a future period, they intuitively assume that the current rate of progress will continue for future periods. However, careful consideration of the pace of technology shows that the rate of progress is not constant. It is human nature to adapt to the changing pace, so the intuitive view is that the pace will continue at the current rate. Even for those of us who have been around long enough to experience how the pace increases over time, our unexamined intuition nonetheless provides the impression that progress changes at the rate that we have experienced recently. From the mathematician’s perspective, a primary reason for this is that an exponential curve approximates a straight line when viewed for a brief duration. So even though the rate of progress in the very recent past (e.g., this past year) is far greater than it was ten years ago (let alone a hundred or a thousand years ago), our memories are nonetheless dominated by our very recent experience. It is typical, therefore, that even sophisticated commentators, when considering the future, extrapolate the current pace of change over the next 10 years or 100 years to determine their expectations. This is why I call this way of looking at the future the “intuitive linear” view.

But a serious assessment of the history of technology shows that technological change is exponential. In exponential growth, we find that a key measurement such as computational power is multiplied by a constant factor for each unit of time (e.g., doubling every year) rather than just being added to incrementally. Exponential growth is a feature of any evolutionary process, of which technology is a primary example.
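The difference between the two views can be made concrete with a small sketch. The starting value, step, and factor below are arbitrary choices for illustration, not figures from the data discussed here: one series adds a constant each period, the other multiplies by a constant.

```python
# Illustrative sketch: start, step, and factor are arbitrary assumptions.

def linear_growth(start, step, periods):
    """Add a constant amount each period."""
    return [start + step * t for t in range(periods + 1)]


def exponential_growth(start, factor, periods):
    """Multiply by a constant factor each period."""
    return [start * factor ** t for t in range(periods + 1)]


linear = linear_growth(1, 1, 10)          # 1, 2, 3, ..., 11
doubling = exponential_growth(1, 2, 10)   # 1, 2, 4, ..., 1024

for t, (lin, exp) in enumerate(zip(linear, doubling)):
    print(f"t={t:2d}  linear={lin:3d}  exponential={exp:5d}")
```

After ten periods the additive series has reached 11 while the doubling series has reached 1,024, even though the two are identical over the first step.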

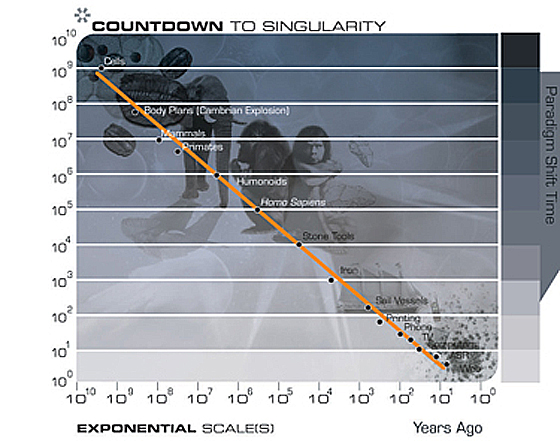

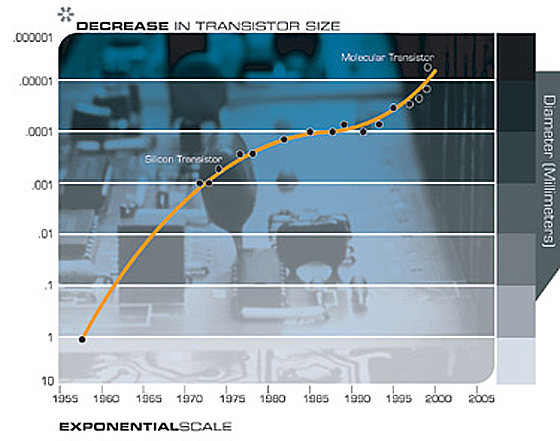

One can examine the data in different ways, on different time scales, and for a wide variety of technologies ranging from electronic to biological, and the acceleration of progress and growth applies. Indeed, we find not just simple exponential growth, but “double” exponential growth, meaning that the rate of exponential growth is itself growing exponentially. These observations do not rely merely on an assumption of the continuation of Moore’s law (i.e., the exponential shrinking of transistor sizes on an integrated circuit), but are based on a rich model of diverse technological processes. What they clearly show is that technology, particularly the pace of technological change, advances (at least) exponentially, not linearly, and has been doing so since the advent of technology, indeed since the advent of evolution on Earth.

I emphasize this point because it is the most important mistake that would-be prognosticators make in considering future trends. Most technology forecasts ignore altogether this “historical exponential view” of technological progress. That is why people tend to overestimate what can be achieved in the short term (because we tend to leave out necessary details), but underestimate what can be achieved in the long term (because the exponential growth is ignored).

The Law of Accelerating Returns

We can organize these observations into what I call the law of accelerating returns as follows:

Evolution applies positive feedback in that the more capable methods resulting from one stage of evolutionary progress are used to create the next stage. As a result, the rate of progress of an evolutionary process increases exponentially over time.

Over time, the “order” of the information embedded in the evolutionary process (i.e., the measure of how well the information fits a purpose, which in evolution is survival) increases.

A correlate of the above observation is that the “returns” of an evolutionary process (e.g., the speed, cost-effectiveness, or overall “power” of a process) increase exponentially over time.

In another positive feedback loop, as a particular evolutionary process (e.g., computation) becomes more effective (e.g., cost effective), greater resources are deployed toward the further progress of that process. This results in a second level of exponential growth (i.e., the rate of exponential growth itself grows exponentially).

Biological evolution is one such evolutionary process.

Technological evolution is another such evolutionary process. Indeed, the emergence of the first technology-creating species resulted in the new evolutionary process of technology. Therefore, technological evolution is an outgrowth of, and a continuation of, biological evolution.

A specific paradigm (a method or approach to solving a problem, e.g., shrinking transistors on an integrated circuit as an approach to making more powerful computers) provides exponential growth until the method exhausts its potential. When this happens, a paradigm shift (i.e., a fundamental change in the approach) occurs, which enables exponential growth to continue.

If we apply these principles at the highest level of evolution on Earth, the first step, the creation of cells, introduced the paradigm of biology. The subsequent emergence of DNA provided a digital method to record the results of evolutionary experiments. Then, the evolution of a species that combined rational thought with an opposable appendage (i.e., the thumb) caused a fundamental paradigm shift from biology to technology. The upcoming primary paradigm shift will be from biological thinking to a hybrid combining biological and nonbiological thinking. This hybrid will include “biologically inspired” processes resulting from the reverse engineering of biological brains.

If we examine the timing of these steps, we see that the process has continuously accelerated. The evolution of life forms required billions of years for the first steps (e.g., primitive cells); later on, progress accelerated. During the Cambrian explosion, major paradigm shifts took only tens of millions of years. Later on, humanoids developed over a period of millions of years, and Homo sapiens over a period of only hundreds of thousands of years.

With the advent of a technology-creating species, the exponential pace became too fast for evolution through DNA-guided protein synthesis and moved on to human-created technology. Technology goes beyond mere tool making; it is a process of creating ever more powerful technology using the tools from the previous round of innovation. In this way, human technology is distinguished from the tool making of other species. There is a record of each stage of technology, and each new stage of technology builds on the order of the previous stage.

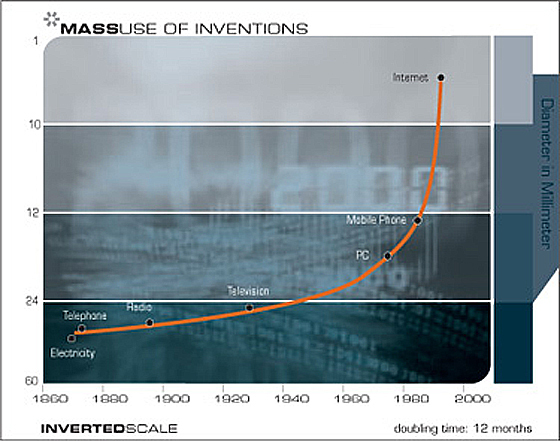

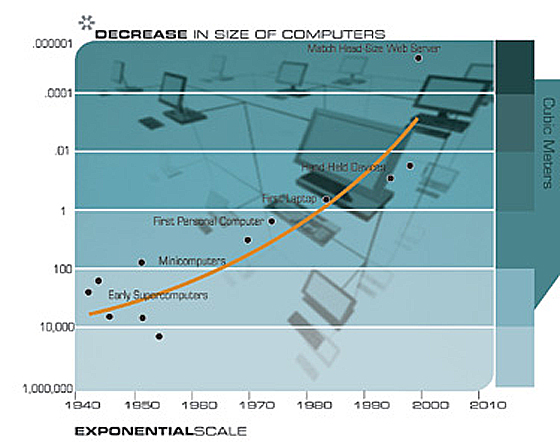

The first technological steps (sharp edges, fire, the wheel) took tens of thousands of years. For people living in this era, there was little noticeable technological change in even a thousand years. By 1000 A.D., progress was much faster and a paradigm shift required only a century or two. In the nineteenth century, we saw more technological change than in the nine centuries preceding it. Then in the first twenty years of the twentieth century, we saw more advancement than in all of the nineteenth century. Now, paradigm shifts occur in only a few years’ time. The World Wide Web did not exist in anything like its present form just a few years ago; it didn’t exist at all a decade ago.

The paradigm shift rate (i.e., the overall rate of technical progress) is currently doubling (approximately) every decade; that is, paradigm shift times are halving every decade (and the rate of acceleration is itself growing exponentially). So, the technological progress in the twenty-first century will be equivalent to what would require (in the linear view) on the order of 200 centuries. In contrast, the twentieth century saw only about 25 years of progress (again at today’s rate of progress) since we have been speeding up to current rates. So the twenty-first century will see almost a thousand times greater technological change than its predecessor.
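As a rough check on the figures above, here is one stepwise reading of “doubling every decade” that reproduces them. The schedule is an assumption, since the text does not spell out a model: progress is taken to run at twice today’s rate throughout the first decade, four times throughout the second, and so on. A smoothly compounding rate would give somewhat different totals.

```python
# Assumption (not stated in this form in the essay): progress runs at 2x today's
# rate during the first decade, 4x during the second, doubling each decade.

def progress_after(calendar_years, doubling_period=10):
    """Years of progress, measured at today's rate, after `calendar_years`."""
    total = 0.0
    decade = 1
    remaining = calendar_years
    while remaining > 0:
        span = min(doubling_period, remaining)  # a partial final decade is allowed
        total += span * 2 ** decade
        remaining -= span
        decade += 1
    return total


century = progress_after(100)   # 10 * (2 + 4 + ... + 1024)
quarter = progress_after(25)    # 20 + 40 + half a decade at 8x

print(f"100 calendar years -> {century:,.0f} years of progress")
print(f" 25 calendar years -> {quarter:,.0f} years of progress")
```

Under that assumption, a hundred calendar years yield 20,460 years of progress at today’s rate (on the order of 200 centuries), and the first hundred years’ worth arrives after 25 calendar years.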

The Singularity Is Near

To appreciate the nature and significance of the coming “singularity”, it is important to ponder the nature of exponential growth. Toward this end, I am fond of telling the tale of the inventor of chess and his patron, the emperor of China. In response to the emperor’s offer of a reward for his new beloved game, the inventor asked for a single grain of rice on the first square, two on the second square, four on the third, and so on. The emperor quickly granted this seemingly benign and humble request. One version of the story has the emperor going bankrupt as the 63 doublings ultimately totaled 18 million trillion grains of rice. At ten grains of rice per square inch, this requires rice fields covering twice the surface area of the Earth, oceans included. Another version of the story has the inventor losing his head.

It should be pointed out that as the emperor and the inventor went through the first half of the chessboard, things were fairly uneventful. The inventor was given spoonfuls of rice, then bowls of rice, then barrels. By the end of the first half of the chessboard, the inventor had accumulated one large field’s worth (4 billion grains), and the emperor did start to take notice. It was as they progressed through the second half of the chessboard that the situation quickly deteriorated. Incidentally, with regard to the doublings of computation, that’s about where we stand now: there have been slightly more than 32 doublings of performance since the first programmable computers were invented during World War II.
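The arithmetic of the chessboard can be checked directly. This minimal sketch assumes one grain on the first square and a doubling on each of the remaining 63; the function name is just for illustration.

```python
# Grains on square k (1-based) are 2**(k - 1): one grain, then 63 doublings.

def grains_through(square):
    """Total grains on squares 1..square."""
    return sum(2 ** (k - 1) for k in range(1, square + 1))


first_half = grains_through(32)    # about 4.3 billion
whole_board = grains_through(64)   # about 1.8e19, i.e. 18 million trillion

print(f"first half : {first_half:,}")
print(f"whole board: {whole_board:,}")
print(f"ratio      : {whole_board // first_half:,}")
```

The first half of the board holds about 4.3 billion grains and the whole board about 1.8 × 10^19, which is the 18 million trillion quoted above. Almost all of the total therefore sits on the second half of the board.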

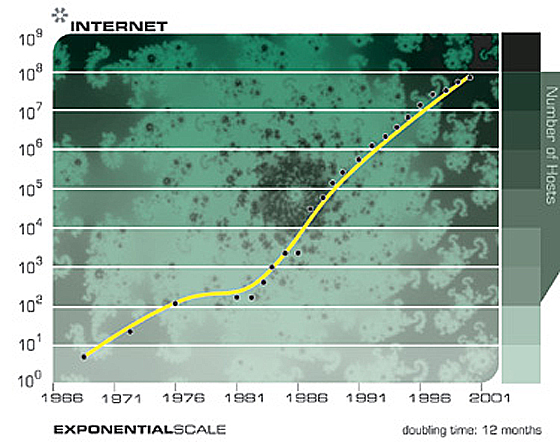

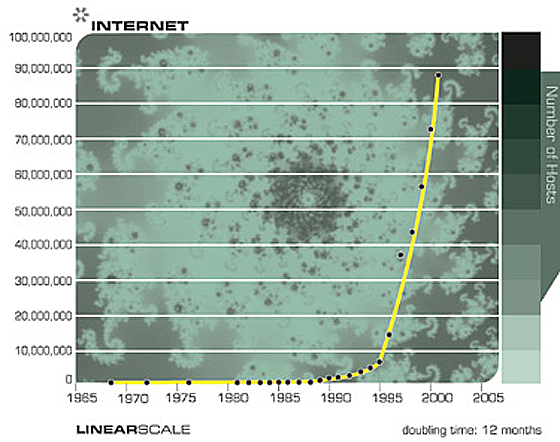

This is the nature of exponential growth. Although technology grows in the exponential domain, we humans live in a linear world. So technological trends are not noticed as small levels of technological power are doubled. Then seemingly out of nowhere, a technology explodes into view. For example, when the Internet went from 20,000 to 80,000 nodes over a two-year period during the 1980s, this progress remained hidden from the general public. A decade later, when it went from 20 million to 80 million nodes in the same amount of time, the impact was rather conspicuous.

As exponential growth continues to accelerate into the first half of the twenty-first century, it will appear to explode into infinity, at least from the limited and linear perspective of contemporary humans. The progress will ultimately become so fast that it will rupture our ability to follow it. It will literally get out of our control. The illusion that we have our hand “on the plug” will be dispelled.

Can the pace of technological progress continue to speed up indefinitely? Is there not a point where humans are unable to think fast enough to keep up with it? With regard to unenhanced humans, clearly so. But what would a thousand scientists, each a thousand times more intelligent than human scientists today, and each operating a thousand times faster than contemporary humans (because the information processing in their primarily nonbiological brains is faster) accomplish? One year would be like a millennium. What would they come up with?

Well, for one thing, they would come up with technology to become even more intelligent (because their intelligence is no longer of fixed capacity). They would change their own thought processes to think even faster. When the scientists evolve to be a million times more intelligent and operate a million times faster, then an hour would result in a century of progress (in today’s terms).

This, then, is the Singularity. The Singularity is technological change so rapid and so profound that it represents a rupture in the fabric of human history. Some would say that we cannot comprehend the Singularity, at least with our current level of understanding, and that it is impossible, therefore, to look past its “event horizon” and make sense of what lies beyond.

My view is that despite our profound limitations of thought, constrained as we are today to a mere hundred trillion interneuronal connections in our biological brains, we nonetheless have sufficient powers of abstraction to make meaningful statements about the nature of life after the Singularity. Most importantly, it is my view that the intelligence that will emerge will continue to represent the human civilization, which is already a human-machine civilization. This will be the next step in evolution, the next high level paradigm shift.

To put the concept of Singularity into perspective, let’s explore the history of the word itself. Singularity is a familiar word meaning a unique event with profound implications. In mathematics, the term implies infinity, the explosion of value that occurs when dividing a constant by a number that gets closer and closer to zero. In physics, similarly, a singularity denotes an event or location of infinite power. At the center of a black hole, matter is so dense that its gravity is infinite. As nearby matter and energy are drawn into the black hole, an event horizon separates the region from the rest of the Universe. It constitutes a rupture in the fabric of space and time. The Universe itself is said to have begun with just such a Singularity.

In the 1950s, John von Neumann was quoted as saying that “the ever accelerating progress of technology…gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue.” In the 1960s, I. J. Good wrote of an “intelligence explosion” resulting from intelligent machines designing their next generation without human intervention. In 1986, Vernor Vinge, a mathematician and computer scientist at San Diego State University, wrote about a rapidly approaching technological “singularity” in his science fiction novel, Marooned in Realtime. Then in 1993, Vinge presented a paper to a NASA-organized symposium which described the Singularity as an impending event resulting primarily from the advent of “entities with greater than human intelligence”, which Vinge saw as the harbinger of a runaway phenomenon.

From my perspective, the Singularity has many faces. It represents the nearly vertical phase of exponential growth where the rate of growth is so extreme that technology appears to be growing at infinite speed. Of course, from a mathematical perspective, there is no discontinuity, no rupture, and the growth rates remain finite, albeit extraordinarily large. But from our currently limited perspective, this imminent event appears to be an acute and abrupt break in the continuity of progress. However, I emphasize the word “currently”, because one of the salient implications of the Singularity will be a change in the nature of our ability to understand. In other words, we will become vastly smarter as we merge with our technology.

When I wrote my first book, The Age of Intelligent Machines, in the 1980s, I ended the book with the specter of the emergence of machine intelligence greater than human intelligence, but found it difficult to look beyond this event horizon. Now having thought about its implications for the past 20 years, I feel that we are indeed capable of understanding the many facets of this threshold, one that will transform all spheres of human life.

Consider a few examples of the implications. The bulk of our experiences will shift from real reality to virtual reality. Most of the intelligence of our civilization will ultimately be nonbiological, which by the end of this century will be trillions of trillions of times more powerful than human intelligence. However, to address often expressed concerns, this does not imply the end of biological intelligence, even if thrown from its perch of evolutionary superiority. Moreover, it is important to note that the nonbiological forms will be derivative of biological design. In other words, our civilization will remain human, indeed in many ways more exemplary of what we regard as human than it is today, although our understanding of the term will move beyond its strictly biological origins.

Many observers have nonetheless expressed alarm at the emergence of forms of nonbiological intelligence superior to human intelligence. The potential to augment our own intelligence through intimate connection with other thinking mediums does not necessarily alleviate the concern, as some people have expressed the wish to remain “unenhanced” while at the same time keeping their place at the top of the intellectual food chain. My view is that the likely outcome is that on the one hand, from the perspective of biological humanity, these superhuman intelligences will appear to be their transcendent servants, satisfying their needs and desires. On the other hand, fulfilling the wishes of a revered biological legacy will occupy only a trivial portion of the intellectual power that the Singularity will bring.

Needless to say, the Singularity will transform all aspects of our lives, social, sexual, and economic, which I explore herewith.

Wherefrom Moore’s Law

Before considering further the implications of the Singularity, let’s examine the wide range of technologies that are subject to the law of accelerating returns. The exponential trend that has gained the greatest public recognition has become known as “Moore’s Law”. Gordon Moore, one of the inventors of integrated circuits, and then Chairman of Intel, noted in the mid-1970s that we could squeeze twice as many transistors on an integrated circuit every 24 months. Given that the electrons have less distance to travel, the circuits also run twice as fast, providing an overall quadrupling of computational power.

After sixty years of devoted service, Moore’s Law will die a dignified death no later than the year 2019. By that time, transistor features will be just a few atoms in width, and the strategy of ever finer photolithography will have run its course. So, will that be the end of the exponential growth of computing?

Don’t bet on it.

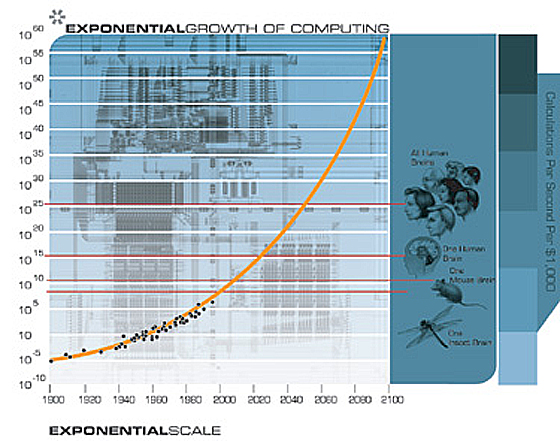

If we plot the speed (in instructions per second) per $1,000 (in constant dollars) of 49 famous calculators and computers spanning the entire twentieth century, we note some interesting observations.

Moore’s Law Was Not the First, but the Fifth Paradigm To Provide Exponential Growth of Computing

Each time one paradigm runs out of steam, another picks up the pace

It is important to note that Moore’s Law of Integrated Circuits was not the first, but the fifth paradigm to provide accelerating price-performance. Computing devices have been consistently multiplying in power (per unit of time) from the mechanical calculating devices used in the 1890 U.S. Census, to Turing’s relay-based “Robinson” machine that cracked the Nazi Enigma code, to the CBS vacuum tube computer that predicted the election of Eisenhower, to the transistor-based machines used in the first space launches, to the integrated-circuit-based personal computer which I used to dictate (and automatically transcribe) this essay.

But I noticed something else surprising. When I plotted the 49 machines on an exponential graph (where a straight line means exponential growth), I didn’t get a straight line. What I got was another exponential curve. In other words, there’s exponential growth in the rate of exponential growth. Computer speed (per unit cost) doubled every three years between 1910 and 1950, doubled every two years between 1950 and 1966, and is now doubling every year.
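To see why a shrinking doubling time bends the curve upward on a logarithmic chart, it helps to convert each doubling time into an annual growth factor and a slope in doublings per year. The three doubling times are the ones quoted above; the helper names are illustrative only.

```python
# Doubling times (in years) quoted in the text for each era.
DOUBLING_TIMES = [("1910-1950", 3.0), ("1950-1966", 2.0), ("now", 1.0)]


def annual_factor(doubling_years):
    """Growth factor per year implied by a given doubling time."""
    return 2 ** (1 / doubling_years)


def log2_slope(doubling_years):
    """Slope on a log2 chart, in doublings per year."""
    return 1 / doubling_years


for era, years in DOUBLING_TIMES:
    print(f"{era:>9}: x{annual_factor(years):.3f} per year, "
          f"{log2_slope(years):.2f} doublings per year")

# Doublings accumulated in the two eras whose end points are given.
early = (1950 - 1910) / 3.0    # about 13.3
middle = (1966 - 1950) / 2.0   # 8.0
print(f"1910-1950: {early:.1f} doublings, 1950-1966: {middle:.1f} doublings")
```

A constant slope would be a straight line on such a chart. Here the slope rises from about 0.33 to 0.5 to 1 doubling per year, which is the upward curve described above.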

But where does Moore’s Law come from? What is behind this remarkably predictable phenomenon? I have seen relatively little written about the ultimate source of this trend. Is it just “a set of industry expectations and goals”, as Randy Isaac, head of basic science at IBM contends? Or is there something more profound going on?

In my view, it is one manifestation (among many) of the exponential growth of the evolutionary process that is technology. The exponential growth of computing is a marvelous quantitative example of the exponentially growing returns from an evolutionary process. We can also express the exponential growth of computing in terms of an accelerating pace: it took ninety years to achieve the first MIPS (million instructions per second) per thousand dollars, now we add one MIPS per thousand dollars every day.

Moore’s Law narrowly refers to the number of transistors on an integrated circuit of fixed size, and sometimes has been expressed even more narrowly in terms of transistor feature size. But rather than feature size (which is only one contributing factor), or even number of transistors, I think the most appropriate measure to track is computational speed per unit cost. This takes into account many levels of “cleverness” (i.e., innovation, which is to say, technological evolution). In addition to all of the innovation in integrated circuits, there are multiple layers of innovation in computer design, e.g., pipelining, parallel processing, instruction look-ahead, instruction and memory caching, and many others.

From the above chart, we see that the exponential growth of computing didn’t start with integrated circuits (around 1958), or even transistors (around 1947), but goes back to the electromechanical calculators used in the 1890 and 1900 U.S. Census. This chart spans at least five distinct paradigms of computing, of which Moore’s Law pertains to only the latest one.

It’s obvious what the sixth paradigm will be after Moore’s Law runs out of steam during the second decade of this century. Chips today are flat (although it does require up to 20 layers of material to produce one layer of circuitry). Our brain, in contrast, is organized in three dimensions. We live in a three-dimensional world, so why not use the third dimension? The human brain actually uses a very inefficient electrochemical, digital-controlled analog computational process. The bulk of the calculations are done in the interneuronal connections at a speed of only about 200 calculations per second (in each connection), which is about ten million times slower than contemporary electronic circuits. But the brain gains its prodigious powers from its extremely parallel organization in three dimensions. There are many technologies in the wings that build circuitry in three dimensions. Nanotubes, for example, which are already working in laboratories, build circuits from pentagonal arrays of carbon atoms. One cubic inch of nanotube circuitry would be a million times more powerful than the human brain. There are more than enough new computing technologies now being researched, including three-dimensional silicon chips, optical computing, crystalline computing, DNA computing, and quantum computing, to keep the law of accelerating returns as applied to computation going for a long time.
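The brain figures used in this essay can be combined in a quick back-of-the-envelope calculation. The connection count comes from the earlier remark about a hundred trillion interneuronal connections; treating total capacity as a simple product of connections and per-connection speed is a simplifying assumption.

```python
# Figures quoted in the essay; the simple product model is an assumption.
CONNECTIONS = 100 * 10 ** 12        # a hundred trillion interneuronal connections
CALCS_PER_CONNECTION = 200          # calculations per second in each connection
ELECTRONICS_SPEED_RATIO = 10 ** 7   # circuits are "about ten million times" faster

# Aggregate capacity if every connection computes in parallel.
brain_calcs_per_second = CONNECTIONS * CALCS_PER_CONNECTION

# Per-element rate of electronic circuits implied by the stated speed ratio.
implied_circuit_rate = CALCS_PER_CONNECTION * ELECTRONICS_SPEED_RATIO

print(f"brain, all connections : {brain_calcs_per_second:.1e} calculations/s")
print(f"implied circuit element: {implied_circuit_rate:.1e} operations/s")
```

The product is 2 × 10^16 calculations per second for the brain as a whole, while the ten-million-fold speed ratio implies circuit elements operating on the order of 2 × 10^9 times per second. The brain’s advantage in this picture comes entirely from parallelism.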

Thus the (double) exponential growth of computing is broader than Moore’s Law, which refers to only one of its paradigms. And this accelerating growth of computing is, in turn, part of the yet broader phenomenon of the accelerating pace of any evolutionary process. Observers are quick to criticize extrapolations of an exponential trend on the basis that the trend is bound to run out of “resources”. The classic example is a species that happens upon a new habitat (e.g., rabbits in Australia): its numbers grow exponentially for a time, but then hit a limit when resources such as food and space run out.

But the resources underlying the exponential growth of an evolutionary process are relatively unbounded:

The (ever growing) order of the evolutionary process itself. Each stage of evolution provides more powerful tools for the next. In biological evolution, the advent of DNA allowed more powerful and faster evolutionary “experiments”. Later, setting the “designs” of animal body plans during the Cambrian explosion allowed rapid evolutionary development of other body organs such as the brain. Or to take a more recent example, the advent of computer assisted design tools allows rapid development of the next generation of computers.

The “chaos” of the environment in which the evolutionary process takes place and which provides the options for further diversity. In biological evolution, diversity enters the process in the form of mutations and ever changing environmental conditions. In technological evolution, human ingenuity combined with ever changing market conditions keep the process of innovation going.

The maximum potential of matter and energy to contain intelligent processes is a valid issue. But according to my models, we won’t approach those limits during this century (but this will become an issue within a couple of centuries).

We also need to distinguish between the “S” curve (an “S” stretched to the right, comprising very slow, virtually unnoticeable growth — followed by very rapid growth — followed by a flattening out as the process approaches an asymptote) that is characteristic of any specific technological paradigm and the continuing exponential growth that is characteristic of the ongoing evolutionary process of technology. Specific paradigms, such as Moore’s Law, do ultimately reach levels at which exponential growth is no longer feasible. Thus Moore’s Law is an S curve. But the growth of computation is an ongoing exponential (at least until we “saturate” the Universe with the intelligence of our human-machine civilization, but that will not be a limit in this coming century). In accordance with the law of accelerating returns, paradigm shift, also called innovation, turns the S curve of any specific paradigm into a continuing exponential. A new paradigm (e.g., three-dimensional circuits) takes over when the old paradigm approaches its natural limit. This has already happened at least four times in the history of computation. This difference also distinguishes the tool making of non-human species, in which the mastery of a tool-making (or using) skill by each animal is characterized by an abruptly ending S shaped learning curve, versus human-created technology, which has followed an exponential pattern of growth and acceleration since its inception.
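The relationship between individual S-curves and the overall exponential can be illustrated with a toy model. Everything numeric here is an assumption chosen for illustration (logistic curves, a new paradigm every 10 time units, each with a ceiling 10 times higher than the last); it is a sketch of the idea, not a fit to the data in the charts.

```python
import math


def logistic(t, midpoint, ceiling, steepness=1.0):
    """One paradigm: slow start, rapid growth, then saturation at `ceiling`."""
    return ceiling / (1.0 + math.exp(-steepness * (t - midpoint)))


def cascade(t, n_paradigms=5, spacing=10.0, gain=10.0):
    """Sum of S-curves; paradigm k is centred at spacing*k with ceiling gain**k."""
    return sum(
        logistic(t, midpoint=spacing * k, ceiling=gain ** k)
        for k in range(1, n_paradigms + 1)
    )


# Sample halfway between paradigm midpoints, where the older curve has flattened.
samples = [cascade(10.0 * k + 5.0) for k in range(1, 5)]
ratios = [later / earlier for earlier, later in zip(samples, samples[1:])]

print("single paradigm, long after its midpoint:", round(logistic(100.0, 10.0, 10.0), 3))
print("growth per paradigm in the cascade:", [round(r, 2) for r in ratios])
```

Each single curve saturates at its own ceiling, yet the sum sampled between successive paradigms keeps growing by a factor close to the chosen gain, which is what a continuing exponential looks like.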

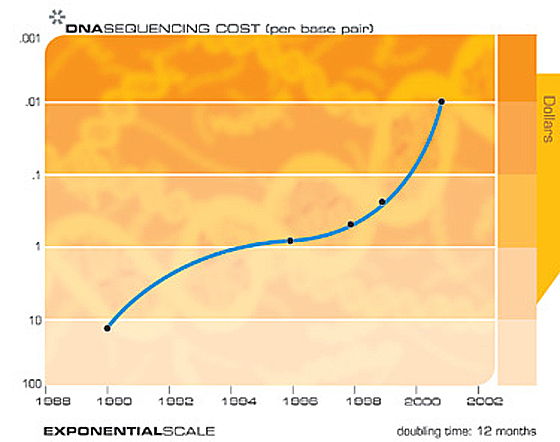

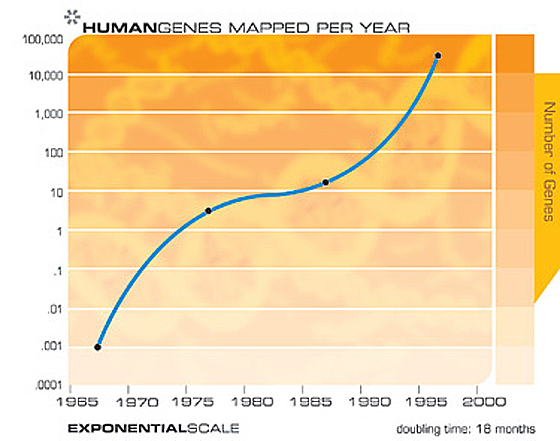

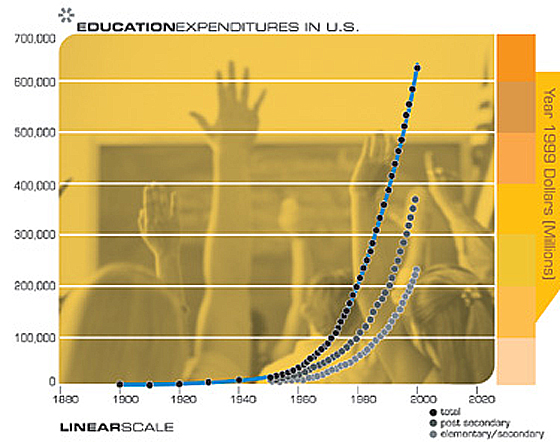

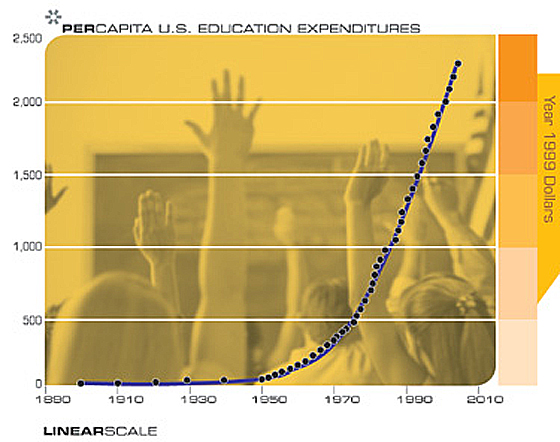

DNA Sequencing, Memory, Communications, the Internet, and Miniaturization

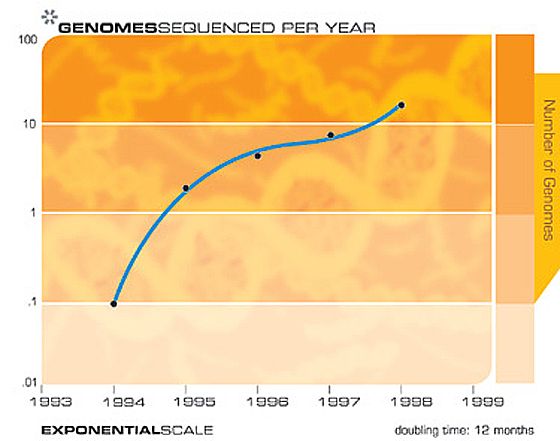

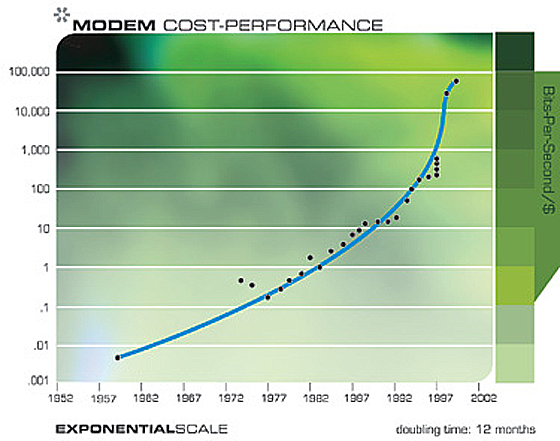

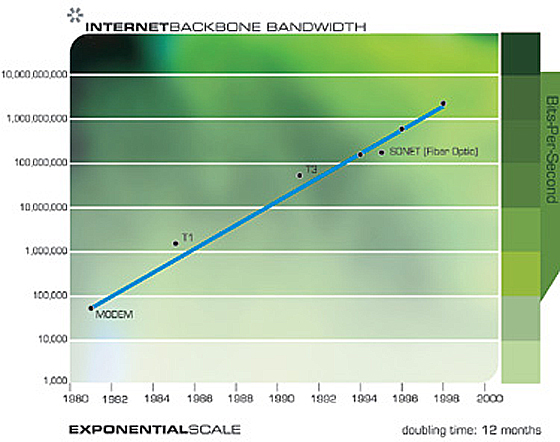

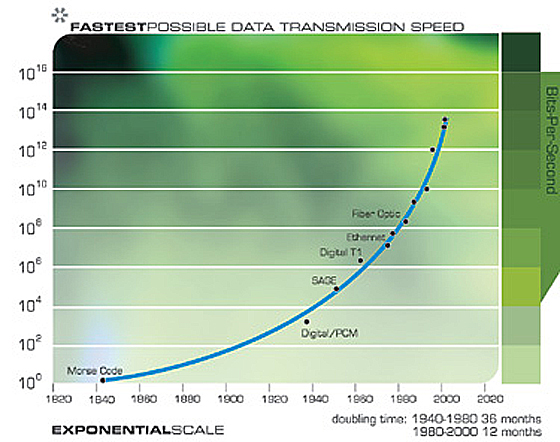

This “law of accelerating returns” applies to all of technology, indeed to any true evolutionary process, and can be measured with remarkable precision in information-based technologies. There are a great many examples of the exponential growth implied by the law of accelerating returns in technologies as varied as DNA sequencing, communication speeds, electronics of all kinds, and even in the rapidly shrinking size of technology. The Singularity results not from the exponential explosion of computation alone, but rather from the interplay and myriad synergies that will result from manifold intertwined technological revolutions. Also, keep in mind that every point on the exponential growth curves underlying this panoply of technologies (see the graphs below) represents an intense human drama of innovation and competition. It is remarkable therefore that these chaotic processes result in such smooth and predictable exponential trends.

For example, when the human genome scan started fourteen years ago, critics pointed out that given the speed with which the genome could then be scanned, it would take thousands of years to finish the project. Yet the fifteen-year project was nonetheless completed slightly ahead of schedule.

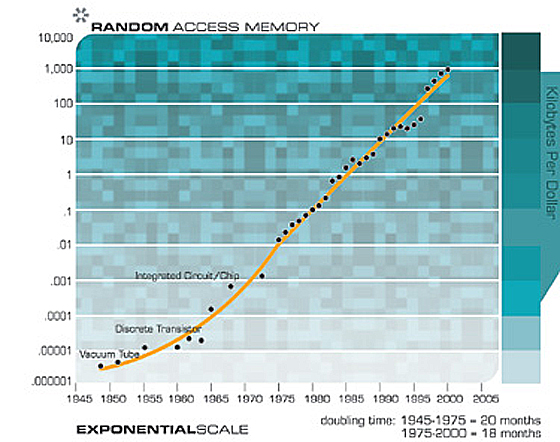

Of course, we expect to see exponential growth in electronic memories such as RAM.

Notice How Exponential Growth Continued through Paradigm Shifts from Vacuum Tubes to Discrete Transistors to Integrated Circuits

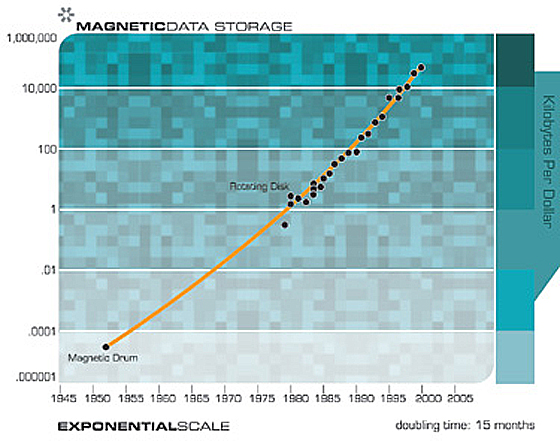

However, growth in magnetic memory is not primarily a matter of Moore’s law, but includes advances in mechanical and electromagnetic systems.

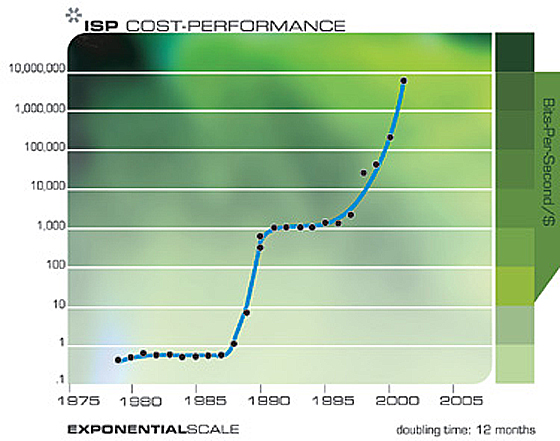

Exponential growth in communications technology has been even more explosive than in computation and is no less significant in its implications. Again, this progression involves far more than just shrinking transistors on an integrated circuit, but includes accelerating advances in fiber optics, optical switching, electromagnetic technologies, and others.

Notice Cascade of smaller “S” Curves

Note that in the above two charts we can actually see the progression of “S” curves: the acceleration fostered by a new paradigm, followed by a leveling off as the paradigm runs out of steam, followed by renewed acceleration through paradigm shift.

The following two charts show the overall growth of the Internet based on the number of hosts. These two charts plot the same data, but one is on an exponential axis and the other is linear. As I pointed out earlier, whereas technology progresses in the exponential domain, we experience it in the linear domain. So from the perspective of most observers, nothing was happening until the mid 1990s when seemingly out of nowhere, the world wide web and email exploded into view. But the emergence of the Internet into a worldwide phenomenon was readily predictable much earlier by examining the exponential trend data.

Notice how the explosion of the Internet appears to be a surprise from the Linear Chart, but was perfectly predictable from the Exponential Chart.

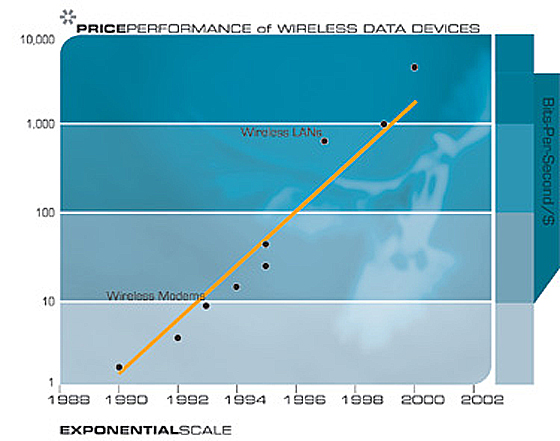
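The contrast between the two views can be sketched with a made-up series. The numbers below are hypothetical (a quantity that simply doubles every year for 20 years), not the Internet host counts plotted in the charts; the names `hosts`, `linear_fraction`, and `log_steps` are illustrative only.

```python
import math

# Hypothetical series: a quantity that doubles every year for 20 years.
# These are illustrative numbers, not the host counts plotted above.
years = list(range(21))
hosts = [2 ** y for y in years]

# Linear view: each value as a fraction of the final value.
linear_fraction = [h / hosts[-1] for h in hosts]

# Exponential (logarithmic) view: log10 of each value, and the step
# between consecutive years.
log_values = [math.log10(h) for h in hosts]
log_steps = [b - a for a, b in zip(log_values, log_values[1:])]

for y in (0, 5, 10, 15, 20):
    print(f"year {y:2d}: linear {linear_fraction[y]:.6f}  log10 {log_values[y]:.2f}")
```

On the linear view, the value at year 10 is still under 0.1% of the final value, so the curve looks flat for half the period. On the logarithmic view every yearly step is the same size, which is why the trend can be extrapolated long before it becomes visible on a linear chart.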

Ultimately we will get away from the tangle of wires in our cities and in our lives through wireless communication, the power of which is doubling every 10 to 11 months.

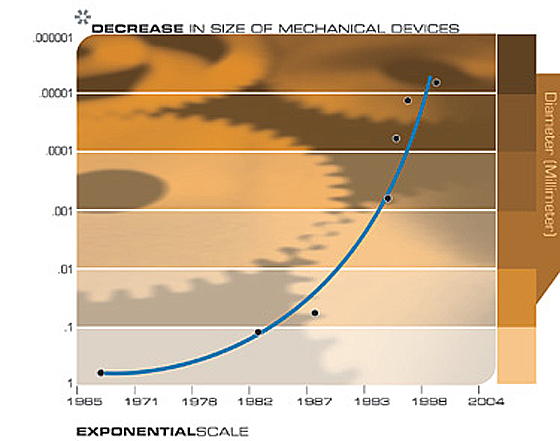

Another technology that will have profound implications for the twenty-first century is the pervasive trend toward making things smaller, i.e., miniaturization. The salient implementation sizes of a broad range of technologies, both electronic and mechanical, are shrinking, also at a double exponential rate. At present, we are shrinking technology by a factor of approximately 5.6 per linear dimension per decade.
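To give a feel for the quoted rate, here is a small sketch of what a factor of 5.6 per linear dimension per decade implies. It assumes the rate stays constant, which is a simplification (the text describes the trend as double exponential), and the 1000 nm starting feature is a hypothetical example.

```python
import math

SHRINK_PER_DECADE = 5.6  # linear shrink factor per decade, from the text

# Years for a feature's linear size to halve, if the rate stayed constant.
halving_years = 10 * math.log(2) / math.log(SHRINK_PER_DECADE)

# The same linear shrink applied in three dimensions reduces volume faster.
volume_shrink_per_decade = SHRINK_PER_DECADE ** 3

def size_after(initial_size: float, years: float) -> float:
    """Linear size after `years`, assuming a constant 5.6x shrink per decade."""
    return initial_size / SHRINK_PER_DECADE ** (years / 10)

print(f"halving time: {halving_years:.1f} years")
print(f"volume shrink per decade: {volume_shrink_per_decade:.0f}x")
print(f"1000 nm feature after 30 years: {size_after(1000, 30):.1f} nm")
```

At this rate linear dimensions halve roughly every four years, and a device's volume shrinks by a factor of about 176 per decade.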

The Exponential Growth of Computation Revisited

If we view the exponential growth of computation in its proper perspective as one example of the pervasiveness of the exponential growth of information based technology, that is, as one example of many of the law of accelerating returns, then we can confidently predict its continuation.

The Law of Accelerating Returns Applied to the Growth of Computation

The following provides a brief overview of the law of accelerating returns as it applies to the double exponential growth of computation. This model considers the impact of the growing power of the technology to foster its own next generation. For example, with more powerful computers and related technology, we have the tools and the knowledge to design yet more powerful computers, and to do so more quickly.

Note that the data for the year 2000 and beyond assume neural net connection calculations, as it is expected that this type of calculation will ultimately dominate, particularly in emulating human brain functions. This type of calculation is less expensive than conventional (e.g., Pentium III / IV) calculations by a factor of at least 100 (particularly if implemented using digitally controlled analog electronics, which would correspond well to the brain’s digitally controlled analog electrochemical processes). A factor of 100 translates into approximately 6 years (today) and less than 6 years later in the twenty-first century.

My estimate of brain capacity is 100 billion neurons times an average 1,000 connections per neuron (with the calculations taking place primarily in the connections) times 200 calculations per second. Although these estimates are conservatively high, one can find higher and lower estimates. However, even much higher (or lower) estimates by orders of magnitude only shift the prediction by a relatively small number of years.

Some prominent dates from this analysis include the following:

We achieve one Human Brain capability (2 * 10^16 cps) for $1,000 around the year 2023.

We achieve one Human Brain capability (2 * 10^16 cps) for one cent around the year 2037.

We achieve one Human Race capability (2 * 10^26 cps) for $1,000 around the year 2049.

We achieve one Human Race capability (2 * 10^26 cps) for one cent around the year 2059.

The Model considers the following variables:

V: Velocity (i.e., power) of computing (measured in CPS/unit cost)

W: World Knowledge as it pertains to designing and building computational devices

t: Time

The assumptions of the model are:

1. $V = C_1 \cdot W$

In other words, computer power is a linear function of the knowledge of how to build computers. This is actually a conservative assumption. In general, innovations improve V (computer power) by a multiple, not in an additive way. Independent innovations multiply each other’s effect. For example, a circuit advance such as CMOS, a more efficient IC wiring methodology, and a processor innovation such as pipelining all increase V by independent multiples.

2. $W = C_2 \int_0^t V \, dt$

In other words, W (knowledge) is cumulative, and the instantaneous increment to knowledge is proportional to V.

This gives us:

$$W = C_1 C_2 \int_0^t W \, dt$$

$$W = C_1 C_2 \, C_3^{C_4 t}$$

$$V = C_1^2 \, C_2 \, C_3^{C_4 t}$$

Simplifying the constants, we get:

$$V = C_a \, C_b^{C_c t}$$

So this is a formula for “accelerating” (i.e., exponentially growing) returns, a “regular Moore’s Law”.
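The step from the two assumptions to an exponential can be sketched explicitly. Differentiating assumption 2 turns the integral into a differential equation. The starting value $W_0 > 0$ is an added assumption here, since the model as stated does not give an initial condition.

$$
\begin{aligned}
\frac{dW}{dt} &= C_2 V = C_1 C_2 W \\
W(t) &= W_0 \, e^{C_1 C_2 t} \\
V(t) &= C_1 W_0 \, e^{C_1 C_2 t}
\end{aligned}
$$

This has the form $V = C_a \, C_b^{C_c t}$ with $C_a = C_1 W_0$, $C_b = e$, and $C_c = C_1 C_2$. The doubling time, $\ln 2 / (C_1 C_2)$, is constant in this version of the model.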

As I mentioned above, the data shows exponential growth in the rate of exponential growth. (We doubled computer power every three years early in the twentieth century, every two years in the middle of the century, and close to every one year during the 1990s.)

Let’s factor in another exponential phenomenon, which is the growing resources for computation. Not only is each (constant cost) device getting more powerful as a function of W, but the resources deployed for computation are also growing exponentially.

We now have:

N: Expenditures for computation

$V = C_1 \cdot W$ (as before)

$N = C_4^{C_5 t}$ (Expenditure for computation is growing at its own exponential rate)

$W = C_2 \int_0^t (N \cdot V) \, dt$

As before, world knowledge is accumulating, and the instantaneous increment is proportional to the amount of computation, which equals the resources deployed for computation (N) * the power of each (constant cost) device.

This gives us:

$$W = C_1 C_2 \int_0^t \left( C_4^{C_5 t} \, W \right) dt$$

$$W = C_1 C_2 \left( C_3^{C_6 t} \right)^{C_7 t}$$

$$V = C_1^2 \, C_2 \left( C_3^{C_6 t} \right)^{C_7 t}$$

Simplifying the constants, we get:

$$V = C_a \left( C_b^{C_c t} \right)^{C_d t}$$

This is a double exponential — an exponential curve in which the rate of exponential growth is growing at a different exponential rate.
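As a cross-check on the description above, the integral equation for $W$ can also be solved directly. This is a sketch under an added assumption: a nonzero starting level of knowledge $W_0$, which the model does not state. The constant $K$ is introduced here only for brevity.

$$
\begin{aligned}
\frac{dW}{dt} &= C_2 N V = C_1 C_2 \, C_4^{C_5 t} \, W \\
\ln \frac{W(t)}{W_0} &= C_1 C_2 \int_0^t C_4^{C_5 s} \, ds = K \left( C_4^{C_5 t} - 1 \right), \qquad K = \frac{C_1 C_2}{C_5 \ln C_4} \\
V(t) &= C_1 W_0 \exp\!\left[ K \left( C_4^{C_5 t} - 1 \right) \right]
\end{aligned}
$$

The exponent itself grows exponentially in $t$. Equivalently, the instantaneous growth rate $\frac{d}{dt} \ln V = C_1 C_2 \, C_4^{C_5 t}$ rises at the same exponential rate as the expenditure $N$, which is the sense in which the rate of exponential growth is itself growing exponentially.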

Now let’s consider real-world data. Considering the data for actual calculating devices and computers during the twentieth century:

CPS/$1K: Calculations Per Second for $1,000

Twentieth century computing data matches:

$$\text{CPS/\$1K} = 10^{\,6.00 \left( \frac{20.40}{6.00} \right)^{(\text{Year} - 1900)/100} \,-\, 11.00}$$

We can determine the growth rate over a period of time:

$$\text{Growth Rate} = 10^{\frac{\log_{10}(\text{CPS/\$1K for Current Year}) \,-\, \log_{10}(\text{CPS/\$1K for Previous Year})}{\text{Current Year} \,-\, \text{Previous Year}}}$$
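The fitted curve and the growth rate definition above can be evaluated directly. The sketch below transcribes both; the function names (`cps_per_1k`, `growth_rate`, `doubling_time`) are illustrative, and the doubling-time helper is an added convenience, not part of the original formulas.

```python
import math

def cps_per_1k(year: float) -> float:
    """Calculations per second per $1,000, using the fitted formula above."""
    exponent = 6.00 * (20.40 / 6.00) ** ((year - 1900) / 100) - 11.00
    return 10 ** exponent

def growth_rate(prev_year: float, curr_year: float) -> float:
    """Average yearly growth factor between two years (the Growth Rate formula)."""
    log_change = math.log10(cps_per_1k(curr_year)) - math.log10(cps_per_1k(prev_year))
    return 10 ** (log_change / (curr_year - prev_year))

def doubling_time(prev_year: float, curr_year: float) -> float:
    """Years needed to double CPS/$1K at the growth rate of the given interval."""
    return math.log(2) / math.log(growth_rate(prev_year, curr_year))

for year in (1900, 1950, 2000):
    print(year, f"{cps_per_1k(year):.3g} cps/$1K,",
          f"doubling every {doubling_time(year - 1, year):.2f} years")
```

Under this fit, the doubling time shortens from roughly four years around 1900 to a little over two years around 1950 and a little over one year around 2000, broadly in line with the acceleration described earlier.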

Human Brain = 100 Billion (10^11) neurons * 1000 (10^3) Connections/Neuron * 200 (2 * 10^2) Calculations Per Second Per Connection = 2 * 10^16 Calculations Per Second

Human Race = 10 Billion (10^10) Human Brains = 2 * 10^26 Calculations Per Second

These formulas produce the graph above.
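The milestone dates listed earlier can be reproduced by inverting the fitted CPS/$1K formula. The sketch below assumes that cost scales linearly, so one cent buys 1/100,000 of what $1,000 buys; the function names are illustrative. It also shifts the brain estimate by a factor of 100 in each direction to show how little the date moves.

```python
import math

BRAIN_CPS = 1e11 * 1e3 * 200   # neurons * connections * calcs/sec = 2e16
RACE_CPS = 1e10 * BRAIN_CPS    # 10 billion brains = 2e26

def year_reached(cps_per_1k: float) -> float:
    """Invert the fitted CPS/$1K formula to find the year a level is reached."""
    ratio = (math.log10(cps_per_1k) + 11.00) / 6.00
    return 1900 + 100 * math.log(ratio) / math.log(20.40 / 6.00)

def year_affordable(target_cps: float, budget_dollars: float) -> float:
    """Year in which `target_cps` can be bought for `budget_dollars`
    (assumes cost scales linearly with computing power)."""
    return year_reached(target_cps * 1000 / budget_dollars)

milestones = {
    "brain for $1,000": year_affordable(BRAIN_CPS, 1000),
    "brain for one cent": year_affordable(BRAIN_CPS, 0.01),
    "race for $1,000": year_affordable(RACE_CPS, 1000),
    "race for one cent": year_affordable(RACE_CPS, 0.01),
}
for label, year in milestones.items():
    print(f"{label}: {year:.1f}")

# Sensitivity: shift the brain estimate by a factor of 100 either way.
for factor in (0.01, 1, 100):
    shifted = year_affordable(BRAIN_CPS * factor, 1000)
    print(f"brain estimate x{factor}: {shifted:.1f}")
```

The four crossings fall in 2023, 2037, 2049, and 2059, matching the dates above, and a hundredfold change in the brain estimate moves the $1,000 date by only about six years in either direction.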

Already, IBM’s Blue Gene supercomputer, now being built and scheduled to be completed by 2005, is projected to provide 1 million billion calculations per second (i.e., one billion megaflops). This is already one twentieth of the capacity of the human brain, which I estimate at a conservatively high 20 million billion calculations per second (100 billion neurons times 1,000 connections per neuron times 200 calculations per second per connection). In line with my earlier predictions, supercomputers will achieve one human brain capacity by 2010, and personal computers will do so by around 2020. By 2030, it will take a village of human brains (around a thousand) to match $1,000 of computing. By 2050, $1,000 of computing will equal the processing power of all human brains on Earth.

Of course, this only includes those brains still using carbon-based neurons. While human neurons are wondrous creations in a way, we wouldn’t (and don’t) design computing circuits the same way. Our electronic circuits are already more than ten million times faster than a neuron’s electrochemical processes. Most of the complexity of a human neuron is devoted to maintaining its life support functions, not its information processing capabilities. Ultimately, we will need to port our mental processes to a more suitable computational substrate. Then our minds won’t have to stay so small, being constrained as they are today to a mere hundred trillion neural connections each operating at a ponderous 200 digitally controlled analog calculations per second.

The Software of Intelligence

So far, I’ve been talking about the hardware of computing. The software is even more salient. One of the principal assumptions underlying the expectation of the Singularity is the ability of nonbiological mediums to emulate the richness, subtlety, and depth of human thinking. Achieving the computational capacity of the human brain, or even villages and nations of human brains will not automatically produce human levels of capability. By human levels I include all the diverse and subtle ways in which humans are intelligent, including musical and artistic aptitude, creativity, physically moving through the world, and understanding and responding appropriately to emotion. The requisite hardware capacity is a necessary but not sufficient condition. The organization and content of these resources — the software of intelligence — is also critical.

Before addressing this issue, it is important to note that once a computer achieves a human level of intelligence, it will necessarily soar past it. A key advantage of nonbiological intelligence is that machines can easily share their knowledge. If I learn French, or read War and Peace, I can’t readily download that learning to you. You have to acquire that scholarship the same painstaking way that I did. My knowledge, embedded in a vast pattern of neurotransmitter concentrations and interneuronal connections, cannot be quickly accessed or transmitted. But we won’t leave out quick downloading ports in our nonbiological equivalents of human neuron clusters. When one computer learns a skill or gains an insight, it can immediately share that wisdom with billions of other machines.

As a contemporary example, we spent years teaching one research computer how to recognize continuous human speech. We exposed it to thousands of hours of recorded speech, corrected its errors, and patiently improved its performance. Finally, it became quite adept at recognizing speech (I dictated most of my recent book to it). Now if you want your own personal computer to recognize speech, it doesn’t have to go through the same process; you can just download the fully trained patterns in seconds. Ultimately, billions of nonbiological entities can be the master of all human and machine acquired knowledge.

In addition, computers are potentially millions of times faster than human neural circuits. A computer can also remember billions or even trillions of facts perfectly, while we are hard pressed to remember a handful of phone numbers. The combination of human level intelligence in a machine with a computer’s inherent superiority in the speed, accuracy, and sharing ability of its memory will be formidable.

There are a number of compelling scenarios to achieve higher levels of intelligence in our computers, and ultimately human levels and beyond. We will be able to evolve and train a system combining massively parallel neural nets with other paradigms to understand language and model knowledge, including the ability to read and model the knowledge contained in written documents. Unlike many contemporary “neural net” machines, which use mathematically simplified models of human neurons, some contemporary neural nets are already using highly detailed models of human neurons, including detailed nonlinear analog activation functions and other relevant details.

Although the ability of today’s computers to extract and learn knowledge from natural language documents is limited, their capabilities in this domain are improving rapidly. Computers will be able to read on their own, understanding and modeling what they have read, by the second decade of the twenty-first century. We can then have our computers read all of the world’s literature — books, magazines, scientific journals, and other available material. Ultimately, the machines will gather knowledge on their own by venturing out on the web, or even into the physical world, drawing from the full spectrum of media and information services, and sharing knowledge with each other (which machines can do far more easily than their human creators).

Reverse Engineering the Human Brain

The most compelling scenario for mastering the software of intelligence is to tap into the blueprint of the best example we can get our hands on of an intelligent process. There is no reason why we cannot reverse engineer the human brain, and essentially copy its design. Although it took its original designer several billion years to develop, it’s readily available to us, and not (yet) copyrighted. Although there’s a skull around the brain, it is not hidden from our view.

The most immediately accessible way to accomplish this is through destructive scanning: we take a frozen brain, preferably one frozen just slightly before rather than slightly after it was going to die anyway, and examine one brain layer — one very thin slice — at a time. We can readily see every neuron and every connection and every neurotransmitter concentration represented in each synapse-thin layer.

Human brain scanning has already started. A condemned killer allowed his brain and body to be scanned and you can access all 10 billion bytes of him on the Internet.

He has a 25 billion byte female companion on the site as well in case he gets lonely. This scan is not high enough in resolution for our purposes, but then, we probably don’t want to base our templates of machine intelligence on the brain of a convicted killer, anyway.

Scanning a frozen brain is feasible today, albeit not yet at a sufficient speed or bandwidth. But again, the law of accelerating returns will provide the requisite speed of scanning, just as it did for the human genome scan. Carnegie Mellon University’s Andreas Nowatzyk plans to scan the nervous system of the brain and body of a mouse with a resolution of less than 200 nanometers, which is getting very close to the resolution needed for reverse engineering.

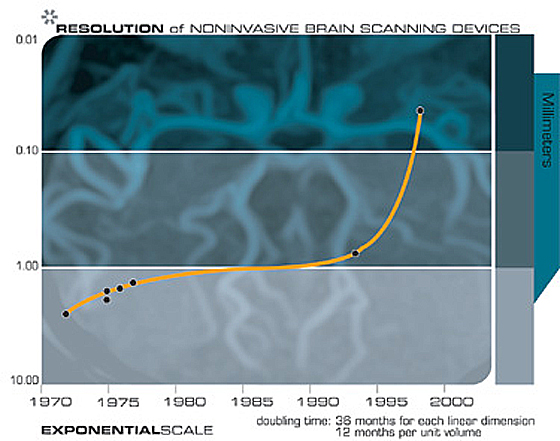

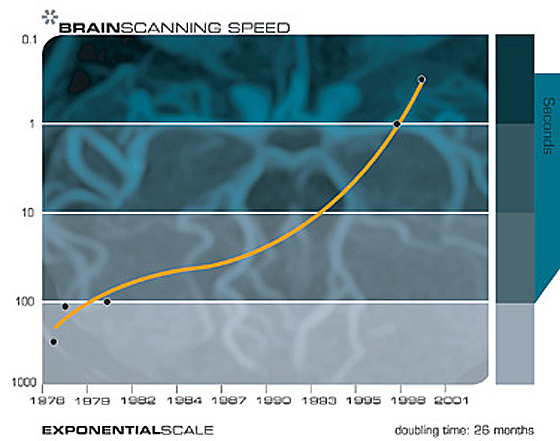

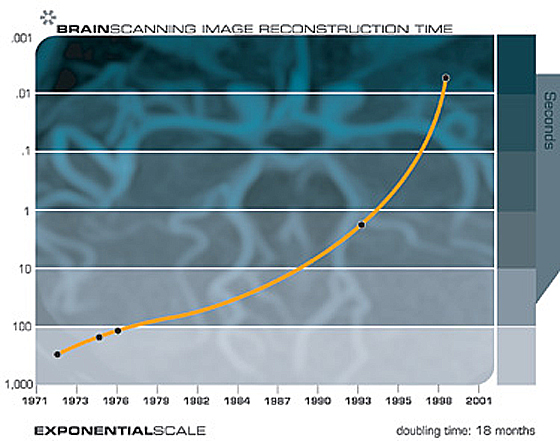

We also have noninvasive scanning techniques today, including high-resolution magnetic resonance imaging (MRI) scans, optical imaging, near-infrared scanning, and other technologies which are capable in certain instances of resolving individual somas, or neuron cell bodies. Brain scanning technologies are also increasing their resolution with each new generation, just what we would expect from the law of accelerating returns. Future generations will enable us to resolve the connections between neurons and to peer inside the synapses and record the neurotransmitter concentrations.

We can peer inside someone’s brain today with noninvasive scanners, which are increasing their resolution with each new generation of this technology. There are a number of technical challenges in accomplishing this, including achieving suitable resolution, bandwidth, lack of vibration, and safety. For a variety of reasons it is easier to scan the brain of someone recently deceased than of someone still living. It is easier to get someone deceased to sit still, for one thing. But noninvasively scanning a living brain will ultimately become feasible as MRI, optical, and other scanning technologies continue to improve in resolution and speed.

Scanning from Inside

Although noninvasive means of scanning the brain from outside the skull are rapidly improving, the most practical approach to capturing every salient neural detail will be to scan it from inside. By 2030, “nanobot” (i.e., nano robot) technology will be viable, and brain scanning will be a prominent application. Nanobots are robots that are the size of human blood cells, or even smaller. Billions of them could travel through every brain capillary and scan every relevant feature from up close. Using high speed wireless communication, the nanobots would communicate with each other, and with other computers that are compiling the brain scan database (in other words, the nanobots will all be on a wireless local area network).

This scenario involves only capabilities that we can touch and feel today. We already have technology capable of producing very high resolution scans, provided that the scanner is physically proximate to the neural features. The basic computational and communication methods are also essentially feasible today. The primary features that are not yet practical are nanobot size and cost. As I discussed above, we can project the exponentially declining cost of computation, and the rapidly declining size of both electronic and mechanical technologies. We can conservatively expect, therefore, the requisite nanobot technology by around 2030. Because of its ability to place each scanner in very close physical proximity to every neural feature, nanobot-based scanning will be more practical than scanning the brain from outside.

How to Use Your Brain Scan

How will we apply the thousands of trillions of bytes of information derived from each brain scan? One approach is to use the results to design more intelligent parallel algorithms for our machines, particularly those based on one of the neural net paradigms. With this approach, we don’t have to copy every single connection. There is a great deal of repetition and redundancy within any particular brain region. Although the information contained in a human brain would require thousands of trillions of bytes of information (on the order of 100 billion neurons times an average of 1,000 connections per neuron, each with multiple neurotransmitter concentrations and connection data), the design of the brain is characterized by a human genome of only about a billion bytes.

Furthermore, most of the genome is redundant, so the initial design of the brain is characterized by approximately one hundred million bytes, about the size of Microsoft Word. Of course, the complexity of our brains greatly increases as we interact with the world (by a factor of more than ten million). Because of the highly repetitive patterns found in each specific brain region, it is not necessary to capture each detail in order to reverse engineer the significant digital-analog algorithms. With this information, we can design simulated nets that operate similarly. There are already multiple efforts under way to scan the human brain and apply the insights derived to the design of intelligent machines.
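The orders of magnitude in this comparison can be checked with a few lines of arithmetic. The neuron, connection, and genome figures are taken from the text; `BYTES_PER_CONNECTION` is an assumption chosen so that the total lands in the range of "thousands of trillions of bytes".

```python
NEURONS = 100e9                 # from the text
CONNECTIONS_PER_NEURON = 1_000  # from the text
BYTES_PER_CONNECTION = 10       # assumption: chosen so the total is on the
                                # order of thousands of trillions of bytes

GENOME_BYTES = 1e9              # "about a billion bytes"
COMPRESSED_DESIGN_BYTES = 1e8   # "approximately one hundred million bytes"

connections = NEURONS * CONNECTIONS_PER_NEURON
brain_state_bytes = connections * BYTES_PER_CONNECTION
genome_redundancy = GENOME_BYTES / COMPRESSED_DESIGN_BYTES
expansion = brain_state_bytes / COMPRESSED_DESIGN_BYTES

print(f"connections: {connections:.0e}")
print(f"brain state: {brain_state_bytes:.0e} bytes")
print(f"genome redundancy: {genome_redundancy:.0f}x")
print(f"expansion over compressed design: {expansion:.0e}x")
```

With these inputs the mature brain holds about ten million times more information than its compressed genetic design, which is the ratio quoted above.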

The pace of brain reverse engineering is only slightly behind the availability of the brain scanning and neuron structure information. A contemporary example is a comprehensive model of a significant portion of the human auditory processing system that Lloyd Watts has developed from both neurobiology studies of specific neuron types and brain interneuronal connection information. Watts’ model comprises five parallel paths and includes the actual intermediate representations of auditory information at each stage of neural processing. Watts has implemented his model as real-time software which can locate and identify sounds with many of the same properties as human hearing. Although a work in progress, the model illustrates the feasibility of converting neurobiological models and brain connection data into working simulations. Also, as Hans Moravec and others have speculated, these efficient simulations require about 1,000 times less computation than the theoretical potential of the biological neurons being simulated.

Reverse Engineering the Human Brain: Five Parallel Auditory Pathways

Chart by Lloyd Watts

Cochlea: Sense organ of hearing. 30,000 fibers convert motion of the stapes into a spectro-temporal representation of sound.

MC: Multipolar Cells. Measure spectral energy.

GBC: Globular Bushy Cells. Relays spikes from the auditory nerve to the Lateral Superior Olivary Complex (includes LSO and MSO). Encoding of timing and amplitude of signals for binaural comparison of level.

SBC: Spherical Bushy Cells. Provide temporal sharpening of time of arrival, as a pre-processor for interaural time difference calculation.

OC: Octopus Cells. Detection of transients.

DCN: Dorsal Cochlear Nucleus. Detection of spectral edges and calibrating for noise levels.

VNTB: Ventral Nucleus of the Trapezoid Body. Feedback signals to modulate outer hair cell function in the cochlea.

VNLL, PON: Ventral Nucleus of the Lateral Lemniscus, Peri-Olivary Nuclei. Processing transients from the Octopus Cells.

MSO: Medial Superior Olive. Computing inter-aural time difference (difference in time of arrival between the two ears, used to tell where a sound is coming from).

LSO: Lateral Superior Olive. Also involved in computing inter-aural level difference.

ICC: Central Nucleus of the Inferior Colliculus. The site of major integration of multiple representations of sound.

ICx: Exterior Nucleus of the Inferior Colliculus. Further refinement of sound localization.

SC: Superior Colliculus. Location of auditory/visual merging.

MGB: Medial Geniculate Body. The auditory portion of the thalamus.

LS: Limbic System. Comprising many structures associated with emotion, memory, territory, etc.

AC: Auditory Cortex.

The brain is not one huge “tabula rasa” (i.e., undifferentiated blank slate), but rather an intricate and intertwined collection of hundreds of specialized regions. The process of “peeling the onion” to understand these interleaved regions is well underway. As the requisite neuron models and brain interconnection data become available, detailed and implementable models such as the auditory example above will be developed for all brain regions.

After the algorithms of a region are understood, they can be refined and extended before being implemented in synthetic neural equivalents. For one thing, they can be run on a computational substrate that is already more than ten million times faster than neural circuitry. And we can also throw in the methods for building intelligent machines that we already understand.

Downloading the Human Brain

A more controversial application than this scanning-the-brain-to-understand-it scenario is scanning-the-brain-to-download-it. Here we scan someone’s brain to map the locations, interconnections, and contents of all the somas, axons, dendrites, presynaptic vesicles, neurotransmitter concentrations, and other neural components and levels. Its entire organization can then be re-created on a neural computer of sufficient capacity, including the contents of its memory.

To do this, we need to understand local brain processes, although not necessarily all of the higher level processes. Scanning a brain with sufficient detail to download it may sound daunting, but so did the human genome scan. All of the basic technologies exist today, just not with the requisite speed, cost, and size, but these are the attributes that are improving at a double exponential pace.

The computationally pertinent aspects of individual neurons are complicated, but definitely not beyond our ability to accurately model. For example, Ted Berger and his colleagues at Hedco Neurosciences have built integrated circuits that precisely match the digital and analog information processing characteristics of neurons, including clusters with hundreds of neurons. Carver Mead and his colleagues at CalTech have built a variety of integrated circuits that emulate the digital-analog characteristics of mammalian neural circuits.

A recent experiment at San Diego’s Institute for Nonlinear Science demonstrates the potential for electronic neurons to precisely emulate biological ones. Neurons (biological or otherwise) are a prime example of what is often called “chaotic computing”. Each neuron acts in an essentially unpredictable fashion. When an entire network of neurons receives input (from the outside world or from other networks of neurons), the signaling amongst them appears at first to be frenzied and random. Over time, typically a fraction of a second or so, the chaotic interplay of the neurons dies down, and a stable pattern emerges. This pattern represents the “decision” of the neural network. If the neural network is performing a pattern recognition task (which, incidentally, comprises the bulk of the activity in the human brain), then the emergent pattern represents the appropriate recognition.

So the question addressed by the San Diego researchers was whether electronic neurons could engage in this chaotic dance alongside biological ones. They hooked up their artificial neurons with those from spiny lobsters in a single network, and their hybrid biological-nonbiological network performed in the same way (i.e., chaotic interplay followed by a stable emergent pattern) and with the same type of results as an all biological net of neurons. Essentially, the biological neurons accepted their electronic peers. It indicates that their mathematical model of these neurons was reasonably accurate.
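The idea of a network settling from a disordered starting state into a stable pattern that represents its "decision" can be illustrated with a toy model. The sketch below uses a Hopfield-style network, a textbook model swapped in for illustration; it is not the San Diego group's neuron model, it is deterministic rather than chaotic, and the two stored patterns are hypothetical.

```python
def train(patterns):
    """Hebbian weights for a Hopfield-style network (zero self-connections)."""
    n = len(patterns[0])
    return [[0 if i == j else sum(p[i] * p[j] for p in patterns)
             for j in range(n)] for i in range(n)]

def settle(weights, state, max_sweeps=10):
    """Update units one at a time until a full sweep changes nothing."""
    state = list(state)
    for sweep in range(1, max_sweeps + 1):
        changed = False
        for i, row in enumerate(weights):
            field = sum(w * s for w, s in zip(row, state))
            new_value = 1 if field >= 0 else -1
            if new_value != state[i]:
                state[i] = new_value
                changed = True
        if not changed:
            return state, sweep
    return state, max_sweeps

# Two hypothetical stored patterns of +1/-1 activity.
pattern_a = [1] * 8 + [-1] * 8
pattern_b = [1, -1] * 8
weights = train([pattern_a, pattern_b])

# Noisy input: pattern_a with three units flipped.
noisy = list(pattern_a)
for i in (0, 5, 12):
    noisy[i] = -noisy[i]

settled, sweeps = settle(weights, noisy)
print("recovered pattern_a:", settled == pattern_a, "after", sweeps, "sweeps")
```

Starting from the corrupted input, the units pull each other back to the stored pattern in the first sweep, and the second sweep confirms that nothing changes any more. The stable state plays the role of the emergent pattern that represents the recognition.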

There are many projects around the world which are creating nonbiological devices to recreate in great detail the functionality of human neuron clusters. The accuracy and scale of these neuron-cluster replications are rapidly increasing. We started with functionally equivalent recreations of single neurons, then clusters of tens, then hundreds, and now thousands. Scaling up technical processes at an exponential pace is what technology is good at.

As the computational power to emulate the human brain becomes available (we’re not there yet, but we will be there within a couple of decades), projects already under way to scan the human brain will be accelerated, with a view both to understanding the human brain in general and to providing a detailed description of the contents and design of specific brains. By the third decade of the twenty-first century, we will be in a position to create highly detailed and complete maps of all relevant features of all neurons, neural connections and synapses in the human brain, all of the neural details that play a role in the behavior and functionality of the brain, and to recreate these designs in suitably advanced neural computers.

Is the Human Brain Different from a Computer?

The answer depends on what we mean by the word “computer”. Certainly the brain uses very different methods from conventional contemporary computers. Most computers today are all digital and perform one (or perhaps a few) computations at a time at extremely high speed. In contrast, the human brain combines digital and analog methods with most computations performed in the analog domain. The brain is massively parallel, performing on the order of a hundred trillion computations at the same time, but at extremely slow speeds.

With regard to digital versus analog computing, we know that digital computing can be functionally equivalent to analog computing (although the reverse is not true), so we can perform all of the capabilities of a hybrid digital-analog network with an all digital computer. On the other hand, there is an engineering advantage to analog circuits in that analog computing is potentially thousands of times more efficient. An analog computation can be performed by a few transistors, or, in the case of mammalian neurons, specific electrochemical processes. A digital computation, in contrast, requires thousands or tens of thousands of transistors. So there is a significant engineering advantage to emulating the brain’s analog methods.

The massive parallelism of the human brain is the key to its pattern recognition abilities, which reflects the strength of human thinking. As I discussed above, mammalian neurons engage in a chaotic dance, and if the neural network has learned its lessons well, then a stable pattern will emerge reflecting the network’s decision. There is no reason why our nonbiological functionally equivalent recreations of biological neural networks cannot be built using these same principles, and indeed there are dozens of projects around the world that have succeeded in doing this. My own technical field is pattern recognition, and the projects that I have been involved in for over thirty years use this form of chaotic computing. Particularly successful examples are Carver Mead’s neural chips, which are highly parallel, use digital controlled analog computing, and are intended as functionally similar recreations of biological networks.

Objective and Subjective

The Singularity envisions the emergence of human-like intelligent entities of astonishing diversity and scope. Although these entities will be capable of passing the “Turing test” (i.e., able to fool humans into believing that they are human), the question arises as to whether these “people” are conscious, or just appear that way. To gain some insight as to why this is an extremely subtle question (albeit an ultimately important one) it is useful to consider some of the paradoxes that emerge from the concept of downloading specific human brains.

Although I anticipate that the most common application of the knowledge gained from reverse engineering the human brain will be creating more intelligent machines that are not necessarily modeled on specific biological human individuals, the scenario of scanning and reinstantiating all of the neural details of a specific person raises the most immediate questions of identity. Let’s consider the question of what we will find when we do this.

We have to consider this question on both the objective and subjective levels. “Objective” means everyone except me, so let’s start with that. Objectively, when we scan someone’s brain and reinstantiate their personal mind file into a suitable computing medium, the newly emergent “person” will appear to other observers to have very much the same personality, history, and memory as the person originally scanned. That is, once the technology has been refined and perfected. Like any new technology, it won’t be perfect at first. But ultimately, the scans and recreations will be very accurate and realistic.

Interacting with the newly instantiated person will feel like interacting with the original person. The new person will claim to be that same old person and will have a memory of having been that person. The new person will have all of the patterns of knowledge, skill, and personality of the original. We are already creating functionally equivalent recreations of neurons and neuron clusters with sufficient accuracy that biological neurons accept their nonbiological equivalents and work with them as if they were biological. There are no natural limits that prevent us from doing the same with the hundred billion neuron cluster of clusters we call the human brain.

Subjectively, the issue is more subtle and profound, but first we need to reflect on one additional objective issue: our physical self.

The Importance of Having a Body

Consider how many of our thoughts and thinking are directed toward our body and its survival, security, nutrition, and image, not to mention affection, sexuality, and reproduction. Many, if not most, of the goals we attempt to advance using our brains have to do with our bodies: protecting them, providing them with fuel, making them attractive, making them feel good, providing for their myriad needs and desires. Some philosophers maintain that achieving human level intelligence is impossible without a body. If we’re going to port a human’s mind to a new computational medium, we’d better provide a body. A disembodied mind will quickly get depressed.

There are a variety of bodies that we will provide for our machines, and that they will provide for themselves: bodies built through nanotechnology (i.e., building highly complex physical systems atom by atom), virtual bodies (that exist only in virtual reality), bodies composed of swarms of nanobots, and bodies based on other technologies.

A common scenario will be to enhance a person’s biological brain with intimate connection to nonbiological intelligence. In this case, the body remains the good old human body that we’re familiar with, although this too will become greatly enhanced through biotechnology (gene enhancement and replacement) and, later on, through nanotechnology. A detailed examination of twenty-first century bodies is beyond the scope of this essay, but recreating and enhancing our bodies will be (and has been) an easier task than recreating our minds.

So Just Who Are These People?

To return to the issue of subjectivity, consider: is the reinstantiated mind the same consciousness as the person we just scanned? Are these “people” conscious at all? Is this a mind or just a brain?

Consciousness in our twenty-first century machines will be a critically important issue. But it is not easily resolved, or even readily understood. People tend to have strong views on the subject, and often just can’t understand how anyone else could possibly see the issue from a different perspective. Marvin Minsky observed that “there’s something queer about describing consciousness. Whatever people mean to say, they just can’t seem to make it clear.”

We don’t worry, at least not yet, about causing pain and suffering to our computer programs. But at what point do we consider an entity, a process, to be conscious, to feel pain and discomfort, to have its own intentionality, its own free will? How do we determine if an entity is conscious; if it has subjective experience? How do we distinguish a process that is conscious from one that just acts as if it is conscious?

We can’t simply ask it. If it says “Hey I’m conscious”, does that settle the issue? No, we have computer games today that effectively do that, and they’re not terribly convincing.

How about if the entity is very convincing and compelling when it says “I’m lonely, please keep me company”? Does that settle the issue?

If we look inside its circuits, and see essentially the identical kinds of feedback loops and other mechanisms in its brain that we see in a human brain (albeit implemented using nonbiological equivalents), does that settle the issue?

And just who are these people in the machine, anyway? The answer will depend on who you ask. If you ask the people in the machine, they will strenuously claim to be the original persons. For example, if we scan someone (let’s say myself) and record the exact state, level, and position of every neurotransmitter, synapse, neural connection, and every other relevant detail, and then reinstantiate this massive database of information (which I estimate at thousands of trillions of bytes) into a neural computer of sufficient capacity, the person who then emerges in the machine will think that “he” is (and had been) me, or at least he will act that way. He will say “I grew up in Queens, New York, went to college at MIT, stayed in the Boston area, started and sold a few artificial intelligence companies, walked into a scanner there, and woke up in the machine here. Hey, this technology really works.”

But wait.

Is this really me? For one thing, old biological Ray (that’s me) still exists. I’ll still be here in my carbon-cell-based brain. Alas, I will have to sit back and watch the new Ray succeed in endeavors that I could only dream of.

A Thought Experiment

Let’s consider a little more carefully the issue of just who I am, and who the new Ray is. First of all, am I the stuff in my brain and body?

Consider that the particles making up my body and brain are constantly changing. We are not at all permanent collections of particles. The cells in our bodies turn over at different rates, but the particles (e.g., atoms and molecules) that make up our cells are exchanged at a very rapid rate. I am just not the same collection of particles that I was even a month ago. It is the patterns of matter and energy that are semipermanent (that is, changing only gradually), but our actual material content is changing constantly, and very quickly. We are rather like the patterns that water makes in a stream. The rushing water around a formation of rocks makes a particular, unique pattern. This pattern may remain relatively unchanged for hours, even years. Of course, the actual material constituting the pattern (the water) is replaced in milliseconds. The same is true for Ray Kurzweil. Like the water in a stream, my particles are constantly changing, but the pattern that people recognize as Ray has a reasonable level of continuity. This argues that we should not associate our fundamental identity with a specific set of particles, but rather with the pattern of matter and energy that we represent. Many contemporary philosophers seem partial to this “identity from pattern” argument.

But (again) wait.

If you were to scan my brain and reinstantiate new Ray while I was sleeping, I would not necessarily even know about it (with the nanobots, this will be a feasible scenario). If you then come to me, and say, “good news, Ray, we’ve successfully reinstantiated your mind file, so we won’t be needing your old brain anymore”, I may suddenly realize the flaw in this “identity from pattern” argument. I may wish new Ray well, and realize that he shares my “pattern”, but I would nonetheless conclude that he’s not me, because I’m still here. How could he be me? After all, I would not necessarily know that he even existed.

Let’s consider another perplexing scenario. Suppose I replace a small number of biological neurons with functionally equivalent nonbiological ones (they may provide certain benefits such as greater reliability and longevity, but that’s not relevant to this thought experiment). After I have this procedure performed, am I still the same person? My friends certainly think so. I still have the same self-deprecating humor, the same silly grin — yes, I’m still the same guy.

It should be clear where I’m going with this. Bit by bit, region by region, I ultimately replace my entire brain with essentially identical (perhaps improved) nonbiological equivalents (preserving all of the neurotransmitter concentrations and other details that represent my learning, skills, and memories). At each point, I feel the procedures were successful. At each point, I feel that I am the same guy. After each procedure, I claim to be the same guy. My friends concur. There is no old Ray and new Ray, just one Ray, one that never appears to fundamentally change.

But consider this. This gradual replacement of my brain with a nonbiological equivalent is essentially identical to the following sequence:

(i) scan Ray and reinstantiate Ray’s mind file into new (nonbiological) Ray, and, then

(ii) terminate old Ray.

But we concluded above that in such a scenario new Ray is not the same as old Ray. And if old Ray is terminated, well then that’s the end of Ray. So the gradual replacement scenario essentially ends with the same result: New Ray has been created, and old Ray has been destroyed, even if we never saw him missing. So what appears to be the continuing existence of just one Ray is really the creation of new Ray and the termination of old Ray.

On yet another hand (we’re running out of philosophical hands here), the gradual replacement scenario is not altogether different from what happens normally to our biological selves, in that our particles are always rapidly being replaced. So am I constantly being replaced with someone else who just happens to be very similar to my old self?

I am trying to illustrate why consciousness is not an easy issue. If we talk about consciousness as just a certain type of intelligent skill (the ability to reflect on one’s own self and situation, for example), then the issue is not difficult at all, because any skill or capability or form of intelligence that one cares to define will be replicated in nonbiological entities (i.e., machines) within a few decades. With this type of objective view of consciousness, the conundrums do go away. But a fully objective view does not penetrate to the core of the issue, because the essence of consciousness is subjective experience, not objective correlates of that experience.

Will these future machines be capable of having spiritual experiences?

They certainly will claim to. They will claim to be people, and to have the full range of emotional and spiritual experiences that people claim to have. And these will not be idle claims; they will evidence the sort of rich, complex, and subtle behavior one associates with these feelings. How do the claims and behaviors — compelling as they will be — relate to the subjective experience of these reinstantiated people? We keep coming back to the very real but ultimately unmeasurable issue of consciousness.

People often talk about consciousness as if it were a clear property of an entity that can readily be identified, detected, and gauged. If there is one crucial insight that we can make regarding why the issue of consciousness is so contentious, it is the following:

There exists no objective test that can conclusively determine its presence.

Science is about objective measurement and logical implications therefrom, but the very nature of objectivity is that you cannot measure subjective experience; you can only measure correlates of it, such as behavior (and by behavior, I include the actions of components of an entity, such as neurons). This limitation has to do with the very nature of the concepts “objective” and “subjective”. Fundamentally, we cannot penetrate the subjective experience of another entity with direct objective measurement. We can certainly make arguments about it, e.g., “look inside the brain of this nonhuman entity, see how its methods are just like a human brain.” Or, “see how its behavior is just like human behavior.” But in the end, these remain just arguments. No matter how convincing the behavior of a reinstantiated person, some observers will refuse to accept the consciousness of an entity unless it squirts neurotransmitters, or is based on DNA-guided protein synthesis, or has some other specific biologically human attribute.

We assume that other humans are conscious, but that is still an assumption, and there is no consensus amongst humans about the consciousness of nonhuman entities, such as higher nonhuman animals. The issue will be even more contentious with regard to future nonbiological entities with human-like behavior and intelligence.

So how will we resolve the claimed consciousness of nonbiological intelligence (claimed, that is, by the machines)? From a practical perspective, we’ll accept their claims. Keep in mind that nonbiological entities in the twenty-first century will be extremely intelligent, so they’ll be able to convince us that they are conscious. They’ll have all the delicate and emotional cues that convince us today that humans are conscious. They will be able to make us laugh and cry. And they’ll get mad if we don’t accept their claims. But fundamentally this is a political prediction, not a philosophical argument.

On Tubules and Quantum Computing

Over the past several years, Roger Penrose, a noted physicist and philosopher, has suggested that fine structures in the neurons called tubules perform an exotic form of computation called “quantum computing”. Quantum computing is computing using what are called “qubits”, which take on all possible combinations of solutions simultaneously. It can be considered to be an extreme form of parallel processing (because every combination of values of the qubits is tested simultaneously). Penrose suggests that the tubules and their quantum computing capabilities complicate the concept of recreating neurons and reinstantiating mind files.

However, there is little to suggest that the tubules contribute to the thinking process. Even generous models of human knowledge and capability are more than accounted for by current estimates of brain size, based on contemporary models of neuron functioning that do not include tubules. In fact, even with these tubule-less models, it appears that the brain is conservatively designed with many more connections (by several orders of magnitude) than it needs for its capabilities and capacity. Recent experiments (e.g., the San Diego Institute for Nonlinear Science experiments) showing that hybrid biological-nonbiological networks perform similarly to all-biological networks, while not definitive, strongly suggest that our tubule-less models of neuron functioning are adequate. Lloyd Watts’ software simulation of his intricate model of human auditory processing uses orders of magnitude less computation than the networks of neurons he is simulating, and there is no suggestion that quantum computing is needed.

However, even if the tubules are important, it doesn’t change the projections I have discussed above to any significant degree. According to my model of computational growth, if the tubules multiplied neuron complexity by a factor of a thousand (and keep in mind that our current tubule-less neuron models are already complex, including on the order of a thousand connections per neuron, multiple nonlinearities and other details), this would delay our reaching brain capacity by only about 9 years. If we’re off by a factor of a million, that’s still only a delay of 17 years. A factor of a billion is around 24 years (keep in mind computation is growing by a double exponential).
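The delay figures above can be unpacked with a little arithmetic. The sketch below is not the model of computational growth referred to in the text; it only assumes that absorbing an extra complexity factor F takes log2(F) doublings of capacity, and then works out what average doubling time each quoted delay implies. The function and variable names are made up for illustration.

```python
import math

# Delay figures quoted in the essay: extra complexity factor -> years of delay.
quoted_delays = {1e3: 9, 1e6: 17, 1e9: 24}


def doublings_needed(factor):
    """Number of capacity doublings required to absorb a given factor."""
    return math.log2(factor)


def implied_doubling_times(delays):
    """For each step between quoted factors, return
    (factor, extra years for that step, average years per doubling)."""
    rows = []
    prev_factor, prev_years = 1.0, 0.0
    for factor, years in sorted(delays.items()):
        extra_doublings = doublings_needed(factor) - doublings_needed(prev_factor)
        extra_years = years - prev_years
        rows.append((factor, extra_years, extra_years / extra_doublings))
        prev_factor, prev_years = factor, years
    return rows


if __name__ == "__main__":
    for factor, extra_years, per_doubling in implied_doubling_times(quoted_delays):
        print(f"up to {factor:.0e}x: +{extra_years:.0f} years, "
              f"about {per_doubling:.2f} years per doubling")
```

Under that assumption, each successive factor of a thousand (about ten doublings) costs 9, then 8, then 7 additional years, so the implied average time per doubling shrinks from roughly 0.9 to roughly 0.7 years. A doubling time that keeps shrinking is what growth faster than a single exponential looks like.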

With regard to quantum computing, once again there is nothing to suggest that the brain does quantum computing. Just because quantum technology may be feasible does not suggest that the brain is capable of it. After all, we don’t have lasers or even radios in our brains. Although some scientists have claimed to detect quantum wave collapse in the brain, no one has suggested human capabilities that actually require a capacity for quantum computing.

However, even if the brain does do quantum computing, this does not significantly change the outlook for human-level computing (and beyond), nor does it suggest that brain downloading is infeasible. First of all, if the brain does do quantum computing, this would only verify that quantum computing is feasible. There would be nothing in such a finding to suggest that quantum computing is restricted to biological mechanisms. Biological quantum computing mechanisms, if they exist, could be replicated. Indeed, recent experiments with small-scale quantum computers appear to be successful. Even the conventional transistor relies on the quantum effect of electron tunneling.